Showing results for tags 'morph'.

-

I am trying to morph a tetrahedral Vellum soft body between two shapes using an animated Rest Blend. In my setup, Rest Blend works for cloth but not for tet soft bodies. I've tried troubleshooting by tet-conforming the Rest Blend target, but this doesn't fix the issue. Is there a step I'm missing, or is there a better way to morph tet soft bodies? VellumRestBlend_v003.hiplc

-

Hello, I'm trying to do a particle morph effect, and all I want is to have the animated data imported into the POP network. The effect is from a tutorial by Nicolas Donatelli (https://www.youtube.com/watch?v=B4Zz8Erc09E). The morphing works great, but only on static geometry, and I would like the animated data to be imported as well. It's probably an easy solution; it would be great if someone could figure it out. Attached is my HIP file with the animated geo. I've tried the POP Attract node, which does the job, but it can be quite tricky to control. ParticleMorph_WIP_1.hiplc
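A minimal Python sketch of one way to keep particles locked to an animated target, assuming the target geometry is re-sampled every frame and each particle already has a fixed goal point (for example chosen by id). A pure attraction force always trails a moving goal, so this version adds the goal's own velocity as a feed-forward term and uses attraction only to close the remaining gap. `follow_moving_goal` and its parameters are hypothetical names, and this is plain Python rather than POP code.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def follow_moving_goal(pos: Vec3, goal_now: Vec3, goal_next: Vec3,
                       dt: float, gain: float) -> Tuple[Vec3, Vec3]:
    """Advance one particle by one timestep toward an animated goal point.

    goal_now / goal_next are the goal's positions at this frame and the
    next one, i.e. the animated target geometry sampled every frame.
    Returns (new_position, velocity).
    """
    # Feed-forward term: the velocity the goal itself is moving with.
    goal_vel = tuple((n - c) / dt for c, n in zip(goal_now, goal_next))
    # Feedback term: a corrective pull that closes any remaining gap.
    steer = tuple(gain * (g - p) for p, g in zip(pos, goal_now))
    vel = tuple(a + b for a, b in zip(goal_vel, steer))
    new_pos = tuple(p + v * dt for p, v in zip(pos, vel))
    return new_pos, vel
```

Keeping `gain * dt` at or below 1 avoids overshooting the goal in a single step.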

-

Does anyone know if it's possible in Houdini to conform one humanoid mesh to another? I'm really looking for a procedural method for aligning the dimensions and orientation of a destination mesh with a base reference (source) mesh. I don't think I need a morph SOP, because the destination needs to retain some (or perhaps most) of its own identity, but it needs to conform to the general object space that the source mesh occupies. Blender offers a sort of shrinkwrap function that projects vertices from source to destination, but I'm not convinced this will do what I'm after. Any ideas on this from the community? Just a point in the general direction would be a great help. I'm quite new to Houdini, so I know there is a steep hill ahead, and I've got my hiking boots on! Thanks.
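For the dimensions-and-position part of the question, one simple approach is to fit the destination's bounding box to the source's. The sketch below is plain Python with hypothetical function names, assuming Y-up point lists; it does not handle orientation (that would need an extra step such as aligning principal axes, not shown) or any shrinkwrap-style projection.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def bbox(points: List[Vec3]) -> Tuple[Vec3, Vec3]:
    """Axis-aligned bounding box as (min corner, max corner)."""
    mins = tuple(min(p[i] for p in points) for i in range(3))
    maxs = tuple(max(p[i] for p in points) for i in range(3))
    return mins, maxs


def match_bbox(dest: List[Vec3], source: List[Vec3],
               uniform: bool = True) -> List[Vec3]:
    """Scale and move `dest` so its bounding box lines up with `source`'s.

    uniform=True uses one scale factor taken from the height (Y axis), so
    the destination keeps its own proportions; uniform=False stretches
    each axis independently to fill the source box exactly.
    """
    (dmin, dmax), (smin, smax) = bbox(dest), bbox(source)
    dsize = [dmax[i] - dmin[i] for i in range(3)]
    ssize = [smax[i] - smin[i] for i in range(3)]
    if uniform:
        s = ssize[1] / dsize[1] if dsize[1] else 1.0
        scale = [s, s, s]
    else:
        scale = [ssize[i] / dsize[i] if dsize[i] else 1.0 for i in range(3)]
    dcenter = [(dmin[i] + dmax[i]) / 2 for i in range(3)]
    scenter = [(smin[i] + smax[i]) / 2 for i in range(3)]
    # Offset each point from the dest center, scale it, re-center on source.
    return [tuple(scenter[i] + (p[i] - dcenter[i]) * scale[i] for i in range(3))
            for p in dest]
```

A uniform scale keeps the destination's proportions, which fits the goal of retaining its identity; the non-uniform mode is closer to a full conform.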

-

Hello everybody, I was wondering if anybody could explain how to go about creating morphing animations like the following in Houdini, as I am fairly new. I appreciate your time and help in advance. Link to the reference: https://www.instagram.com/p/CGF53Vdnh92/?utm_source=ig_web_copy_link

-

Hey guys, how can I do something like this, but within a POP network? Thx


-

Hello everybody, I'm trying to figure out an effect done by Simon Homedal. Here are pics of the process: it seems to be a subdivided triangular mesh that animates into an infinite fractal. Could this be done with subdivisions, extrusions, blend, For-Each loops, and a solver? If so, how do you keep Houdini from crashing as the pieces get smaller? By putting an end value on the For-Each node? I watched the Entagma video on fractals and For-Each divisions, and now I'm trying to figure out how to combine all this stuff. Reference pictures: Here is the video (second 0:28). Simon also roughly explains the method in his IAMAG 2016 class at 32:20. He said he uses a polygon, subdivides it, animates it with a blend shape, and then builds everything else from there. The screen recording is available for US$100, but he only shows two quick Houdini screens, so it's rather expensive for that amount of info in my opinion (although the overall talk is great). At 34:20 there is a similar effect, again with a sphere. Any info would be great; in the meantime I'll keep thinking and playing with Houdini. Thanks!
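On the crash question: a 4-way midpoint split quadruples the triangle count, so depth n produces 4**n triangles (depth 10 already gives over a million). A minimal Python sketch of recursive subdivision with two end values, a maximum depth and a minimum edge length; it illustrates the stopping logic in general and is not Simon Homedal's setup. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Tri = Tuple[Point, Point, Point]


def midpoint(a: Point, b: Point) -> Point:
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def shortest_edge(tri: Tri) -> float:
    a, b, c = tri
    return min(math.hypot(a[0] - b[0], a[1] - b[1]),
               math.hypot(b[0] - c[0], b[1] - c[1]),
               math.hypot(c[0] - a[0], c[1] - a[1]))


def subdivide(tri: Tri, max_depth: int, min_edge: float = 0.0,
              depth: int = 0) -> List[Tri]:
    """Recursively split a triangle into 4 until an end condition is hit."""
    # End values: a hard depth cap, plus a size cutoff for tiny pieces.
    if depth >= max_depth or shortest_edge(tri) < min_edge:
        return [tri]
    a, b, c = tri
    ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
    children = [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]
    out: List[Tri] = []
    for child in children:
        out.extend(subdivide(child, max_depth, min_edge, depth + 1))
    return out
```

In a For-Each or solver setup the same idea applies: cap the iteration count, and/or stop splitting pieces whose size drops below a threshold.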

-

I have quite a simple question but haven't been able to figure it out yet. Using attribfrommap I have particles getting generated from a texture (e.g. circle). How can I attract/morph these particles to another texture (e.g. rectangle)? Thanks

-

Hi guys, Far far far away, in a galaxy... there was someone who had a tutorial online about morphing text into a logo. He did some font fixing, created a trail of the morph, and some Mantra rendering stuff. It came out around the same time as the CMIVFX ParticleFx tutorial. I know it's an old one, but I'm looking for it and have no clue what it's called or which company made it. Anyone? I'm just looking for that specific name, not a solution to fix something ;-) thnx

-

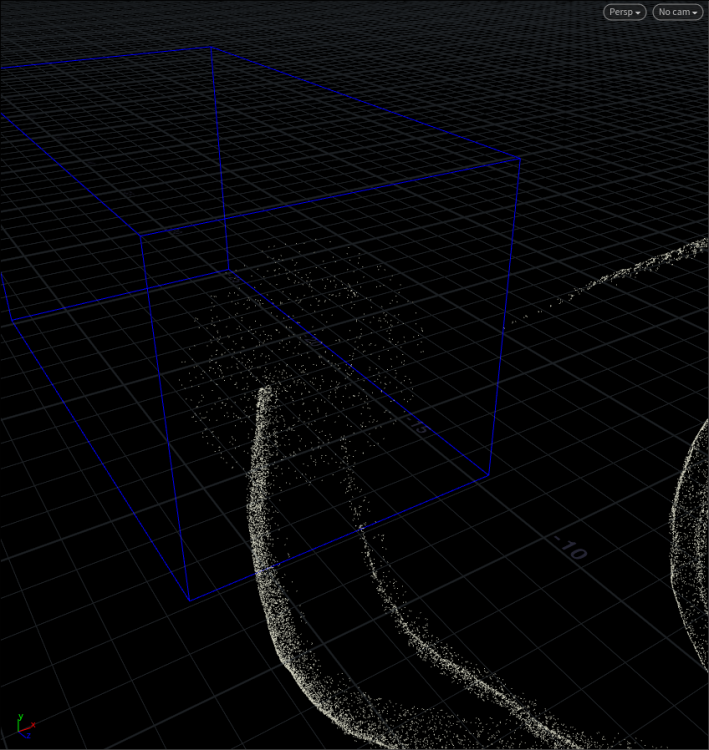

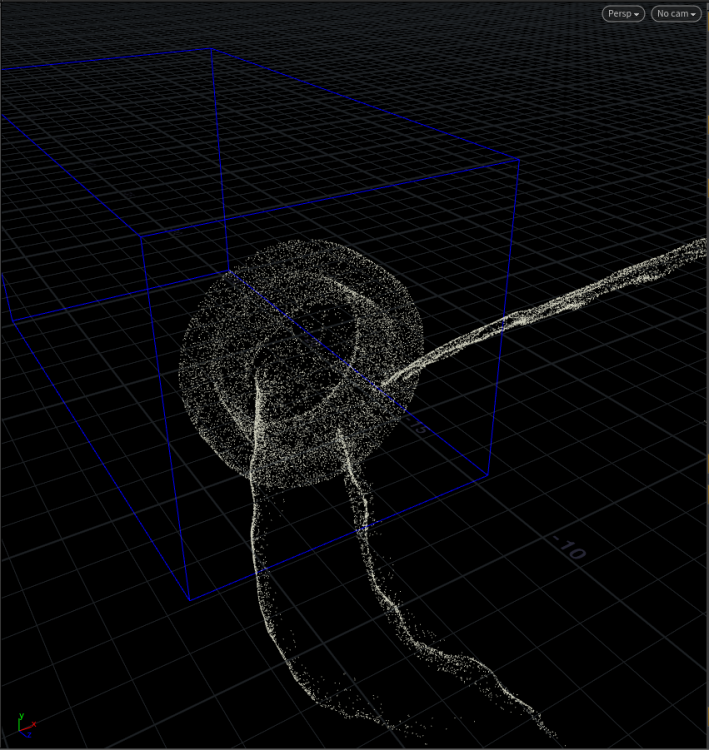

Hi, I found this setup for a particle morph:

```
// POP Wrangle: the goal geometry is wired into the third input (@OpInput3, input index 2)
float strength = ch("strength");
float arrivedistance = ch("arrivedistance");
// Pick a goal point per particle from its id, wrapping around the goal's point count
int goalpt = @id % npoints(@OpInput3);
vector goalpos = point(2, "P", goalpt);
f@goaldistance = distance(goalpos, @P);
vector goaldir = normalize(goalpos - @P);
// Full speed when far away, slowing to zero as the particle arrives (fit() clamps)
@v = goaldir * strength * fit(@goaldistance, 0, arrivedistance, 0, 1);
```

The setup is very nice, but it would be super to add some functionality. I'm new to VEX. Is it possible to move each particle to the nearest point on the target? At the moment (see the attached screenshots) the particles morph in a random way (they evenly fill the entire object). It would be perfect to fill the object starting from the closest point. I will be very grateful for your help. Regards, Pawel
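A Python sketch of two pieces: the wrangle's velocity line translated one-to-one, and a greedy nearest-point assignment as one possible answer to "fill from the closest point". The greedy step is an assumption about what's wanted, not an established method for this setup; the function names are hypothetical. Simply looking up the nearest goal point per particle (in VEX, `nearpoint()` on the goal input) tends to make many particles pile onto the same few points, which is why this version hands out each goal point only once.

```python
import math
from typing import Dict, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def greedy_nearest_goals(particles: Sequence[Vec3],
                         goals: Sequence[Vec3]) -> Dict[int, int]:
    """Give each particle its own goal point, closest pairs first.

    Every (particle, goal) pair is sorted by distance and claimed greedily,
    so no goal point is used twice and the shape fills from the points
    nearest the incoming particles. O(N*M log(N*M)): fine for a sketch,
    too slow for large point counts.
    """
    pairs = sorted((math.dist(p, g), pi, gi)
                   for pi, p in enumerate(particles)
                   for gi, g in enumerate(goals))
    assigned: Dict[int, int] = {}
    used = set()
    limit = min(len(particles), len(goals))
    for _, pi, gi in pairs:
        if pi in assigned or gi in used:
            continue
        assigned[pi] = gi
        used.add(gi)
        if len(assigned) == limit:
            break
    return assigned


def goal_velocity(pos: Vec3, goal: Vec3, strength: float,
                  arrive_distance: float) -> Vec3:
    """Python version of the wrangle's velocity line."""
    d = math.dist(pos, goal)
    if d == 0.0:
        return (0.0, 0.0, 0.0)
    direction = tuple((g - p) / d for p, g in zip(pos, goal))
    # Same as VEX fit(d, 0, arrivedistance, 0, 1), which clamps to [0, 1].
    ramp = min(max(d / arrive_distance, 0.0), 1.0)
    return tuple(c * strength * ramp for c in direction)
```

In Houdini you would probably compute this assignment once, for example when the particles are born, store the result as an integer attribute, and use it in place of `@id % npoints(...)`.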

-

I'm looking to transform one shape of packed RBDs into another. I've looked at the forum and a few of the examples, especially the LEGO car, which is awesome, but they all tend to transform the same set of packed RBDs from a rest shape to the complete form. I am looking to transform from one shape of object to another shape of object. This is a pure position and rotation transformation, and I think I'm just not understanding how rotation works. To show this, I've made a simple Houdini file. Any help to better understand this would be appreciated. packedgeo_shapetoShape.hip
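When blending pieces between two layouts, interpolating rotation as Euler angles is a common source of flipping; interpolating quaternions with slerp avoids that. A minimal Python sketch, assuming piece i in shape A is paired with piece i in shape B and that rotations are unit quaternions in (x, y, z, w) order (packed setups typically carry these in an `orient` point attribute, but check your own file). Function names are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # assumed (x, y, z, w) order


def axis_angle(axis: Vec3, angle: float) -> Quat:
    """Unit quaternion for a rotation of `angle` radians about `axis`."""
    n = math.sqrt(sum(c * c for c in axis))
    s = math.sin(angle / 2) / n
    return (axis[0] * s, axis[1] * s, axis[2] * s, math.cos(angle / 2))


def slerp(q0: Quat, q1: Quat, t: float) -> Quat:
    """Spherical linear interpolation between two unit quaternions."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q are the same rotation; flip one to take the short way round.
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical: plain lerp avoids dividing by sin(theta) ~ 0.
        q = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        w0 = math.sin((1 - t) * theta) / math.sin(theta)
        w1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(w0 * a + w1 * b for a, b in zip(q0, q1))
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


def blend_piece(p0: Vec3, q0: Quat, p1: Vec3, q1: Quat, t: float):
    """Blend one packed piece from shape A's transform to shape B's."""
    pos = tuple(a + t * (b - a) for a, b in zip(p0, p1))
    return pos, slerp(q0, q1, t)
```

Position and rotation are blended independently here: position linearly, rotation along the shortest arc between the two orientations.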

-

Here's a quick and clean method to morph geometry with the new Point SOP in H16. Naturally, the point counts of the objects must be equal for a sensible effect. Morpher.hipnc
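The underlying idea, independent of the Point SOP setup in the attached file, is a per-point linear blend, which is why the counts must match: point i of A can only move toward point i of B. A minimal Python sketch (hypothetical function name):

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def morph(a: Sequence[Vec3], b: Sequence[Vec3], t: float) -> List[Vec3]:
    """Linear per-point blend: point i of A moves toward point i of B."""
    if len(a) != len(b):
        # Without a 1:1 pairing by point number there is nothing to blend.
        raise ValueError(f"point counts differ: {len(a)} vs {len(b)}")
    return [tuple(pa + t * (pb - pa) for pa, pb in zip(p, q))
            for p, q in zip(a, b)]
```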

-

Hey all, I'm a compositing student, but for a current group project we have to make a bumper, and we ended up doing it in Houdini because it just looks best. I didn't have much Houdini experience before this, apart from watching some courses/tutorials and playing around a little. The learning curve is quite steep and I've run into several snags. First of all, I followed this tutorial to get the first morph/transition, and things panned out pretty well. However, I now have a third model, maybe even a fourth, and I don't know how to add these into the mix. In my current file there is just a simple Blend Shapes node for the third model, but it's quite uninteresting. That's it for starters, at least. Beyond that, I'm trying to figure out how to change the colors between each model as well. The project file is probably pretty messy since it's my first project in Houdini. I really love the program and want to keep working in it! Hopefully someone out there can take some time to look it over and give some input. Attached is a .rar with the .hip, the .fbx camera, and the three .obj models used. bumper.rar
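For more than two models, one approach is to blend through them in sequence with a single parameter: 0 to 1 blends model 1 into model 2, 1 to 2 blends model 2 into model 3, and so on. The same function can blend colors, since `Cd` is just another per-point vector. A minimal Python sketch with hypothetical names, assuming every model has the same point count and order (separate .obj files may not, without remeshing or transferring first).

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def lerp3(p: Vec3, q: Vec3, t: float) -> Vec3:
    return tuple(a + t * (b - a) for a, b in zip(p, q))


def sequence_blend(stages: Sequence[Sequence[Vec3]], t: float) -> List[Vec3]:
    """Blend through several targets in order: t=0 is stage 0, t=1 stage 1, ...

    Works for any per-point vector attribute, so the same call can drive
    positions (P) and colors (Cd). All stages need the same point count.
    """
    if len(stages) < 2:
        raise ValueError("need at least two stages")
    last = len(stages) - 1
    t = min(max(t, 0.0), float(last))
    i = min(int(t), last - 1)  # index of the stage being left
    local = t - i              # 0..1 progress toward stage i + 1
    return [lerp3(p, q, local) for p, q in zip(stages[i], stages[i + 1])]
```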

-

Hi there, I am trying to build a propagation-driven blend/morph effect to change the look of a geometry, but I'm already stuck on transferring the attributes between geo A and geo B. I managed to build a setup where I change the geometry using an Attribute VOP, subtracting A from B. Here come the buts: I couldn't animate it by keyframing the Bias of a Mix node, and I couldn't use a propagation effect with scattered points. In the second setup, I tried using a Scatter node instead of the original geometry. It works, but the point order doesn't seem to match, so when I change the bias of the Mix node, all the points change position?! In the Scatter node I unchecked "Randomize Point Order", but it had no effect. I also tried a setup that changes from a low-res mesh to a high-res mesh, but I couldn't get it working with the scattered points because of the Primitive Attribute node in the second VOP. I also tried to recreate it with point cloud nodes, but failed as usual. I am pretty new to Houdini and I know I'm missing a lot of foundational knowledge; it would be great if someone could give me a hint about what I'm doing wrong. Thanks and all the best, Dennis 170422_position_transfer_001.hiplc
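A minimal Python sketch of a propagation-driven blend: each point gets a weight from a spherical front growing out of an origin, and P = A + (B - A) * weight. Names are hypothetical. Because A and B are paired by point number, two independent Scatter results will not blend cleanly; each scatter produces its own unrelated set of points, which is one likely reason all the points jump around when the Mix bias changes.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def propagation_weights(points: Sequence[Vec3], origin: Vec3,
                        radius: float, falloff: float) -> List[float]:
    """Per-point blend weight from a spherical front growing out of `origin`.

    Points inside the front get 1, points ahead of it get 0, with a linear
    ramp `falloff` units wide. Animate `radius` over time to propagate.
    """
    if falloff <= 0.0:
        raise ValueError("falloff must be positive")
    return [min(max((radius - math.dist(p, origin)) / falloff, 0.0), 1.0)
            for p in points]


def propagate_blend(a: Sequence[Vec3], b: Sequence[Vec3], origin: Vec3,
                    radius: float, falloff: float) -> List[Vec3]:
    """P = A + (B - A) * w, pairing points by point number."""
    if len(a) != len(b):
        raise ValueError("A and B need the same point count and order")
    weights = propagation_weights(a, origin, radius, falloff)
    return [tuple(pa + w * (pb - pa) for pa, pb in zip(p, q))
            for p, q, w in zip(a, b, weights)]
```

Animating `radius` from 0 upward makes the change spread outward; in a Houdini setup the weight could be a per-point attribute that the blend step reads instead of a single keyframed bias.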


-

I want a puddle of water/lava that rises up to form a character standing up, which I have animated. I've tried doing it in reverse, by melting him and playing it backwards, but it looks wrong, and I want to learn how to do it properly. Are there any good tutorials that might help? Any help would be amazing! Thanks

-

Hey, while waiting for a sim at work, I'm playing with Houdini and trying to reproduce an effect Aixsponza did for Nike Presto. Any good ideas on how they did the effect at second 13? I attached a very basic setup; maybe someone has some input. Morph.hip

-

Hello everyone, I am trying to achieve an effect similar to this one by Ivan: On the SideFX forums, Ivan mentions that he creates two fields, one to attract the particles and one to keep the particles on the surface. I managed to create the force field to attract the particles, but I can't find a way to create a field that keeps the particles inside the geometry and forms the shape. Any tips or help are highly appreciated.
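One possible reading of the two-field idea, sketched in Python with a signed distance function (SDF) standing in for the target shape: far from the shape, a constant attraction pulls particles in along the SDF gradient; near it, a spring toward the zero level set holds them on the surface. With `fill=True`, particles that are already inside feel no force, so they stay inside and fill the volume. This is an assumption about what Ivan describes, not his setup; the names are hypothetical. In Houdini the SDF would typically come from a VDB of the target, sampled in a POP Wrangle with `volumesample()` and `volumegradient()`.

```python
import math
from typing import Callable, Sequence, Tuple

Vec3 = Tuple[float, float, float]
SDF = Callable[[Sequence[float]], float]


def sphere_sdf(center: Vec3, radius: float) -> SDF:
    """Signed distance to a sphere: negative inside, positive outside."""
    return lambda p: math.dist(p, center) - radius


def sdf_gradient(sdf: SDF, p: Vec3, h: float = 1e-4) -> Vec3:
    """Central-difference gradient; points away from the surface outside."""
    grad = []
    for i in range(3):
        hi, lo = list(p), list(p)
        hi[i] += h
        lo[i] -= h
        grad.append((sdf(hi) - sdf(lo)) / (2 * h))
    return tuple(grad)


def shape_force(p: Vec3, sdf: SDF, attract: float = 1.0, stick: float = 5.0,
                band: float = 0.1, fill: bool = False) -> Vec3:
    d = sdf(p)
    if fill and d <= 0.0:
        # Fill mode: particles already inside the shape are left alone.
        return (0.0, 0.0, 0.0)
    g = sdf_gradient(sdf, p)
    if d > band:
        # Field 1, attraction: constant-strength pull toward the surface.
        return tuple(-attract * c for c in g)
    # Field 2, surface: a spring toward the zero level set, pulling in
    # points just outside and pushing out points that sank inside.
    return tuple(-stick * d * c for c in g)
```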

-

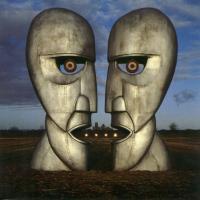

Hey guys, I just watched the awesome work by Aixsponza and I'm wondering how to achieve this kind of effect. I've included the video below, with some still frames first. Are they using VDBs for the morph, and Attribute Transfer to drive the transformation? As for the triangle effect, is it a PolyReduce with PolyExtrude, or some Point VOP to extrude them? I really love this kind of morph transformation, and any insight on how to approach it will be helpful. Thanks!

-

Hi everyone, I am trying to create a flower-blooming animation in Houdini. There are five varieties of flowers with different shapes. What would be the best approach to create such an animation? I am looking to animate something like this:
