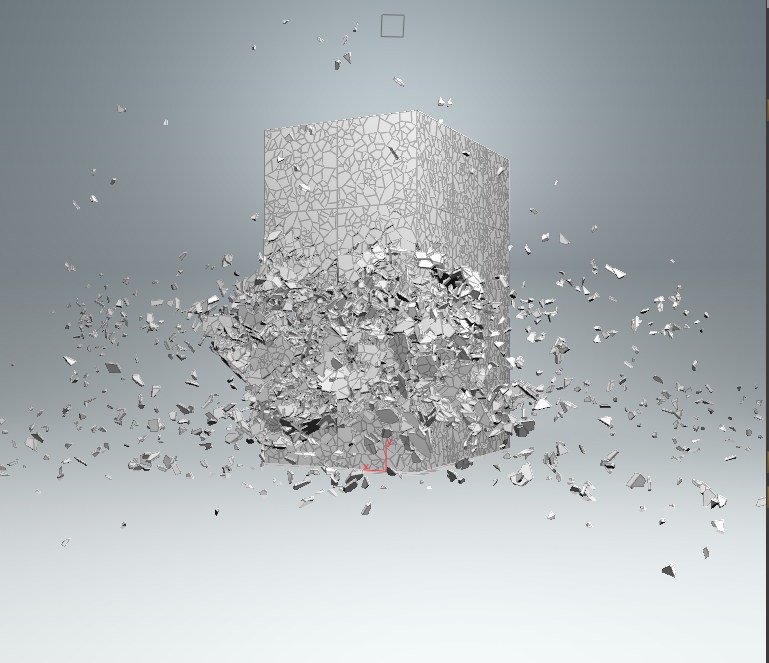

Hello,

I recently went through Steven Knipping's tutorial on Rigid Bodies (Volume 2), and now I have a nice scene with a building blowing up. The simulation runs on low-res geometry, and Knipping then uses instancing to replace those pieces with high-res geometry that has detailing in the break areas.

The low-res geometry has about 500K points as packed disk primitives, so it runs pretty fast in the editor. It also gets ported to Redshift in a reasonable amount of time (~10 seconds). However, when I switch to the high-res geometry, as you might guess, the 10 seconds turn into around 4 minutes, with a crash rate of about 30% due to memory. When I unpacked it, I think it was about 40M points, which I can understand is slow to write and read through the Houdini-Redshift bridge. But is there no way to make Redshift use the instancing and the packed disk primitives directly?

My theory is that Redshift unpacks all of it, and that's why it takes forever. When I unpack it myself beforehand, it works somewhat faster, at least for the low-res geometry; the high-res just crashes. I probably don't understand how Redshift works and have the wrong expectations, so it would be nice if someone could give me an explanation.

Attached you'll find an archive with the scene, including one frame (frame 18) of the simulation and the folder with the saved low- and high-res instance geometry.

Thanks a lot for your help,
Martin

(Here's a picture of the low-res geometry; the high-res version is basically every piece bricked down into ~80 times more polygons.)

Martin_RBDdestr_RSproblem_archive.zip
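As a rough sanity check on the numbers above, here is a minimal sketch of the arithmetic. It only uses the figures quoted in the post (about 500K low-res points, about 80 times more detail) and assumes, purely for illustration, that each unpacked point carries nothing but a single float32 xyz position. The function names are hypothetical, and real geometry with normals, UVs, and other attributes would need considerably more memory.

```python
# Back-of-the-envelope check of the figures quoted in the post.
LOW_RES_POINTS = 500_000    # from the post: ~500K points in the low-res geometry
DETAIL_FACTOR = 80          # from the post: ~80x more polygons (used here as a point ratio)
BYTES_PER_POSITION = 3 * 4  # assumption: one float32 xyz position per point, no other attributes


def estimate_unpacked_points(low_res_points: int, detail_factor: int) -> int:
    """Estimate the point count after swapping in the high-res pieces and unpacking."""
    return low_res_points * detail_factor


def position_bytes(points: int, bytes_per_position: int = BYTES_PER_POSITION) -> int:
    """Lower bound on memory for the point positions alone."""
    return points * bytes_per_position


high_res_points = estimate_unpacked_points(LOW_RES_POINTS, DETAIL_FACTOR)
print(f"{high_res_points:,} points")
print(f"{position_bytes(high_res_points) / 1e6:.0f} MB for positions only")
```

The estimate lines up with the roughly 40M points seen after unpacking. It says nothing about how Redshift handles packed primitives internally; it only shows the size of the data if every piece is fully unpacked.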

16 replies

Tagged with: instancing, millions of points (and 2 more)