Search the Community

Showing results for tags 'instancing'.

-

In Solaris, I’m using RenderMan Lama shader displacement via Material Library. Displacement is evaluated at render time and renders correctly. When scattering rocks with Asset Instancer, instancing happens on the pre-displaced SOP geometry, so once shader displacement is applied the instances float or intersect the surface. Questions: Can Asset Instancer evaluate or reference render-time shader displacement? Is there any supported way to procedurally link Material Library displacement parameters to instancing so lookdev changes stay aligned? Or is SOP-level proxy displacement / ray projection the expected production workflow? I’m aware render-time displacement isn’t available at SOP level; mainly looking to confirm best practice, not hacks. Houdini 19.5/20.x

-

I recently ran into an annoying problem rendering .ass files with instanced packed Alembics. The basic setup: packed Alembic geometries go through a "Copy to Points" SOP, where they are packed and instanced, get manipulated a bit, and are then exported to a .ass file through an Arnold ROP. These .ass files are sent to Maya to render, and they have rendered great. The issue appears after I pack and instance my packed Alembics. I added a little processing on the "transform" prim intrinsic to give the instances a little rotation and to scale each instance from 0 to 1. Rotating the instances through the "transform" prim intrinsic works great and renders fine. However, scaling the "transform" prim intrinsic matrix scatters the instances randomly all over the place in the render. The Houdini viewport shows the instances scaling correctly; the problem only shows up after exporting the .ass and rendering in Maya. This is all I am doing to scale the instances (f@grow is just an animated attribute that drives the scaling): matrix3 transform = primintrinsic(0,"transform",@primnum); matrix3 scaleMatrix = ident(); scale(scaleMatrix,set(f@grow,f@grow,f@grow)); setprimintrinsic(0,"transform",@primnum,transform*scaleMatrix); Does anyone have any experience with this? I can't upload a file just yet because it's a work thing, but it's possible I can recreate it. This is really driving me crazy, so any suggestions for something fresh to try would be great!
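
For anyone reasoning about the snippet above, here is a small plain-Python sketch of the same matrix math. It is not Houdini or Arnold API, and every function name in it is hypothetical. It shows two things that can be checked by hand: a uniform scale commutes with a rotation, so the multiplication order should not matter for uniform scaling, and a grow value of exactly 0 yields a singular matrix (determinant 0). Whether a singular instance matrix is what upsets the renderer is only an assumption worth testing, for example by clamping grow to a small positive value.

```python
import math

def mat3_mul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def uniform_scale(s):
    """3x3 matrix that scales all three axes by s."""
    return [[s if i == j else 0.0 for j in range(3)] for i in range(3)]

def rot_y(degrees):
    """3x3 rotation about the Y axis (stand-in for an instance's rotation)."""
    c = math.cos(math.radians(degrees))
    s = math.sin(math.radians(degrees))
    return [[c, 0.0, -s], [0.0, 1.0, 0.0], [s, 0.0, c]]

def det3(m):
    """Determinant of a 3x3 matrix; 0 means the matrix cannot be inverted."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def scaled_transform(transform, grow, min_grow=1e-4):
    """Apply a uniform scale, clamped so the result never becomes singular.

    min_grow is an arbitrary illustrative threshold, not a known renderer limit.
    """
    return mat3_mul(transform, uniform_scale(max(grow, min_grow)))
```

If clamping makes the problem disappear, the zero-scale frames are the likely culprit; if not, the cause lies elsewhere in the export.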

-

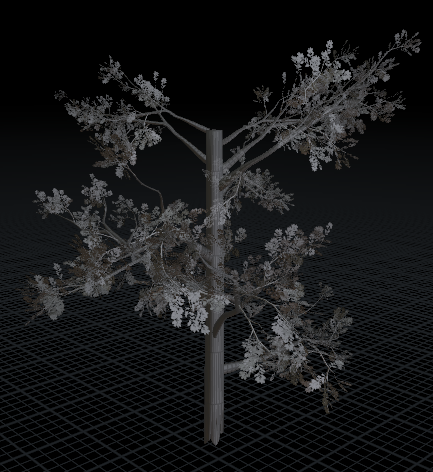

Hello! I'll try to explain it quickly. I have created a tree with a trunk and leaves, and saved it as a packed USD. I am using Solaris/Karma to render lots of instanced copies of the tree, and it works, but sometimes the trees lose their leaves for some reason. The odd thing is, if I go inside the instancer node (inside LOPs) and mess around with the "method" parameters, sometimes the leaves come back and sometimes they disappear again (even when selecting a method that didn't work before), like Russian roulette, basically. It's very odd and it feels like a bug, or am I missing something?

-

Hello! I'm quite new to Houdini, and I'm trying to create an environment. The biggest problem is performance, since it constantly fills all my RAM (64 GB). When I scatter the elements with low-poly geometry and low density, the scene kind of works, but if I add higher-resolution versions of the objects, it just freezes. I have read that I should be using packed geometry or instances, but I can't find the workflow to use with Karma/Solaris. Inside the LOP network I use a Copy to Points to create the copies of the trees/rocks. Many thanks!

-

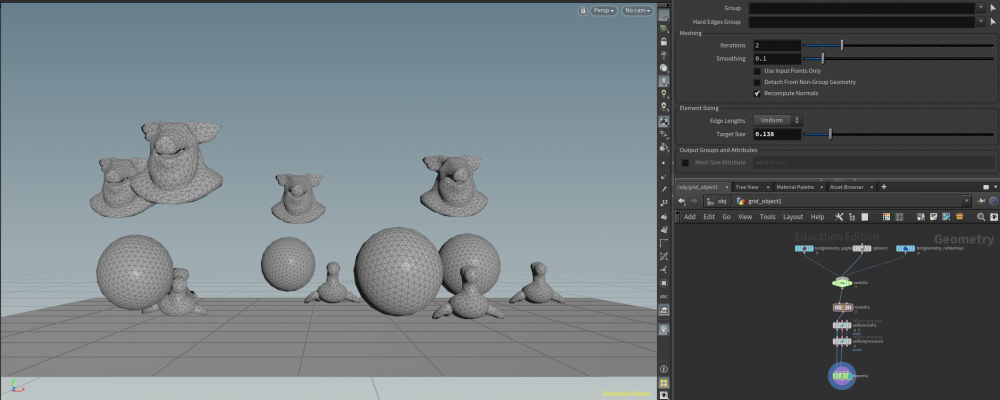

With the help of both the Redshift community and resources here, I finally figured out the proper workflow for dealing with Redshift proxies in Houdini.

Quick summary: Out of the box, Mantra does a fantastic job automagically dealing with instanced packed primitives, carrying all the wonderful Houdini efficiencies right into the render. If you use the same workflow with Redshift, though, RS unpacks all of the primitives, consumes all your VRAM, blows out of core, devours your CPU RAM, and causes a star in a nearby galaxy to supernova, annihilating several inhabited planets in the process. Okay, maybe not that last one, but you can't prove me wrong so it stays.

The trick is to use RS proxies in place of Houdini instances, with the proxies in turn driven by the Houdini instances. A lot of this was based on Michael Buckley's post. I wanted to share an annotated file with some additional tweaks to make it easier for others to get up to speed quickly with RS proxies. Trust me; it's absolutely worth it. The speed compared to Mantra is just crazy.

A few notes:

- Keep the workflow procedural by enabling Compute Number of Points in the Points Generate SOP instead of hard-coding a number.
- Use paths that reference the Houdini $HIP and/or $JOB variables. For some reason the RS proxy calls fail if absolute paths are used.
- Do not use the SOP Instance node in Houdini; instead use the instancefile attribute in a wrangle. This was confusing as it doesn't match the typical Houdini workflow for instancing.
- There are a lot of posts on RS proxies that mention you always need to set the proxy geo at the world origin before caching them. That was not the case here, but I left the bypassed transform nodes in the network in case your mileage varies.
- The newest version of Redshift for Houdini has an Instance SOP Level Packed Primitives flag on the OBJ node under the Instancing tab. This is designed to basically automatically do the same thing that Mantra does. It works for some scenarios but not all; it didn't work for this simple wall fracturing example. You might want to take that option for a spin before trying this workflow.

If anyone just needs the Attribute Wrangle VEX code to copy, here it is: v@pivot = primintrinsic(1, "pivot", @ptnum); 3@transform = primintrinsic(1, "transform", @ptnum); s@name = point(1, "name_orig", @ptnum); v@pos = point(1, "P", @ptnum); v@v = point(1, "v", @ptnum);

Hope someone finds this useful. -- mC

Proxy_Example_Final.hiplc

- 13 replies

- Tagged with: instancing, proxies (and 3 more)

-

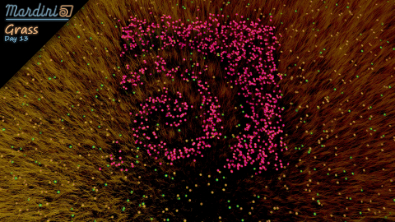

Free video tutorial can be watched at any of these websites: Fendra Fx Vimeo Side Fx Project file can be purchased at Gumroad here: https://gumroad.com/davidtorno?sort=newest

-

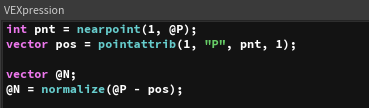

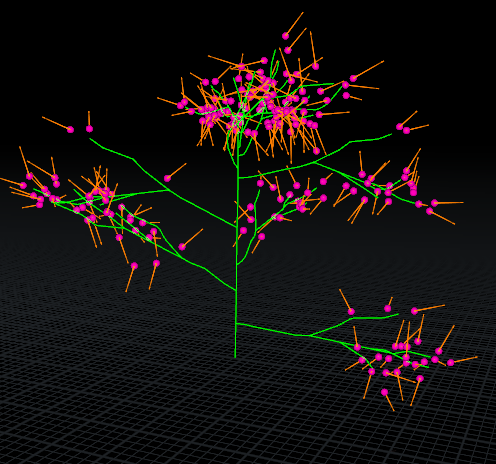

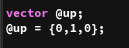

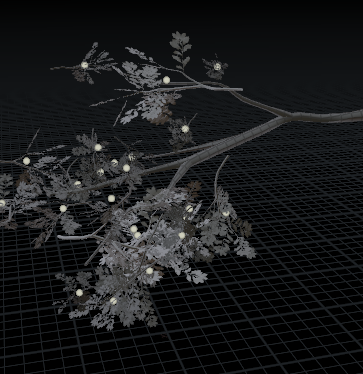

Hello all, I have built a rig that allows for the dynamic simulation of trees. I was able to define an instance point which is offset from the tree's branches. This enables copying a packed leaf primitive onto the point. The next step is to update the rotation of the packed primitive. It seems the Copy to Points has defaulted to using the z-axis (the vector pointing in the {0,0,1} direction). The tree includes a central wire which captures the deformation as it moves through space. I have been able to construct a normal using the following logic:

- For each leaf point, find the closest point on the wire
- Compare the point positions (@P{leaf point} - @P{wire point})
- Normalize the resulting vector and set it as an @N attribute

VEX CODE

However, when using this @N (normal) on the Copy to Points, the leaf geometry picks up this @N (built above in VEX) and discards the desired z-axis vector of {0,0,1}. Here is a diagram demonstrating the results.

Figure 1 - This is the result of the VEX code written above (visualized left); notice that when using the VEX normal the leaf is unable to correctly orient itself to the point (visualized right).

The custom VEX normal is required to guide the orientation as the tree deforms. This is shown in the following .gif animation.

Figure 2 - A .gif animation demonstrating the updating normal as the tree deforms

I have also tried adding an @up vector to see if that resolves the issue; however, that route is also producing undesirable results. I have thought about using some form of quaternion or rotation matrix; however, I am currently studying these linear algebra concepts and I need time and guidance before fully understanding how to apply the knowledge in Houdini. Could someone please offer guidance in this regard? I am working on a supplementary .hip file which should be posted shortly. Warm regards, Kimber
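
The rotation-matrix route mentioned above can be sketched in a few lines of plain Python. This is not the poster's VEX and not Houdini API, and all function names are hypothetical. It builds the leaf normal from the wire as described, then completes it into an orthonormal frame using an up vector and two cross products. The sketch assumes the usual Copy to Points convention that the copy's Z axis follows N and its Y axis leans toward up; it also falls back to a different helper axis when up is parallel to the normal, which is one common reason an up vector gives unstable results.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def length(v):
    return math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)

def normalize(v):
    n = length(v)
    if n < 1e-12:
        raise ValueError("cannot normalize a zero-length vector")
    return (v[0] / n, v[1] / n, v[2] / n)

def leaf_normal(leaf_pos, wire_pos):
    """Direction from the closest wire point out to the leaf point."""
    return normalize(sub(leaf_pos, wire_pos))

def frame_from_normal(n, up=(0.0, 1.0, 0.0)):
    """Return orthonormal (x, y, z) axes with z along n and y toward up."""
    z = normalize(n)
    x = cross(up, z)
    if length(x) < 1e-8:
        # up is parallel to n, so pick another helper axis
        x = cross((1.0, 0.0, 0.0), z)
    x = normalize(x)
    y = cross(z, x)  # already unit length because z and x are orthonormal
    return x, y, z
```

The three returned axes are the rows of a rotation matrix; in Houdini they could be stored as a matrix or converted to an orient quaternion, which is an assumption about where one would take this next rather than something shown in the thread.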

- 4 replies

- Tagged with: transform, vector spaces (and 3 more)

-

Hi everyone. I'm trying to create a setup that instances a few models randomly as Vellum soft bodies. I want them to spawn four at a time, every twenty or so frames. I'm almost there, I think; however, my current solution uses a switch that picks a random model based on time, so I always end up with sets of four of the same model for every round of instancing. I feel like the solution should be relatively simple, but I don't mind changing my approach significantly if necessary. I've attached a .hip file and an image. As you can see in the picture, there are very obvious groups of objects that formed at the same time: VellumInstancing.hipnc
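
The symptom described above (every batch of four gets the same model) usually comes from seeding the random choice with time alone. A minimal plain-Python sketch of the alternative, with hypothetical function names and not tied to any Houdini node, is to seed per point by mixing the frame with a point id:

```python
import random

def variant_for_point(frame, point_id, num_variants):
    """Pick a model index that depends on both the frame and the point."""
    # Mixing the point id into the seed gives each point in a batch its own
    # draw; the multiplier is an arbitrary large prime chosen for illustration.
    seed = frame * 1_000_003 + point_id
    return random.Random(seed).randrange(num_variants)

def spawn_batch(frame, batch_size, num_variants):
    """Variant indices for all points emitted on a given frame."""
    return [variant_for_point(frame, i, num_variants) for i in range(batch_size)]

# Example: four objects every twenty frames, chosen from three models.
for frame in range(0, 100, 20):
    print(frame, spawn_batch(frame, batch_size=4, num_variants=3))
```

The choice is deterministic, so re-cooking the same frame gives the same models, but it no longer ties the whole batch to one value.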

- 4 replies

- Tagged with: vellum, instancing (and 2 more)

-

Hi all, First of all, please let me know if somebody else has already asked this question and got an answer. I looked for an answer here and didn't find any; someone asked on the SideFX forum but nobody answered. Anyway, I have a scene with hundreds of thousands of points in it that I want to render as instances. It works fine when I render with Mantra, where the @instancepath attribute works just fine. It does work with Redshift too, but it's very slow and it just freezes when the number of points goes up; even 1000 points take forever to render with RS. So I was wondering if I'm missing something. Thank you, E

-

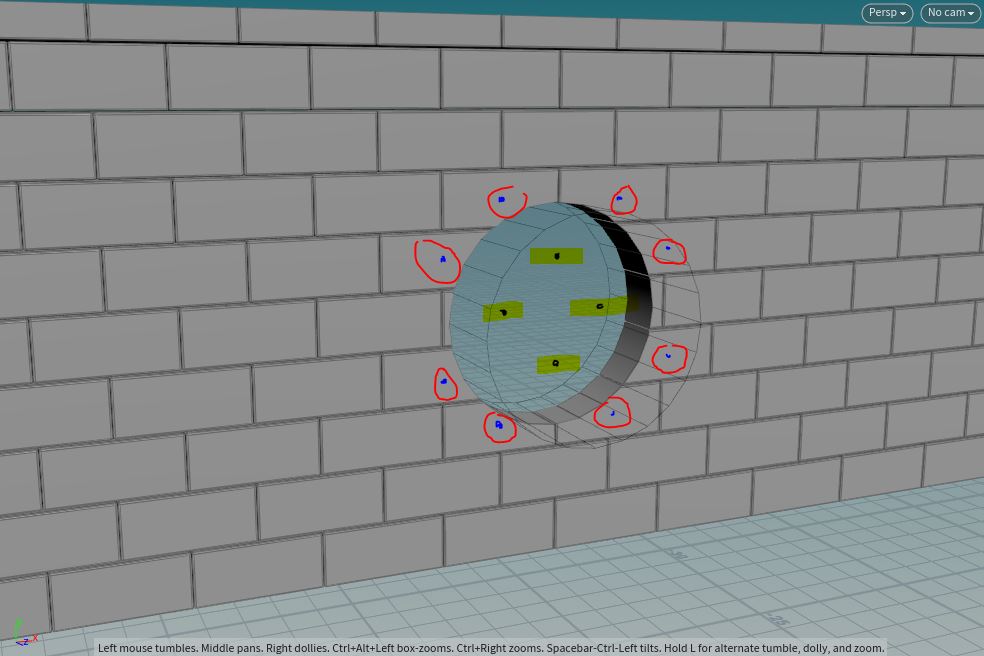

I'm having trouble figuring out the best way to achieve something. I'm creating a wall of bricks. Each brick will later be replaced by a far more detailed brick. I'm trying to keep my scene as light and memory-efficient as possible, so I'm using Copy to Points with Pack and Instance checked. However, I need to cut a hole into this wall. In my example the cutter is a tube, but I'd like to figure out how to do this with any shape. What I want is to do one of two things:

1. Prior to Copy to Points, create a group of the points whose bricks will later need to be unpacked in order to boolean.
2. After Copy to Points with Pack and Instance, create a group of the packed bricks that will need to be unpacked in order to do a boolean.

If you look at the image I've provided, you'll see some points are outside of the tube shape but would still need to be part of the group that gets/remains unpacked. At the same time, some are mostly or completely inside the tube and would also need to be unpacked. I know I can just use a Peak node on my tube to make it wider just for selecting, but I'm wondering if there is a more elegant way of doing this. I was thinking of something like intersection analysis, but that isn't the right thing. Is there a way to determine if a packed/instanced brick is partially inside the tube geo? Thanks, Tim J
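
One way to think about the "partially inside" question is to test each brick's bounding box against the tube instead of testing its point. The plain-Python sketch below uses hypothetical names and is not Houdini API. It assumes axis-aligned brick bounds (however you obtain them) and treats the tube as an infinitely long cylinder along one world axis. It compares the nearest and farthest distances from the tube axis to the box: both within the radius means fully inside, both beyond means fully outside, anything else straddles the surface.

```python
import math

def classify_box_vs_tube(bmin, bmax, center, radius, axis=2):
    """Classify an axis-aligned box against an infinite cylinder.

    bmin, bmax: box corners as (x, y, z); center: any point on the tube axis;
    axis: index of the world axis the tube runs along (2 = Z, an assumption).
    Returns "inside", "outside", or "straddling".
    """
    u, v = [i for i in range(3) if i != axis]  # the two cross-section axes

    # Closest point of the box's cross-section rectangle to the tube axis.
    near_u = min(max(center[u], bmin[u]), bmax[u])
    near_v = min(max(center[v], bmin[v]), bmax[v])
    d_near = math.hypot(near_u - center[u], near_v - center[v])

    # Farthest corner of that rectangle from the tube axis.
    far_u = max(abs(bmin[u] - center[u]), abs(bmax[u] - center[u]))
    far_v = max(abs(bmin[v] - center[v]), abs(bmax[v] - center[v]))
    d_far = math.hypot(far_u, far_v)

    if d_far <= radius:
        return "inside"
    if d_near >= radius:
        return "outside"
    return "straddling"
```

Under this scheme only the "straddling" bricks would need unpacking for the boolean, while "inside" bricks could simply be deleted. An arbitrary cutter shape would need a different test, for example sampling a signed distance field at the box corners, which this sketch does not cover.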

- 2 replies

- Tagged with: instancing, packed (and 1 more)

-

I would like to input Maya geometry into the HDA, which will then do its magic, like placing that one geo 100 times, and then instance it when baked.

- 1 reply

- Tagged with: instance, instancing (and 3 more)

-

Hi, I'm trying to instance lights on custom points that were created from geometry, but the render either doesn't start at all, or rendering and loading are a lot slower than with standard primitive geometry and RAM usage is incredibly high. If anyone can help, I will be very grateful. Custom points https://prnt.sc/sz0fcl Grid primitive https://prnt.sc/sz0grm inctance_problem.rar Problem is solved, pls delete this topic

- Tagged with: instancing, lighting (and 2 more)

-

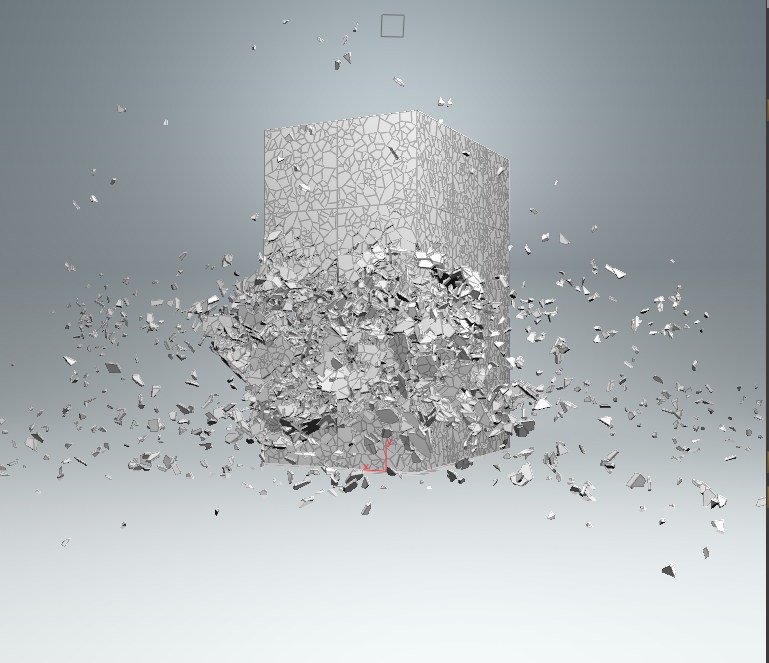

Hello, I recently went through Steven Knipping's tutorial on Rigid Bodies (Volume 2). And now I have a nice scene with a building blowing up. The simulation runs off low-res geometry and then Knipping uses instancing to replace these parts with high-res geometry that has detailing in the break-areas. The low-res geometry has about 500K points as packed disk primitives so it runs pretty fast in the editor. Also, it gets ported to Redshift in a reasonable amount of time (~10 seconds). However, when I switch to the high-res geometry, as you might guess, the 10 seconds turn into around 4 minutes, with a crash rate of about 30% due to memory. When I unpacked it I think it was 40M points, which I can understand are slow to write/read in the Houdini-Redshift bridge, but is there no way to make Redshift use the instancing and packed disk primitives? My theory kind of is that RS unpacks all of that and that's why it takes forever, because when I unpack it beforehand, it works somewhat faster - at least for the low-res. The high-res just crashes. I probably don't understand how Redshift works, and have wrong expectations. It would be nice if someone could give me an explanation. Attached you'll find an archive with the scene with one frame (Frame 18) of the simulation included as well as the folder for the saved instances of low- and high-res geometry. Thanks a lot for your help, Martin (Here's the pic of the low-res geometry, the high-res is basically every piece bricked down into ~80 times more polygons.) Martin_RBDdestr_RSproblem_archive.zip

- 16 replies

- Tagged with: instancing, millions of points (and 2 more)

-

Hi forum! Seeking out some help here, cos I can't get my head around generating Vellum geometry. I am working on a scene where I need to create close-up splashing water droplets on a static surface. Typically this would probably be a FLIP task, but it seems like overkill and I am only after a couple of droplets, so the idea is to make rain and splashes with particles, copy some spheres onto them and feed them to Vellum to get some nice soft bodies, then VDB them. My problem is I don't understand how I can "emit" on my source points that come into existence arbitrarily (i.e. not on frame 1) and differentiate the newly created Vellum patches. I've looked around and watched the masterclasses, and I understand it has to somehow run parallel to constraint creation, maintain point count/id etc., but I can't figure out the solution. It's gonna be a single splash, so continuous emission (as in a particle emitter per se) doesn't work for me. Any pointers are appreciated, check the hip for a rough sketch of the problem! Thanks in advance! od_vellum_splash.hip

-

Hello, I am currently working on an HDA which allows me to place different objects on points using the "unreal_instance" attribute. Meshes and particle systems are placed at the right place. The problem starts with BP actors: they are placed without the world offset, at the 0/0/0 world position instead. Any ideas on how to fix this issue? Kind regards, Aiden Edit: it looks like it has an offset on the local X axis of around 1000 units.

- Tagged with: houdini engine, unreal attribute (and 3 more)

-

Hello everyone! I am trying to do a simple thing but it seems like I am missing something. I want something similar to this: But instead of controlling it by pscale, I would like to take the bounds of my instanced objects and push them away from each other if the bounds of an instanced object are bigger than the distance between the points, or maybe delete them instead. I am trying to do this just to scatter some crowds in a field. If there is an easier way to do that, it would be nice to know as well, as I am quite new to crowds. I am attaching a simple example file of what I am trying to do. Crowd_RND_01.hip
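
The "delete them" variant of the idea above can be sketched as a greedy pass in plain Python. The function name is hypothetical and this is not Houdini API. The sketch assumes each instance is summarized by a bounding radius (for example half the diagonal of its bounds) and keeps a point only if it does not overlap any point already kept.

```python
import math

def prune_overlapping(points, radii):
    """Return indices of points to keep so that no two kept bounds overlap.

    points: list of (x, y, z) positions; radii: bounding radius per point.
    Greedy and order-dependent: earlier points win. O(n^2), so this is only
    an illustration for small point counts.
    """
    kept = []
    for i, (p, r) in enumerate(zip(points, radii)):
        # Two spheres overlap when their centers are closer than r_i + r_j.
        if all(math.dist(p, points[j]) >= r + radii[j] for j in kept):
            kept.append(i)
    return kept

# Example: the second agent is too close to the first and gets dropped.
agents = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
print(prune_overlapping(agents, [1.0, 1.0, 1.0]))  # prints [0, 2]
```

Pushing points apart instead of deleting them would need an iterative relaxation step, which is a different technique from the greedy pruning shown here.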

- 6 replies

- Tagged with: instancing, copy to points (and 2 more)

-

Hello! I'm starting a twitch dot television channel and will be streaming Houdini training content. I've worked at several large VFX and advertising studios as well as taught Houdini classes at Academy of Art university. I'm hoping I can reach a larger audience through Twitch as well as the idea that people viewing the stream can participate by asking questions and providing feedback in real time. This is my channel: https://www.twitch.tv/johnkunz My first stream will be starting on Sunday (Jan 26th) @ 1pm PST. If you follow my channel (it's free ), you'll get an email notification whenever I start a stream. I'll be going over this project I recently finished https://www.behance.net/gallery/90705071/Geometric-Landscapes showing how I built things (VOPs, packed prims, Redshift render) and why I set things up the way I did. Some of the images I made are shown below. Please come by this Sunday with any questions or ideas you might have!

-

Hi all! There's 1 week left to register for my Mastering Destruction 8-week class on CGMA! In supplemental videos and live sessions we'll be focusing on some of the awesome new features in Houdini 18, and exploring anything else you might be interested in, such as vehicle destruction. For those that haven't seen me post before, I'm an FX Lead at DNEG, and previously worked at ILM / Blue Sky / Tippett. Destruction is my specialty, with some highlights including Pacific Rim 2, Transformers, Jurassic World, and Marvel projects. I've also done presentations for SideFX at FMX/SIGGRAPH/etc, which are available on their Vimeo page. If you have any questions, feel free to ask or drop me a message! https://www.cgmasteracademy.com/courses/25-mastering-destruction-in-houdini https://vimeo.com/keithkamholz/cgma-destruction

- Tagged with: destruction, rbd (and 18 more)

-

Hello, I have a question that I haven't found a way to solve. I want to instance soft bodies onto a particle system that is constantly generating particles (so the number of particles keeps changing). That could be with FEM, grains or Vellum, you name it. Which would be the best approach to do that? So far I have faked the effect with the analytic foam tutorial on Entagma, but it is still missing a lot of the motion I am looking for. Thank you for your help!

- 1 reply

- Tagged with: houdini, soft bodies (and 3 more)

-

I'm trying to instance simple sharp rectangular geo onto scattered points to create a frost effect. I've tried 3 methods:

- The Copy to Points SOP (w/ pack and instance)
- Adding the s@instance attrib with a path to the geo
- Using the Instance node at OBJ level

When rendering with around 1000 points I get a decent preview quickly in the IPR, but a full render at 256 samples still takes far too long. If I try to up the point count to something like 50k, I struggle to get any feedback from the IPR. If I go to 100k I get no feedback in the IPR; it just freezes. When I try to render to disk, the render doesn't even begin. What am I doing wrong? From what I've seen I should be able to achieve 100k instances fairly easily, if I'm not mistaken. I appreciate any help! Specs: i7-6700k, GTX 1080, 16GB RAM

- 1 reply

- Tagged with: issue, instancing (and 1 more)

-

Hi guys, This is a noob question but I just can't figure it out. I have a pyro cache in the scene. All I want to do is instance that OBJ node, transform it a bit, and scale it up. I've tried using the Instance object but it seems I can't rotate or move it. Can anyone help? Thanks! shawn

- Tagged with: pyro, instancing (and 1 more)

-

Hello everyone, The primary aim of this training is to provide a project that takes the viewer through as much of Houdini as possible, letting them use the various tools that Houdini provides for modeling and VFX to generate a detailed environment. Currently the training is available for Redshift and Octane. Next week, I'll be recording the Mantra version. There is a 25% introductory discount on the entire training until 18th May 2019. Click on the link given below for more information: http://www.rohandalvi.net/rocketbus

- Tagged with: rendering, instancing (and 6 more)

-

Hi, I was just trying to replace my pieces with high-quality instanced geometry, but it gives different geometry in the rigid body simulation. Could you please guide me on what I missed? MANY THANKS PREM wall rbd sim v7.hipnc

- Tagged with: dynamics, instancing (and 1 more)

-

If you're interested, be sure to check out the course right here: http://bit.ly/2tEhWFN L-systems offer a great way of procedurally modeling geometry, and with these skills, you'll be able to create a wide variety of complex objects. If you've ever wanted to create foliage, complex fractal geometry, or spline-based models from scratch, then L-systems offers you a procedural way of doing so. Unlike many other tutorials, this course aims to address the topic in an easy-to-follow, technical, and artistic way. In addition to L-systems, you'll learn more about instancing and how to work efficiently with Houdini. We'll be rendering out millions of polygons with both Mantra and Redshift while aiming to optimize render times as much as possible. These optimizations are applicable across a wide variety of 3D topics. Large scale environment creation, destruction, crowds, and real-time render engines are all examples of other 3D topics which will benefit from this course. Thank you for watching!

- Tagged with: redshift instance, redshift proxy (and 10 more)

-

Hi, I'm trying to assign a material to some instances, and I'd like the opacity to be driven by the instanceObject's UV set (uv) and the basecolor to be driven by the instanceTemplate's UV set (uvgral). Is this possible? What I'm trying is to call uvgral in the material, but it's not working. Overrides I do at SOP level don't work (naturally), and my attempt to make an override at object level was unsuccessful. Thanks!
