Search the Community

Showing results for tags 'redshift'.

-

Hi guys. I downloaded this FBX file a while back and it's been great. It's a textured Mark VI ship. I brought it into Houdini and Mantra reads it well. The only problem is that I'm using Redshift for the scene with water, and I'm not sure how to get Redshift to read the shaders or how to connect them. I can't find any useful tutorials on this either. A couple of years ago Grand Master Atom was amazingly helpful with a script he wrote so Redshift could read downloaded shaders, but I can't get it to work anymore. I think updates over the years broke the script. Does anyone know how to connect the shaders so Redshift reads them? Pierre Mark_VI_Patrol_Boat_Dirty.hiplc

-

Hello people! I'm currently trying to render a pyro explosion with motion blur in Redshift, and none of my approaches have succeeded. I tried Convert to VDB, and then Merge Volume in Velocity mode with motion blur ON, but nothing works yet. I found a few HIP examples, but they don't work either. Has anybody ever done this? Unfortunately there's nothing about it in the official Redshift documentation either. Please help

-

I'm trying to get HQueue running with Houdini 20 and Redshift, and I keep getting the error below when I submit my test scene. Any ideas on how to fix it, or what the problem could be?

File "<stdin>", line 21, in call
File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 204, in call
  response = self._dispatch_call()
File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 159, in _dispatch_call
  response = self._inspect_call(func)
File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 95, in _inspect_call
  result = self._perform_call(func, args)
File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 58, in _perform_call
  return func(**args)
File "<string>", line 2, in deleteNetworks
File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\decorators\rest.py", line 33, in check_methods
  return func(*args, **kwargs)
File "<stdin>", line 60, in deleteNetworks
ValueError: invalid literal for int() with base 10: 'new_1'
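The last line of that traceback is the standard Python error raised when `int()` is given a string that is not a number. The sketch below only reproduces that message in isolation; it assumes nothing about HQueue's own code, and `parse_id` is a hypothetical helper used for illustration.

```python
def parse_id(value):
    """Convert an ID string to int, returning None when it is not numeric."""
    try:
        return int(value)
    except ValueError:
        return None


# Reproduce the error text from the traceback.
try:
    int("new_1")
except ValueError as err:
    message = str(err)

print(message)
print(parse_id("new_1"), parse_id("42"))
```

Read this way, the traceback suggests the server received the ID 'new_1' where it expected a numeric one. That is an inference from the error text, not a confirmed diagnosis.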

-

Hi, I have a weird problem with Redshift in Houdini. I searched Google and read the Redshift documentation for the Houdini plug-in but couldn't find a solution. If anyone knows what this problem is and how to solve it, please help me out. I created a simple scene with text and a simple glass texture. I lit it, and everything looked fine in the Redshift RenderView (see attachment "Capture 1"). I even enabled PostFX with Bloom. Color management is set to "ACES" as the display and "Rec.709" as the view. However, once I hit "Render To Disk", the MPlay window shows something quite different, even though it is also set to "ACES" and "Rec.709" (see attachment "Capture 2"). It has a different hue with higher vibrancy, and the bloom affects the scene a lot more. When I brought the rendered EXR sequence into After Effects, it looked very different from what I saw in MPlay, which is supposed to be the same file (see attachment "Capture 3"). On top of that, the added Bloom effect is nowhere to be seen. Any Redshift experts out there who can help me out? Thank you.

- 6 replies

- Tagged with: renderview, render to disk (and 2 more)

-

Hi guys, I need help with fisheye rendering of geometry using Redshift or Mantra in Houdini. Any idea how to do that? Thanks, Rahul

-

Hi Houdini users, I am working on a new system build and would like your thoughts on using a gaming card (4090) versus a workstation card (RTX A5500). The main motivators are wattage and thermals: power consumption itself is not the concern, but a gaming card raising the temperature in the room is. Plus, it's very hard to get a 4090 that isn't so drastically marked up that I might as well get a workstation card for the same price. I am eyeing an AMD 7950X (CPU), but I'm waiting to see the reviews of the new 7000X3D chips coming in the next few weeks. Main uses for the machine: motion graphics and, hopefully, future 3D/Houdini work. The 3D side will be Houdini/Redshift. Any advice or input is welcome. Thank you.

-

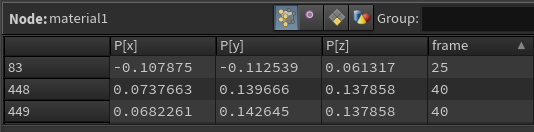

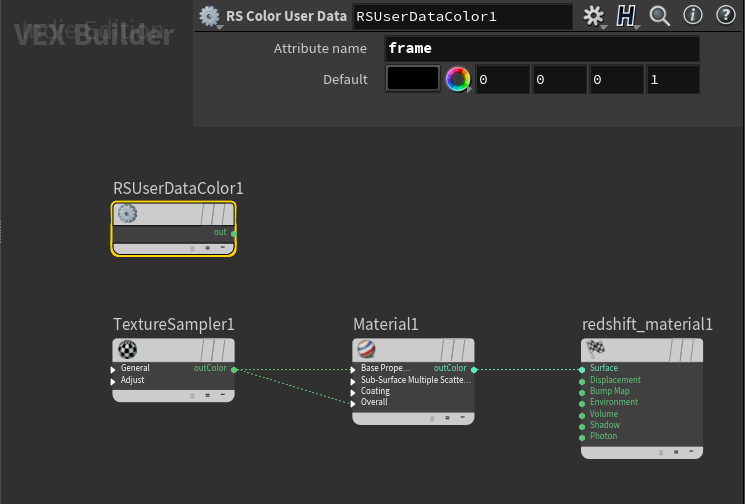

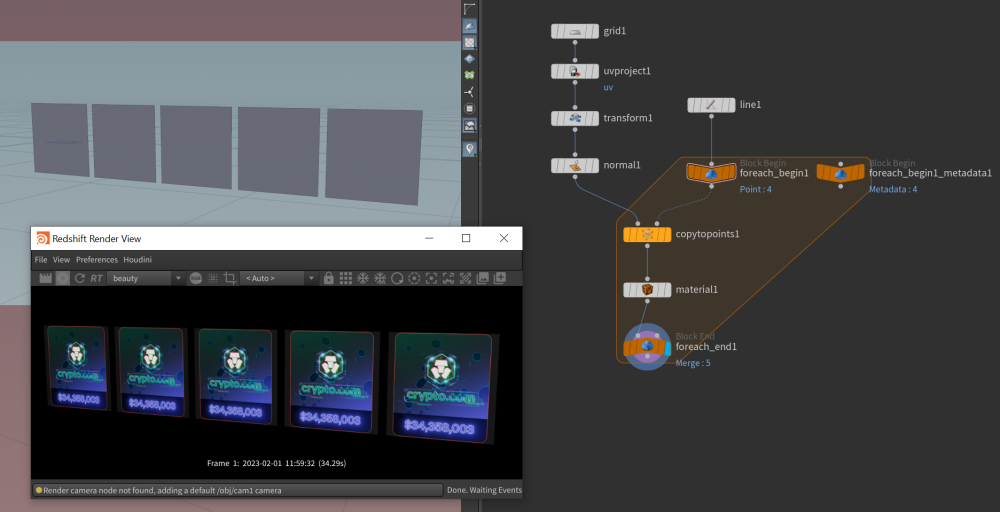

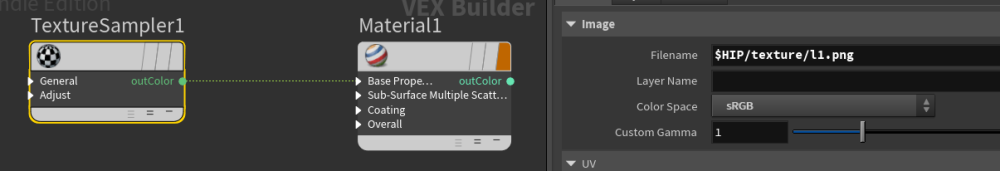

Hello, I have multiple planes in the scene and I want them to share the same material but use different textures. There will be about 20 of them (named l1.png, l2.png, ...), so I would like to assign the texture using a dynamic texture name. I tried using the iteration number in the material network, but it doesn't work: it uses only the last number. I've attached the project file with textures. Thank you! example.zip
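One way to think about this is as a mapping from each plane's index to its own file name, evaluated once per plane rather than once for the whole material. Below is a minimal sketch in plain Python, assuming the files are named l1.png to l20.png as in the post. The `tex` folder and the `texture_path` helper are hypothetical and are not Houdini or Redshift calls.

```python
from pathlib import PurePosixPath


def texture_path(index, folder="tex", prefix="l", ext=".png"):
    """Return the texture path for the plane with the given 1-based index."""
    if index < 1:
        raise ValueError("plane index must be 1 or greater")
    return str(PurePosixPath(folder) / f"{prefix}{index}{ext}")


# One path per plane, for planes 1..20.
paths = [texture_path(i) for i in range(1, 21)]
print(paths[0], paths[-1])
```

In Houdini the resulting string would typically be stored per plane, for example as a string attribute, so each plane carries its own path. How the material then reads that value depends on the renderer setup.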

-

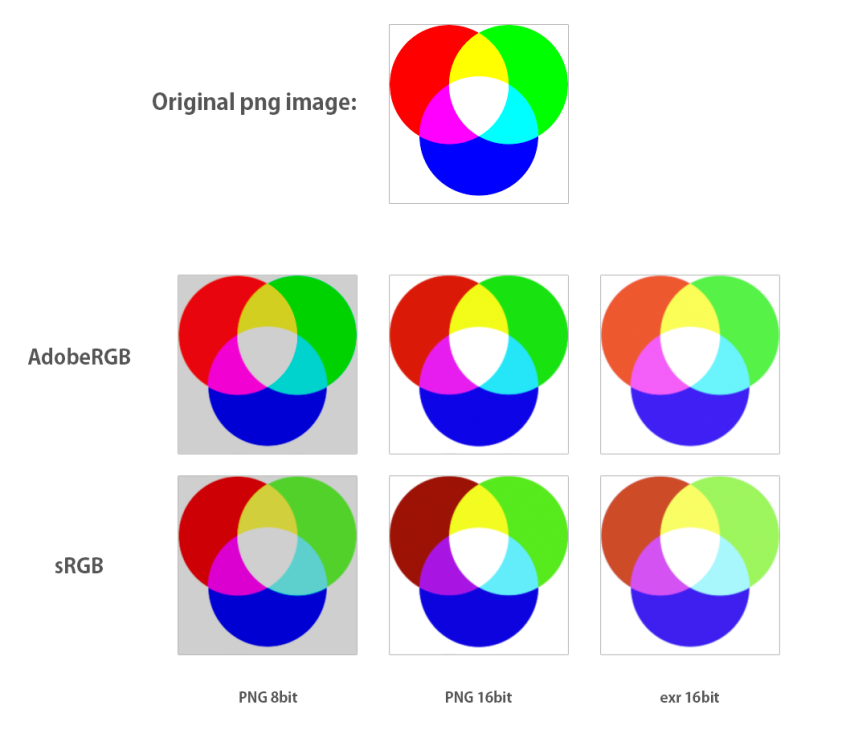

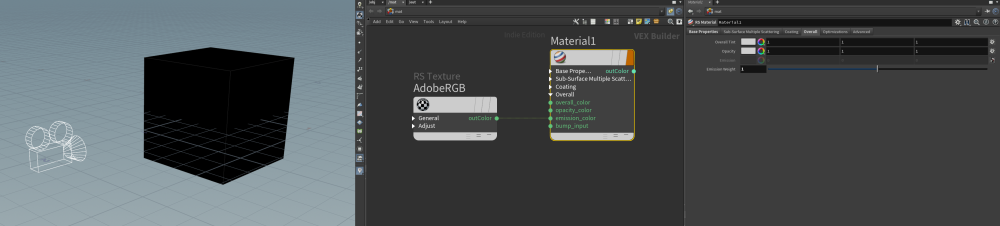

I want the rendered output to match my input texture image. I tried several options: saving in different formats and changing the texture color space. But as you can see in the attached 'results.png' file, the colors don't match. The scene has a camera facing a box. The material has only a texture connected to the emission channel; diffuse and reflection weights are set to 0. The closest result is AdobeRGB 16-bit PNG, but the greens and reds still don't look the same. Why is that, and how can I get an output image that matches the input? rgb_test.hiplc

-

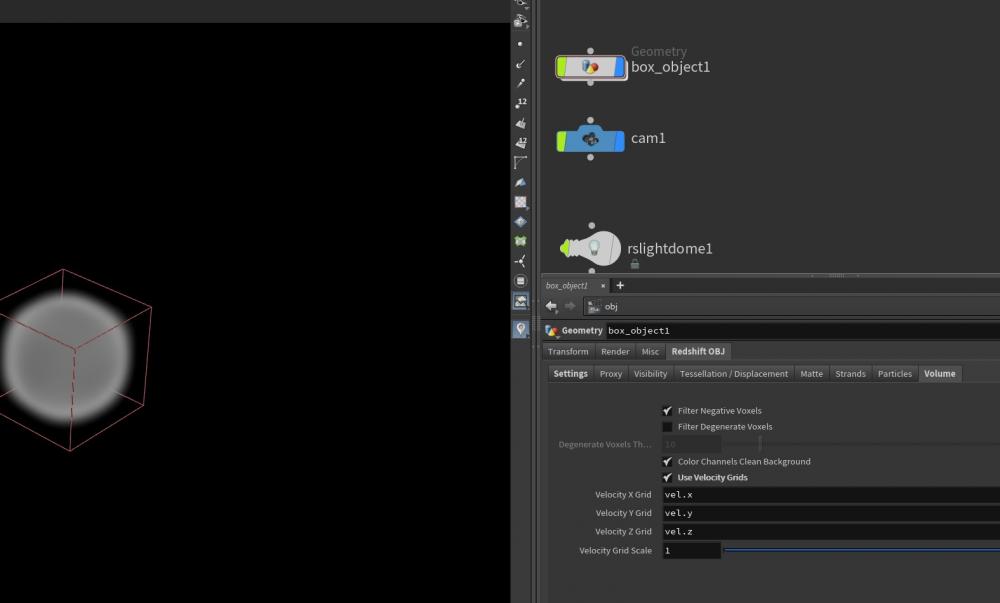

Hi guys, I tried to apply motion blur to a fast-moving volume in my scene, but it doesn't render the motion blur! How can I fix it? Thanks for helping. Redshift_Volume Motion Blur_01.hip

-

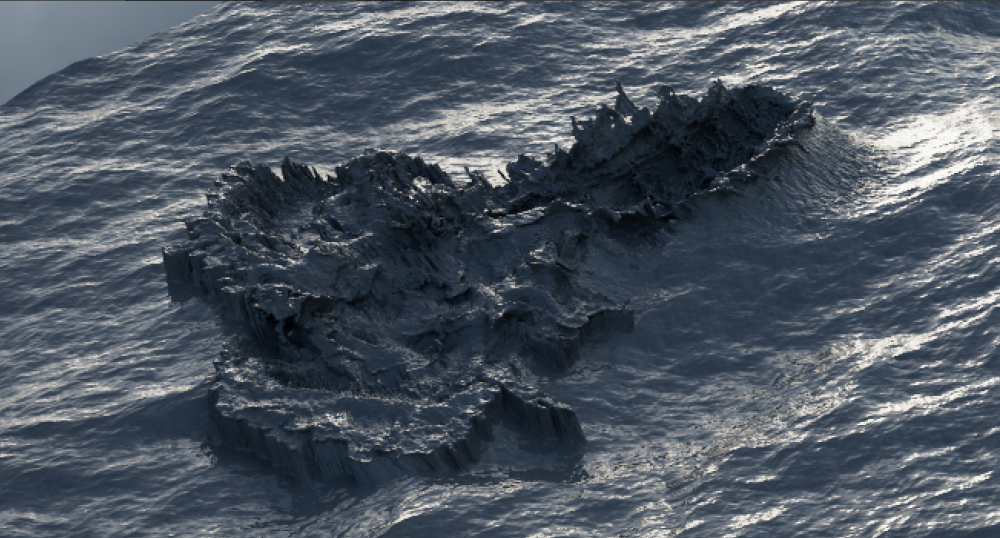

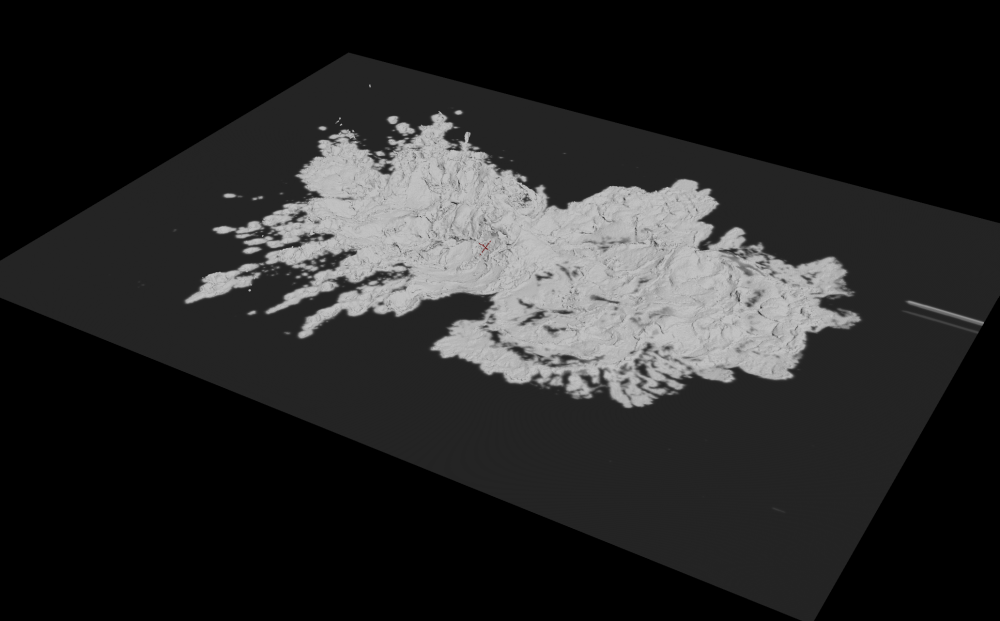

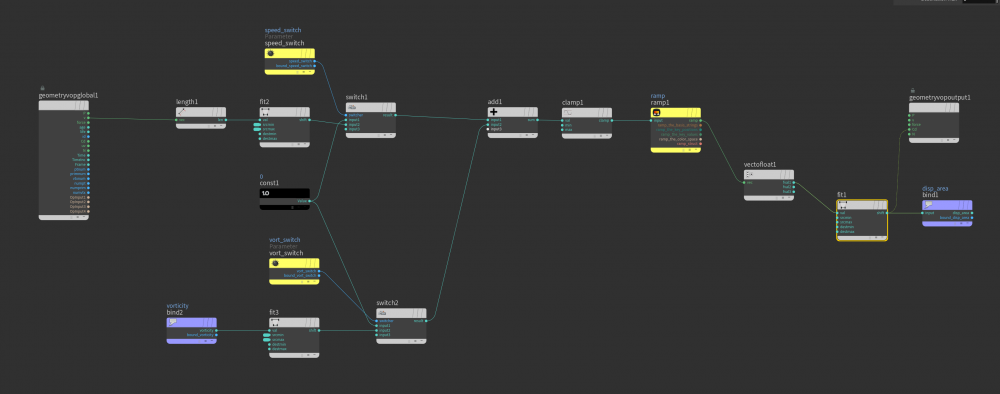

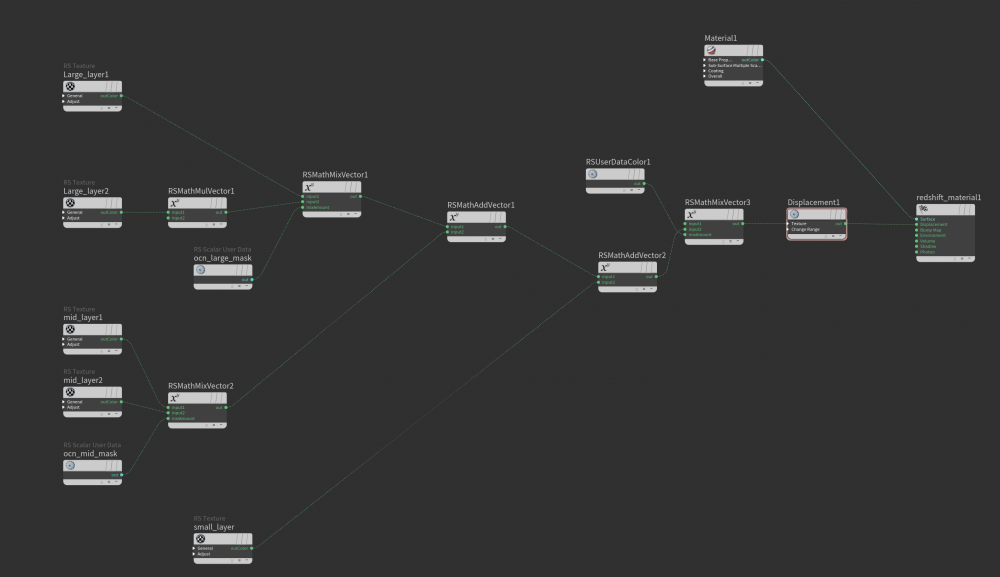

Hi! I used a displacement mask for Redshift in Houdini, but I ran into a problem. I don't want displacement where I mask, and I want it to blend into the ocean smoothly. You can see the problem on the edges of the mesh in the first image. How can I fix this? I've shared the displacement mask and material setups below. I'd be glad for any help. Thanks in advance.

- Tagged with: displacement, mask (and 3 more)

-

[ PAID GIG / PER PROJECT ] ROHTAU STUDIO

We are a fully independent studio of like-minded artists doing high-end work for advertising, installations, and special projects, and we will soon be doing TV and film work as well. Our environment is a friendly one, with top-notch infrastructure and a phenomenal artist-friendly pipeline. We work exclusively in the cloud so we can hire great people. Our team is fantastic, so please DM me or email me directly to chat; we have a few things lining up and will certainly need help.

Job Position: Houdini Generalist / Houdini Lighting TD
Render engine: Redshift
Location: Remote
Description: 30sec commercial, full CGI hyper-realistic look.
Start: ASAP
Duration: Minimum of 6 weeks, probably a lot more.
Contact: jordi@rohtau.com

Don't be shy, we want to hear from you.

- Tagged with: cgi, commercial (and 5 more)

-

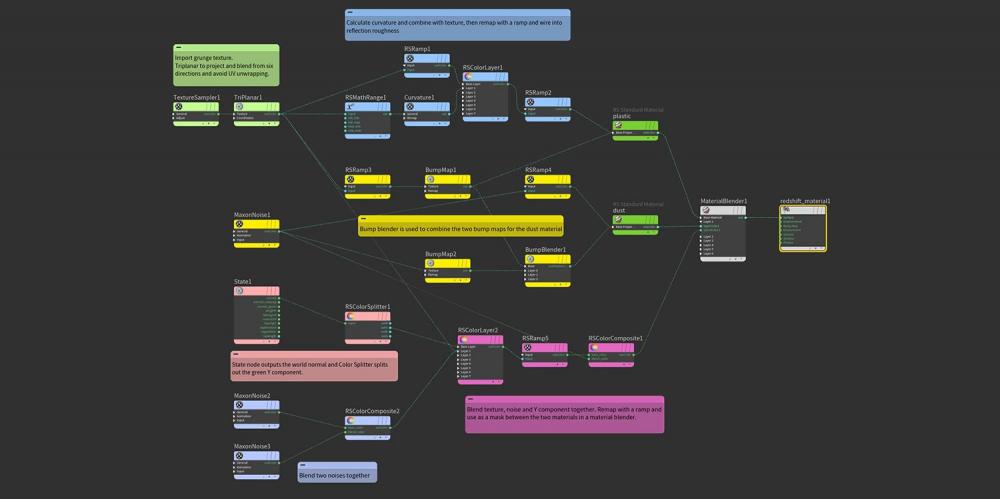

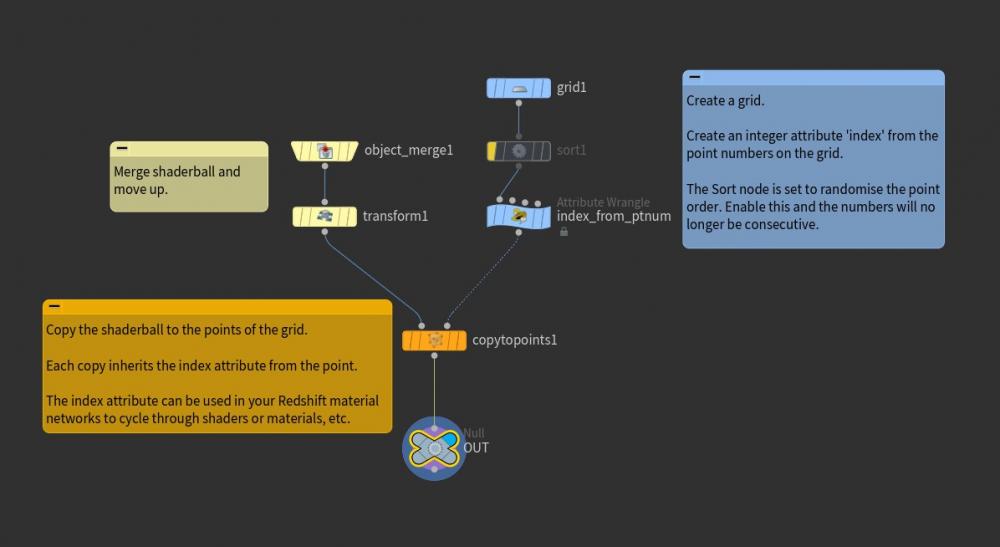

Redshift Essential Materials for Houdini

Redshift Essential Materials is a set of Houdini presets built as an introduction to the most useful Redshift parameters, features and nodes. It is a collection of 70 pre-built setups for Houdini that demonstrate Redshift’s most important and useful nodes. Each material includes remarks in the network to explain the nodes. This library is a great way to learn how particular nodes work in combination with objects in your scene, as many of these examples require the use of the Redshift options available on your geo nodes.

Product Summary

- 70 Redshift materials with notes
- Houdini project files for each setup
- Dispersion, Absorption and SSS effects
- Wear & tear, dust & surface techniques
- UV projection, normals, camera, object & world space
- Using attributes in materials
- Render points, splines, particles & hair
- Create wireframes and sprites
- Render Volumes & OpenVDB files
- Example scenes for Utility AOVs

Recommended requirements

- Houdini 19.5
- Redshift 3.5.06 and above

-

The title is a bit misleading. I realise Pyro volume simulations don't really have a birth and death point, but what I'm hoping to achieve is to colour or alter the transparency of my fire closer to its base or emission point. Currently my fire looks really nice, apart from the base where it first emits. I'd like to make this area more transparent. I'm using the Redshift volume shader, but unfortunately I can't post a .hip file because of a work non-disclosure agreement. I can create a simplified version of the scene if need be.

-

Hey, we're working on a project where I cache a proxy of the geo, run my POP sims, and load the proxy from disk at render time using the "instance file" attribute per point. That works great. However, the whole scene needs to be archived to a Redshift "Scene Proxy" and sent to another artist whose drives are named differently. It renders fine on my end, but when the other artist, who uses Maya, imports the "Scene Proxy", it tries to load the "Geo proxy" from my drive and fails because the path isn't the same. The docs mention that REDSHIFT_PATHOVERRIDE_FILE can be used for this. I was hoping it would work like the object-level override user data checkbox, similar to the "Material Override list", to override that proxy path, but that isn't the case. The docs state:

So, for example, if the environment variable is set to REDSHIFT_PATHOVERRIDE_FILE = C:\mypathoverrides.txt ... the file mypathoverrides.txt could contain the following, which helps to map from a local folder to some shared network location

"C:\MyTextures" "\MYSERVER01\Textures"
"C:\MyVDBs" "\MYSERVER01\VDBs"

How do I implement it in Houdini and in Maya? Thanks
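To make the quoted mapping format concrete, here is a small Python sketch that parses pairs of quoted prefixes and rewrites a path accordingly. It only illustrates the idea of a prefix remap and is not Redshift's actual matching logic. The helper names, the case-insensitive comparison, and the two leading backslashes on the server paths (the usual UNC form) are assumptions.

```python
import re


def parse_overrides(text):
    """Parse lines of the form "from_prefix" "to_prefix" into (from, to) pairs."""
    pairs = []
    for line in text.splitlines():
        quoted = re.findall(r'"([^"]*)"', line)
        if len(quoted) == 2:
            pairs.append((quoted[0], quoted[1]))
    return pairs


def remap(path, pairs):
    """Replace the first matching prefix; return the path unchanged otherwise."""
    for src, dst in pairs:
        # Simplification: plain case-insensitive prefix test, no separator check.
        if path.lower().startswith(src.lower()):
            return dst + path[len(src):]
    return path


# Example file contents (raw string so backslashes are kept literally).
example = r'''
"C:\MyTextures" "\\MYSERVER01\Textures"
"C:\MyVDBs" "\\MYSERVER01\VDBs"
'''

pairs = parse_overrides(example)
print(remap(r"C:\MyTextures\wood.png", pairs))
print(remap(r"D:\Other\file.vdb", pairs))
```

In practice the environment variable would have to be set for whichever application loads the proxy, so the artist on the Maya side would presumably need it in their environment with their own drive mappings.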

-

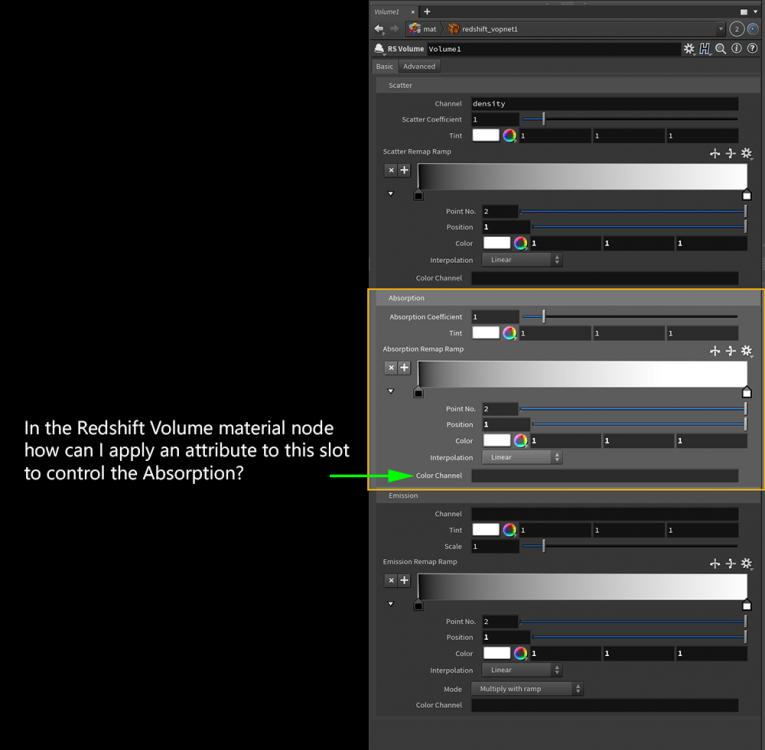

Hi guys, I would like to use the color channel of Redshift's volume material node.

1) I created a vector point attribute called "absorption".
2) I added this attribute's name to the attributes list of the "Volume Rasterize Attributes" node to create a volume called "absorption".
3) In the "Volume Source" node of my pyro DOP, I added "absorption" as the source and target field (as vector type).
4) I added a "VDB Vector Merge" node before my pyro material node.
5) In the RS Volume material, I entered the "absorption" name in the color channel.

But it doesn't work! How can I fix it? Thanks for helping. Absorption_01.hip

- 6 replies

- Tagged with: redshift, color channel (and 1 more)

-

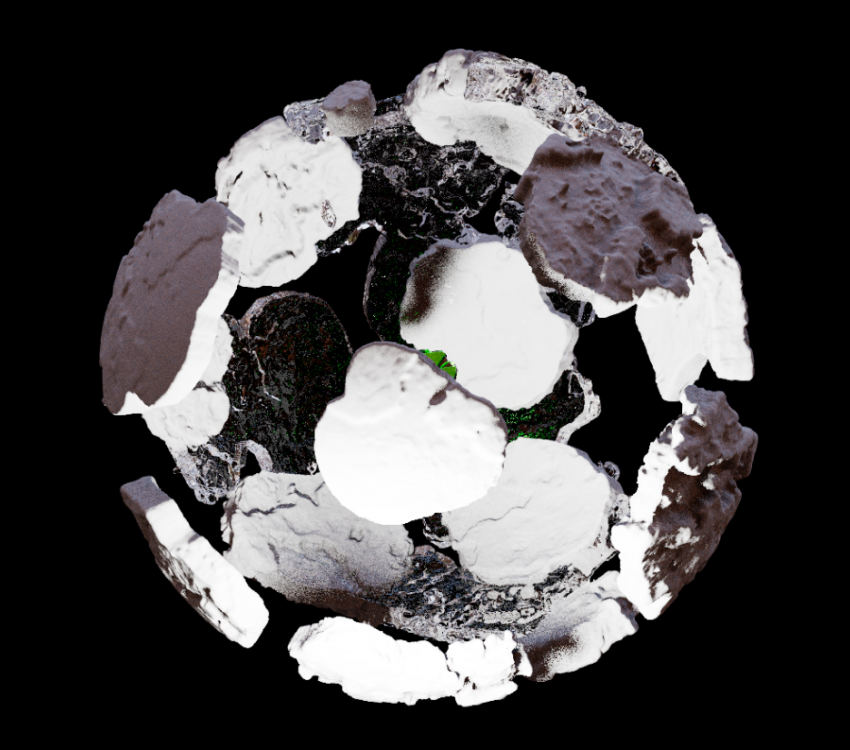

Hi guys, I'd like to share my latest personal r&d project of creating procedural assets. Still work in progress. Cheers, Shawn

- 1 reply

- Tagged with: procedural, assets (and 4 more)

-

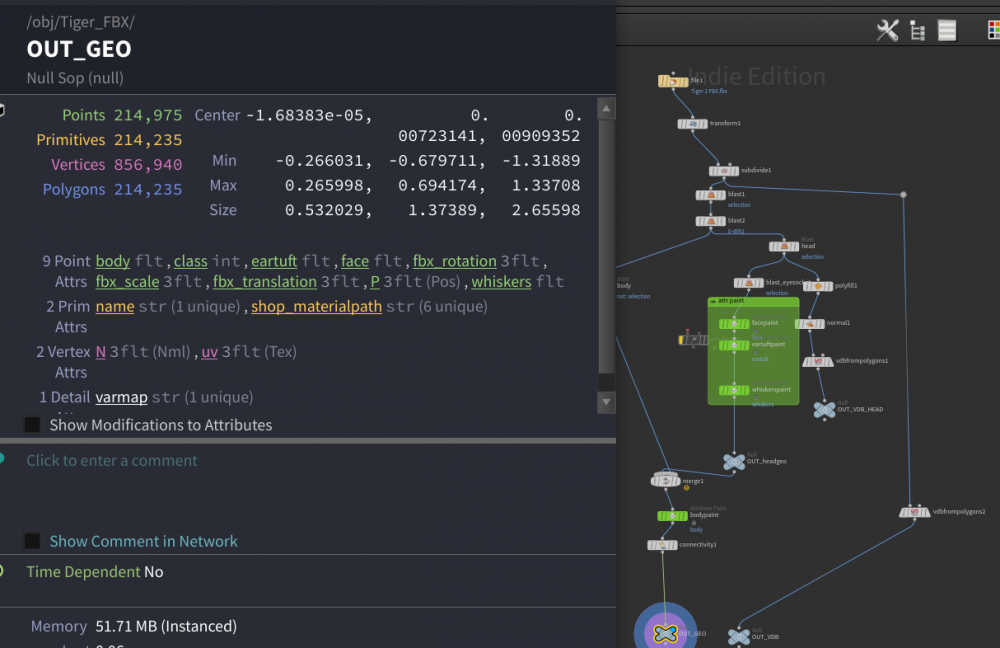

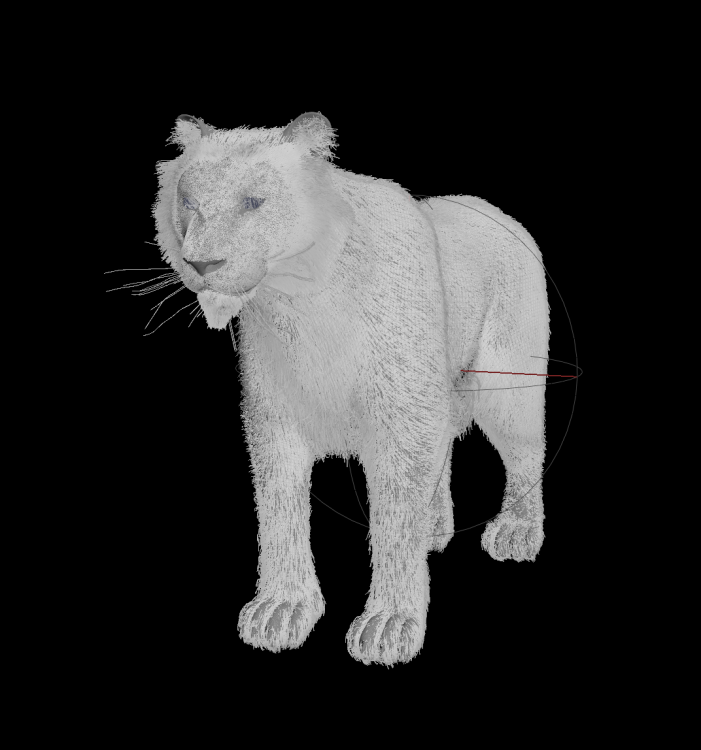

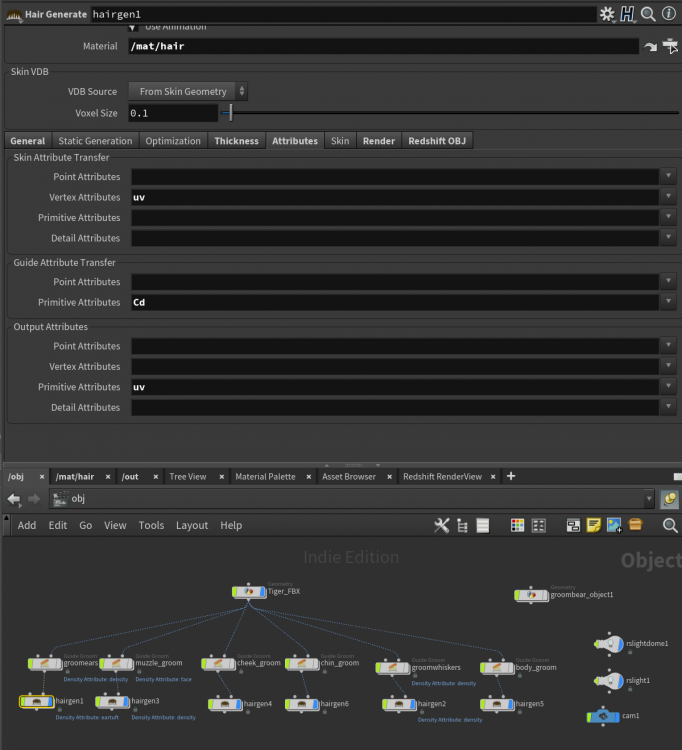

I'm trying to apply this tiger's UV'd texture to fur/hair. I'm going round in circles and I'm sure I'm missing something obvious; grooming is new to me. Any pointers appreciated :) Rendering with Redshift.

-

When rendering in Redshift, in the ROP under the AOV section you can select Cryptomatte as a custom output. There are four ID Type options you can choose from:

1. Node Name
2. Material Name
3. Object ID
4. Custom Name ID

Does anybody know how to make option #4 work? It seems like there's a very powerful component of the Cryptomatte functionality that nobody is using, unless I'm mistaken. There's no better program than Houdini for making carefully placed and named attributes, but what do you do with them here? I tried making a primitive attribute called "Name", but that did nothing. Is this even implemented in Houdini?

- 4 replies

- Tagged with: attributes, cryptomattes (and 1 more)

-

Hey there, after years I've finally decided to study Houdini more seriously. I'm starting again with the Particles course from Applied Houdini and have just finished my first run through the 2nd volume. I'm really interested in GPU rendering because of render times. It's not that I don't like Mantra (having such a robust native renderer actually sounds super interesting), but the last scene I worked on, with around 50M particles, took more than 10 hours to render about 4 seconds at 1280x720. So I would like some advice: since I'm just a beginner, is it worth already trying a GPU renderer like Redshift, so my renders are faster and I can explore and study more things quickly? Or would it be better to stick with Mantra and learn more about it? Thank you!

-

Hey guys, I created a simulation in Houdini and wanted to export it to Cinema 4D with Alembic. The export worked completely fine, but the material (Redshift) doesn't stick to the simulation. As you can see in the pictures, half of the simulation turns white, and this keeps going until it's completely white. I just used the default glass material and didn't change the geometry. Does anyone know how to fix that?

-

Hi everyone, I'm a newbie to Houdini and Redshift and I need your help! My Cryptomatte rendered without the correct object ID, and I want to re-render only the Cryptomatte, without rendering the main images, to save time. Is that possible, or is there any way to skip rendering the main images? I just don't want to waste time rendering the same images again. I appreciate your help! JK

-

Hey everyone! I am facing a problem while rendering and I don’t understand how to fix it. I have 50 layers of primitives with the same texture, but the renders show only 6 layers; the others disappear. All 50 layers are visible only at the borders of my primitives. I will be grateful for your help, thanks in advance!

-

Hi all, I'm very new to Houdini, but I'm loving it. There are certain things that must be quite simple to do, but I can't find the way. One of them is importing a PNG sequence to use in the diffuse channel of a Redshift material. If anybody can help, it'd be amazing. Cheers, Sebastian
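An image sequence is usually addressed by putting a zero-padded frame number into the file name. In Houdini file parameters, the `$F4` variable commonly plays that role. The plain Python sketch below shows the same idea; the `seq` base name, the dot-separated naming, and the `frame_file` helper are hypothetical.

```python
def frame_file(frame, base="seq", padding=4, ext=".png"):
    """Return the file name for one frame, e.g. seq.0001.png for frame 1."""
    if frame < 0:
        raise ValueError("frame must not be negative")
    return f"{base}.{frame:0{padding}d}{ext}"


# File names for the first three frames of the sequence.
names = [frame_file(f) for f in range(1, 4)]
print(names)
```

Whether the files on disk actually follow this padding has to be checked against the real sequence; the padding width here is only an example.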
