
Showing results for tags 'bake texture'.

-

I’m trying to bake a texture using both the “bake texture” and “games baker” nodes, with a simple geometry as the low-res / UV object and a hairgen node as the high-res object. All my results fail to capture any color from the hair (base color), although the normals seem to be right. How do I properly bake the hair shader to the texture? PS: I already tried disabling lighting.

-

Hi guys. I'm working on a game asset. In short: I would like to find a way to detect stacked UVs across multiple identical objects, and then group those UVs, except for the UVs of the first instance.

Example: Imagine 20 knobs in an airplane cockpit. They all have the same UVs, and when I use UV Layout with Stack Identical Islands, all 20 knobs share exactly the same UV island. However, when I then bake the textures for this in Substance, Substance bakes 20 times into that one UV space. For that reason, I am trying to find a way to detect stacked UVs and then group 19 of them. I will then set the UVs of that group to zero so that Substance doesn't bake into that space again.

Possible solution (?): While writing this, I found out that the UVLayout node can output a @target prim attribute. It seems to be either 0 or -1, and initially it seemed to do exactly what I needed: it applied 0 to non-stacked UVs and -1 to stacked UVs except one. However, this does not always work. Sometimes target misses stacked things, and sometimes it is also applied to non-stacked things. Plus, it doesn't apply target=0 only to islands from the first object; rather, it picks UV islands from random instances and puts 0 on those.

I attached a simple example of what I need and what I am getting right now. Hopefully, that will make things clearer.

2 questions: Does anyone know what "target" actually is and how exactly it works? If not, can anyone think of a better solution? I've been trying to solve this for years, and so far I've had to group the objects manually. And there are hundreds of objects...

Anyway, thanks!

Stacking UVs.hipnc
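The detection step described in this post can be sketched outside Houdini. The following is a minimal sketch in plain Python, not Houdini's API and not a description of what the `target` attribute does: it assumes each UV island has already been extracted as a list of `(u, v)` pairs, listed in object order. The function name `find_stacked_islands` and the `precision` parameter are hypothetical.

```python
def find_stacked_islands(islands, precision=4):
    """Split islands into the first of each stack and the later duplicates.

    islands: list of (object_id, island_id, [(u, v), ...]) in object order.
    Returns (keep, stacked) as lists of (object_id, island_id).
    """
    seen = set()
    keep, stacked = [], []
    for object_id, island_id, uvs in islands:
        # Round and sort the UVs so that point order and tiny float
        # differences do not prevent two stacked islands from matching.
        key = tuple(sorted((round(u, precision), round(v, precision))
                           for u, v in uvs))
        if key in seen:
            # An identical island was already seen: this one is a duplicate.
            stacked.append((object_id, island_id))
        else:
            # First island with this footprint: keep it for baking.
            seen.add(key)
            keep.append((object_id, island_id))
    return keep, stacked
```

Because the islands are visited in object order, the kept island always comes from the first instance. Rounding is a simplification: two values that straddle a rounding boundary would not match, so a real setup might need a tolerance-based comparison instead.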

-

Hey guys, I have a question about rendering and baking AOVs with Mantra. Using extra image planes, I managed to render out LPE planes such as beauty, direct diffuse, indirect diffuse, direct glossy reflection, and glossy transmission for a hair groom. They turn out great. The problem is when I try to bake those same image planes from the hair groom onto cards: the LPE image planes come out black. If I try to render any of the other image planes, like root-to-tip color, hairID, transparency, diffuse unlit color, or any other custom pass, those come out great, but none of the LPE passes render.

I suspect this has to do with the absence of a single camera point, so specular reflections, transmission, and other camera-dependent BSDF functions don't produce anything. Is there a way around this? Is there a way I can feed a fake camera/eye point in space to the hair shader and then bake that lighting onto a card, in effect creating a static fake incidence angle that references a camera I am not actually rendering through?

I am also having problems setting up ambient occlusion to be baked from the hair onto cards; a tip on that would be most welcome. I am using the Bake Texture ROP. Any feedback would be most appreciated. Cheers

-

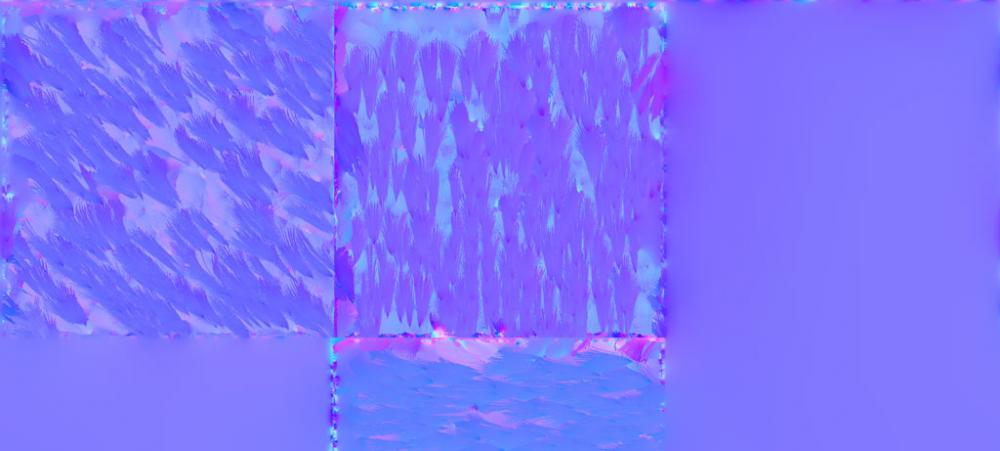

Hi all, I'm attempting to generate a tangent-space normal map of a large terrain using the Bake Texture ROP. It keeps failing due to the geometry size, so I'm attempting to render tiles. I have two object nodes: one merges low-res tiles from the main terrain and the other merges hi-res tiles. I'm using a Wedge ROP to drive X and Y parms on an object, which the tile objects reference to change the tile they display.

This all works fine, except the Bake Texture ROP doesn't seem to refresh the bounds of the tile objects between Wedge iterations. It renders the tile in the space of the entire terrain. What's even weirder is that I added Bound and Match Size SOPs to the tile to scale it to the size of the entire terrain, and it still only renders tiles in terrain space.

Is there some magic I need to do to get Wedge renders to update SOP graphs correctly? Does the last node in the SOP graph need to reference parms driven by the Wedge node? I've attached an image of the output; as you can see, it's correctly rendering the tile, just in the space of the entire terrain. Thanks! G
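For reference, the per-tile remapping this setup expects can be written down explicitly. This is a minimal sketch in plain Python, not Houdini's API and not a fix for the refresh problem: it assumes a regular grid of tiles on the ground plane, and the names `tile_bounds` and `to_tile_uv` are hypothetical. It only illustrates the difference between a point expressed in terrain space and the same point normalized to the bounds of the current tile.

```python
def tile_bounds(terrain_min, terrain_max, tiles_x, tiles_y, ix, iy):
    """Return ((xmin, zmin), (xmax, zmax)) for tile (ix, iy) of a regular grid."""
    (x0, z0), (x1, z1) = terrain_min, terrain_max
    width = (x1 - x0) / tiles_x   # size of one tile along X
    depth = (z1 - z0) / tiles_y   # size of one tile along Z
    return ((x0 + ix * width, z0 + iy * depth),
            (x0 + (ix + 1) * width, z0 + (iy + 1) * depth))


def to_tile_uv(point, bounds):
    """Normalize an (x, z) point to 0-1 within the given tile bounds."""
    (xmin, zmin), (xmax, zmax) = bounds
    return ((point[0] - xmin) / (xmax - xmin),
            (point[1] - zmin) / (zmax - zmin))
```

If the bake behaves as described in the post, each tile appears to be normalized against the full terrain bounds rather than against the bounds returned by `tile_bounds` for the current Wedge X and Y values.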