Showing results for tags 'mocap'.

-

Is it possible to stabilize mocap data with KineFX? I have a dirty mocap clip that floats off the ground plane. I tried the Stabilize Joint SOP with no success. Are there any methods to fix this issue? I have watched some tutorials, but none really covered this aspect of cleanup. Any help would be much appreciated. Thank you.
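One common cleanup step for a floating clip is to shift the whole skeleton on each frame so that its lowest contact joint sits on the ground. The sketch below shows that idea in plain Python, independent of any KineFX node. It assumes joint positions are available as one dict of (x, y, z) tuples per frame with Y up; the joint names used in the example (`hips`, `left_foot`, `right_foot`) are hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
Pose = Dict[str, Vec3]  # joint name -> world position for one frame


def ground_clip(frames: List[Pose], contact_joints: List[str],
                ground_y: float = 0.0) -> List[Pose]:
    """Shift every joint vertically so the lowest contact joint touches ground_y.

    Assumes Y is up. The whole pose moves rigidly, so limb lengths are preserved.
    """
    fixed = []
    for pose in frames:
        lowest = min(pose[name][1] for name in contact_joints)
        dy = ground_y - lowest  # negative when the character floats above the ground
        fixed.append({name: (x, y + dy, z) for name, (x, y, z) in pose.items()})
    return fixed


frames = [{"hips": (0.0, 1.2, 0.0),
           "left_foot": (0.1, 0.15, 0.0),
           "right_foot": (-0.1, 0.2, 0.0)}]
grounded = ground_clip(frames, ["left_foot", "right_foot"])
```

Applied on every frame, this pins at least one foot to the ground even during jumps, so a real cleanup would only correct frames that are flagged as foot contacts. In Houdini, the per-frame offset would typically be applied to the root joint, but that wiring is not shown here.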

-

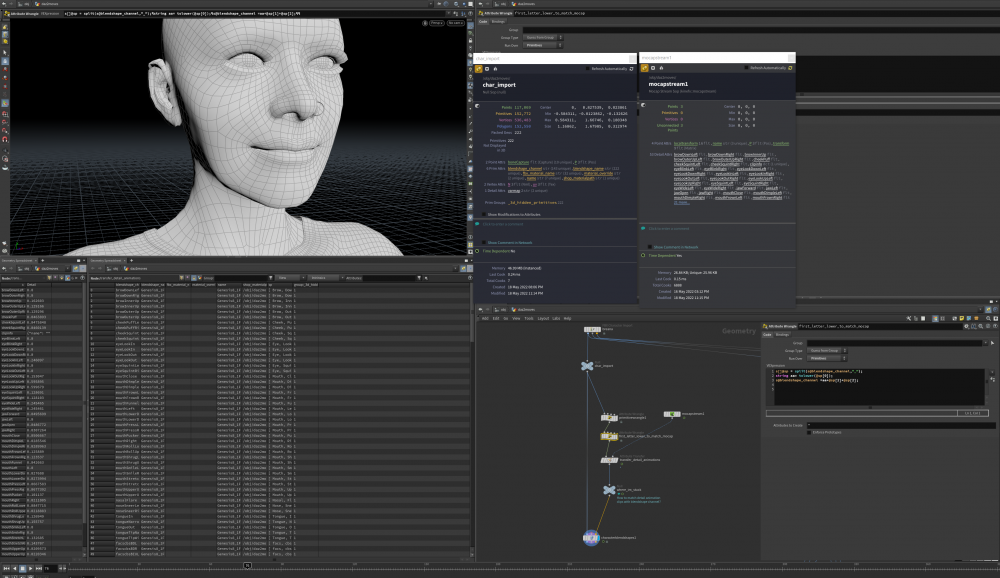

Hey all, I'm trying to match the animated detail attributes from the mocap stream to the blend shapes on the mesh via the Character Blend Shapes SOP (characterblendshapes). I've managed to write some VEX that matches some of the channel names. Is there some sort of wrangle that would map each detail attribute to its blend shape? Or am I missing a step? Thanks in advance; this is my first time working with blend shapes in this way.
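When capture channel names and blend shape names differ only by case, separators or a fixed prefix, they can be paired by comparing normalized names. The sketch below assumes that kind of mismatch; the example names and the `face_` prefix are hypothetical. It returns a mapping plus the attributes that still need a manual entry.

```python
import re
from typing import Dict, Iterable, List, Tuple


def normalize(name: str, strip_prefixes: Iterable[str] = ()) -> str:
    """Lowercase, drop the first matching prefix, and remove '_', '.', '-' and spaces."""
    key = name.lower()
    for prefix in strip_prefixes:
        if key.startswith(prefix.lower()):
            key = key[len(prefix):]
            break
    return re.sub(r"[_.\s\-]", "", key)


def match_channels(attribs: List[str], shapes: List[str],
                   strip_prefixes: Iterable[str] = ()) -> Tuple[Dict[str, str], List[str]]:
    """Map each detail attribute name to a blend shape name; return (mapping, unmatched)."""
    prefixes = list(strip_prefixes)  # allow any iterable, reused for every name
    by_key = {normalize(s, prefixes): s for s in shapes}
    mapping: Dict[str, str] = {}
    unmatched: List[str] = []
    for attr in attribs:
        shape = by_key.get(normalize(attr, prefixes))
        if shape is None:
            unmatched.append(attr)
        else:
            mapping[attr] = shape
    return mapping, unmatched


mapping, unmatched = match_channels(
    ["jawOpen", "mouth_smile_L", "browUp"],
    ["face_JawOpen", "face_MouthSmile_L"],
    strip_prefixes=["face_"],
)
```

The resulting dictionary could then drive a rename or a weight copy inside Houdini; how it is wired into the Character Blend Shapes SOP is not shown.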

-

Hi there, I have some mocap data coming into Houdini from .csv files as animated points. I would like to parent bones to the animated points so that I can export them as an FBX. How can I parent bones to this data? (mp4, .hip and csv data attached.) Thanks. bones_animate_02.mp4 bones_animate_02.hipnc mx_allframes_eval.csv my_allframes_eval.csv mz_allframes_eval.csv
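Before any bones can be built, the three per-axis files have to be combined into one position per point per frame, and each point needs a chosen parent. The sketch below assumes each CSV has no header, one row per frame and one column per point (the actual layout of the attached files is not known). The `parents` map in the example is hypothetical and would have to be defined by hand for the real marker set.

```python
import csv
from typing import Dict, Iterable, List, Tuple

Vec3 = Tuple[float, float, float]


def read_axis(lines: Iterable[str]) -> List[List[float]]:
    """Read one per-axis CSV: one row per frame, one column per point (assumed layout)."""
    return [[float(v) for v in row] for row in csv.reader(lines) if row]


def combine_axes(x_lines: Iterable[str], y_lines: Iterable[str],
                 z_lines: Iterable[str]) -> List[List[Vec3]]:
    """Zip the x, y and z tables into positions[frame][point]."""
    xs, ys, zs = read_axis(x_lines), read_axis(y_lines), read_axis(z_lines)
    if not (len(xs) == len(ys) == len(zs)):
        raise ValueError("axis files have different frame counts")
    return [list(zip(rx, ry, rz)) for rx, ry, rz in zip(xs, ys, zs)]


def bone_vectors(frame: List[Vec3], parents: Dict[int, int]) -> Dict[int, Vec3]:
    """For each child point index, return the vector from its parent point to it."""
    vectors = {}
    for child, parent in parents.items():
        c, p = frame[child], frame[parent]
        vectors[child] = (c[0] - p[0], c[1] - p[1], c[2] - p[2])
    return vectors


# Two frames, two points; point 1 is parented to point 0 (hypothetical hierarchy).
positions = combine_axes(["0,0\n", "0,1\n"], ["0,2\n", "0,2\n"], ["0,0\n", "0,0\n"])
bones = bone_vectors(positions[1], {1: 0})
```

With the attached files, open handles to mx/my/mz_allframes_eval.csv (for example inside a `with` block) can be passed in directly, provided the layout matches. Once every bone is a parent-to-child vector per frame, the positions can drive a joint hierarchy in Houdini before export; those Houdini steps are not shown.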

-

Hi all, I'm trying to recreate an effect similar to this: but in my case I'd like a simple character with mocap (an Alembic sequence converted to polygons) to be affected by FEM. In other words, the character has to move with the mocap data and also deform with FEM. When I add FEM to the animated geometry, it applies only to the first frame. Is it possible to combine the two? Do I need to use Vellum instead of FEM (although even with Vellum it only applies to the first frame)? I've attached the scene I'm starting from and the Alembic sequence. Thank you guys, Argo Hip_Hop_Dancing_LOW.abc start.hip
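A simulation typically takes only its starting state from the input geometry, so the later mocap frames have to be fed in as an animated target that the simulated points are pulled toward. The sketch below is a generic illustration of that "soft target" idea, not the FEM or Vellum solver: on each step, every simulated point moves a fraction `stiffness` of the way toward its position on the animated mesh.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def follow_target(sim: List[Vec3], target: List[Vec3], stiffness: float) -> List[Vec3]:
    """Move each simulated point a fraction `stiffness` of the way to its animated target.

    stiffness = 0 ignores the animation (pure simulation);
    stiffness = 1 snaps to the animation (no secondary motion).
    """
    if not 0.0 <= stiffness <= 1.0:
        raise ValueError("stiffness must be in [0, 1]")
    return [
        tuple(s + stiffness * (t - s) for s, t in zip(sp, tp))
        for sp, tp in zip(sim, target)
    ]


# One point halfway between its simulated and animated positions.
blended = follow_target([(0.0, 0.0, 0.0)], [(1.0, 2.0, 0.0)], 0.5)
```

Intermediate stiffness values leave room for the solver's own deformation while still tracking the dance; in Houdini this role is played by the solvers' target or pin-to-animation style options.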

-

For folks looking for a way to do motion retargeting inside Houdini, here's a teaser for a tool that has been working for 2 years now! In a couple of weeks I will share more info about how it works. I don't plan to release it, but I will help anyone who wants to build something similar. The tool doesn't use a fancy algorithm, but it works very well. https://vimeo.com/299246872

-

Hey all, apologies in advance for the noobiness, but this must be the right place to ask. I'm trying to instance more than one spotlight onto a deforming geometry. What I've done so far is scatter points over a mocap mesh and rivet a spotlight to a single point. My question is: is it possible to procedurally instance spotlights on every single point, ideally following the normals of the deforming geo? It might sound like a tricky question, so my .hip file is attached: {{ dnw5.hip }} Much love
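The detail that matters when aiming lights along normals is that Houdini lights, like cameras, shine down their local -Z axis. So the per-point frame has to put -Z (not +Z) along N. The sketch below builds such an orthonormal frame in plain Python; the world-up reference `(0, 1, 0)` and the fallback axis are arbitrary assumptions.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        raise ValueError("zero-length vector")
    return (v[0] / length, v[1] / length, v[2] / length)


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def light_frame(normal: Vec3, up: Vec3 = (0.0, 1.0, 0.0)) -> Tuple[Vec3, Vec3, Vec3]:
    """Return (x_axis, y_axis, z_axis) so that the local -Z axis points along `normal`.

    The light is assumed to shine down its local -Z axis, so z_axis = -normal.
    """
    z = normalize((-normal[0], -normal[1], -normal[2]))
    if abs(sum(a * b for a, b in zip(z, normalize(up)))) > 0.999:
        up = (1.0, 0.0, 0.0)  # normal is (nearly) parallel to up; use another reference
    x = normalize(cross(up, z))
    y = cross(z, x)  # unit length, since z and x are orthonormal
    return x, y, z


x_axis, y_axis, z_axis = light_frame((1.0, 2.0, 3.0))
```

In a Houdini setup the same orientation is normally carried by attributes such as N and up (or orient) on the scattered points. Depending on which axis the instancing aligns to N, N may need to be flipped for the lights to face outward.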

-

Still wrestling with attribute collisions in layered materials, but finding Vellum lots of fun!

-

Hi, I spent a few hours trying to apply BVH mocap data (from Truebones) to a rigged mesh inside DAZ Studio. The results have been unacceptable: too much jitter and erratic motion. Are there any tricks or tips for using BVH data with pre-rigged meshes?
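Two frequent causes of erratic BVH motion are Euler angles that wrap between +180 and -180 degrees, which shows up as a sudden spin when a rig interpolates or filters them, and high-frequency noise. The sketch below unwraps one rotation channel (in degrees) and then applies a centered moving average. The window size is a hypothetical default, and filtering raw Euler angles is only a rough fix compared with filtering rotations as quaternions.

```python
from typing import List


def unwrap_degrees(values: List[float]) -> List[float]:
    """Remove +/-360 degree jumps so consecutive samples differ by at most 180 degrees."""
    if not values:
        return []
    out = [values[0]]
    for v in values[1:]:
        prev = out[-1]
        delta = (v - prev + 180.0) % 360.0 - 180.0  # shortest signed difference
        out.append(prev + delta)
    return out


def moving_average(values: List[float], window: int = 5) -> List[float]:
    """Centered moving average; the window shrinks near the ends of the clip."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        chunk = values[lo:hi]
        out.append(sum(chunk) / len(chunk))
    return out


channel = unwrap_degrees([170.0, -170.0, -160.0])
smoothed = moving_average(channel, window=3)
```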

-

Does anyone know of a good source of mocap data (preferably open source, though I am willing to pay!)? In particular, I am looking for dancing and any sort of martial arts movement. I am already familiar with the Carnegie Mellon database: http://mocap.cs.cmu.edu/

-

Hi all, I'm working with some mocap meshes that I'm trailing particles over using the 'minpos' technique in POP VOPs. I'm getting a little stuck, though: when I make trails from the particles, the trails obviously lag behind the mocap because they keep their birth XYZ coordinates. What I'd like is for the trails to adhere to the surface of the body/geo in the same way the particles do, so that I end up with something like 0:24 > 0:26 of the attached video. Maybe I need to do something within a SOP Solver? Or is DOP/POP completely the wrong approach? Any hints or tips are greatly appreciated. I've attached my .hip here, should anyone have the time to take a look. Thanks! (Hints on filling the volume as in the video will also be met with rapturous thanks... I'm not even sure where to start with that one.) surface_particles_trail_test.hip
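A common way to make trail points stick to a deforming surface is to store, for each point, the triangle it lies on and its barycentric weights within that triangle, then rebuild its position on every frame from the deformed triangle instead of keeping a world-space position. (In VEX, `xyzdist()` returning a primitive and parametric UV, later read with `primuv()`, serves a similar purpose.) The sketch below shows the idea in plain Python with made-up triangles. It assumes the stored point lies in the triangle's plane.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[Vec3, Vec3, Vec3]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def barycentric(p: Vec3, tri: Tri) -> Tuple[float, float, float]:
    """Barycentric weights of p (assumed to lie in the triangle's plane) in tri."""
    a, b, c = tri
    v0, v1, v2 = _sub(b, a), _sub(c, a), _sub(p, a)
    d00, d01, d11 = _dot(v0, v0), _dot(v0, v1), _dot(v1, v1)
    d20, d21 = _dot(v2, v0), _dot(v2, v1)
    denom = d00 * d11 - d01 * d01
    if denom == 0.0:
        raise ValueError("degenerate triangle")
    v = (d11 * d20 - d01 * d21) / denom  # weight of vertex b
    w = (d00 * d21 - d01 * d20) / denom  # weight of vertex c
    return (1.0 - v - w, v, w)


def evaluate(weights: Tuple[float, float, float], tri: Tri) -> Vec3:
    """Rebuild a point from stored weights on a (possibly deformed) triangle."""
    u, v, w = weights
    a, b, c = tri
    return tuple(u * a[i] + v * b[i] + w * c[i] for i in range(3))


rest = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
weights = barycentric((0.25, 0.25, 0.0), rest)      # stored once, at birth
deformed = ((0.0, 0.0, 2.0), (2.0, 0.0, 2.0), (0.0, 2.0, 2.0))
stuck = evaluate(weights, deformed)                 # re-evaluated every frame
```

Each trail point would store its triangle and weights when it is created and be re-evaluated every frame, for example inside a SOP Solver; that wiring is not shown.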

-

According to this old thread, Houdini only accepts mocap data in Acclaim, Biovision or Motion Analysis formats. I would like to try using some of the data from CMU (http://mocap.cs.cmu.edu), but I can only see the acronyms tvd, c3d and amc. Can any mocap experts tell me whether I should have any luck with any of these formats?
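Of these, AMC is the motion half of the Acclaim ASF/AMC pair (the ASF file holds the skeleton), so it falls under the Acclaim format mentioned above. AMC is plain text: header lines start with `#` or `:`, each frame begins with a line containing only the frame number, and each following line is a joint name followed by its channel values. The sketch below reads that structure into one dictionary per frame, for example to inspect or convert a CMU clip. It assumes a well-formed file and does not read the ASF skeleton.

```python
from typing import Dict, Iterable, List


def parse_amc(lines: Iterable[str]) -> List[Dict[str, List[float]]]:
    """Parse Acclaim AMC motion data into one {joint: values} dict per frame."""
    frames: List[Dict[str, List[float]]] = []
    for raw in lines:
        line = raw.strip()
        if not line or line.startswith("#") or line.startswith(":"):
            continue  # blank lines, comments and header keywords such as :DEGREES
        if line.isdigit():
            frames.append({})  # a bare integer starts a new frame
            continue
        if not frames:
            raise ValueError("joint data before the first frame number")
        name, *values = line.split()
        frames[-1][name] = [float(v) for v in values]
    return frames


sample = ["# comment\n", ":FULLY-SPECIFIED\n", ":DEGREES\n",
          "1\n", "root 0 1 2 0 0 0\n", "lfemur 10 -5 0\n",
          "2\n", "root 0 1.5 2 0 0 0\n"]
motion = parse_amc(sample)
```

An open file handle (for example `open("clip.amc")`, with a hypothetical file name) can be passed in place of the list, because iterating a text file yields its lines.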

-

Hi there, I'm very new to Houdini. I've got an IsoOffset node with the output type set to 'SDF Volume' and the mode set to 'Ray Intersect'. When test geometry is plugged into it, the nodes downstream of the IsoOffset (trails, connected points, etc.) work fine, but when I plug the mocap data into it they don't work, and the node gives no error either. Is there a setting that tells the IsoOffset node that this is moving geometry? About the mocap data: I've tried this setup both with an Object Merge feeding the IsoOffset node and with the standard nodes that are created when you import an FBX (Filmbox) file. Many thanks

-

I'm having a lot of success cleaning up mocap data in Houdini, except for things like polishing and exaggerating the motion. Animation layers in Houdini seem like an ideal tool for this, but I can't get them to work with data that is already in CHOPs format and flows through an established CHOP network. I have tried making a CHOP network for animation layering at a higher level in /obj and baking the channels to keyframes; I can then make changes using animation layers. However, I don't like this workflow because it's destructive. Has anyone had success using animation layers with mocap without resorting to baking everything down to keyframes?
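Conceptually, a non-destructive edit keeps the mocap channel untouched and stores only what is applied on top of it. An additive layer contributes base(t) + weight × offset(t), and an exaggeration scales the channel's deviation around a pivot such as its mean. The sketch below shows that arithmetic on plain Python lists sampled per frame. It is not Houdini's animation-layer implementation, and the function and parameter names are hypothetical.

```python
from typing import List, Optional


def exaggerate(base: List[float], amount: float, pivot: Optional[float] = None) -> List[float]:
    """Scale a channel's deviation from `pivot` (default: the channel mean)."""
    if not base:
        return []
    if pivot is None:
        pivot = sum(base) / len(base)
    return [pivot + amount * (v - pivot) for v in base]


def apply_layer(base: List[float], offset: List[float], weight: float = 1.0) -> List[float]:
    """Add a weighted offset curve on top of an untouched base channel."""
    if len(base) != len(offset):
        raise ValueError("base and offset must have the same number of samples")
    return [b + weight * o for b, o in zip(base, offset)]


mocap = [0.0, 2.0, 4.0]
result = apply_layer(exaggerate(mocap, 2.0), [0.5, 0.0, -0.5], weight=0.5)
```

Because `mocap` is never modified, the layer weight or exaggeration amount can be changed or removed at any time, which is the property the baking workflow loses.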

-

I am trying to work with mocap data that I recorded in Vicon Blade. I imported an FBX of the solving setup into Houdini and have been able to work with it and get the setup where I want it, but I am running into an issue with file size. The file is currently 189 MB, and I am pretty sure that about 95% of that is keyframe data. Unfortunately, when I start working with the file, it explodes in memory (in the 25 GB range) and is becoming unwieldy. The motion capture data has a keyframe on every frame for all of the bones, which is definitely overkill. Is there any way to automatically reduce keyframes in Houdini? I have seen such a tool in some other packages, but I am trying to figure out how to do it here. If there isn't a built-in way, is there any way to delete keys in CHOPs? I could see writing a VEX expression for it, something along the lines of calculating the slopes and deleting a key if the slope from one keyframe to the next is below a certain margin. Or I could just check the concavity at each keyframe and only keep the ones where it changes, but first I need to know whether there is a way to delete the keyframes.
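The slope-threshold idea above can be made more robust by measuring how far each dropped key would sit from the simplified curve, instead of comparing neighbouring slopes. The sketch below swaps in the Ramer-Douglas-Peucker algorithm (not something the post or Houdini is known to use) on one channel keyed at every frame. It keeps the first and last keys, then repeatedly keeps the key with the largest vertical error from the straight line between kept keys until every error is within `tolerance`. It assumes strictly increasing frames and linear interpolation between kept keys; with smooth tangents the reconstruction differs, so treat the tolerance as approximate.

```python
from typing import List, Tuple

Key = Tuple[float, float]  # (frame, value); frames assumed strictly increasing


def reduce_keys(keys: List[Key], tolerance: float) -> List[Key]:
    """Ramer-Douglas-Peucker on one channel, using vertical (value) error."""
    if len(keys) <= 2:
        return list(keys)
    keep = [False] * len(keys)
    keep[0] = keep[-1] = True
    stack = [(0, len(keys) - 1)]
    while stack:
        start, end = stack.pop()
        (f0, v0), (f1, v1) = keys[start], keys[end]
        worst, worst_err = -1, tolerance
        for i in range(start + 1, end):
            f, v = keys[i]
            t = (f - f0) / (f1 - f0)
            err = abs(v - (v0 + t * (v1 - v0)))  # distance from the linear segment
            if err > worst_err:
                worst, worst_err = i, err
        if worst != -1:  # some key is out of tolerance: keep it and split there
            keep[worst] = True
            stack.append((start, worst))
            stack.append((worst, end))
    return [k for k, flag in zip(keys, keep) if flag]


channel = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 3.0), (4.0, 6.0)]
reduced = reduce_keys(channel, tolerance=0.01)
```

This only decides which keys to keep; writing the reduced keys back into Houdini is the open question in the post and is not shown.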

-

Software Developer: Computer Vision & Mocap - Digital Domain Vancouver

- Job Title: Software Developer: Computer Vision & Mocap
- Department: Technology
- Reports to: Global Production Technology Supervisor
- Status: Exempt
- Classification: Full Time (Staff)

Purpose of the job: The Software Developer: Computer Vision and Mocap will help create leading-edge tools for performance capture and character creation. With multiple motion capture facilities, including advanced facial capture suites, Digital Domain needs software developers with experience in computer vision, motion capture and character animation.

Essential Functions/Responsibilities:

- Design, implement, and support C++ and Python tools, primarily for Maya but potentially also standalone, that relate to character animation and motion capture.
- The developer will be expected to gather requirements from the artists, research and develop a plan of attack, implement a standalone program or plug-in (using C++ or Python), document the code and produce a user manual, and release and support the tool.
- The ability to interact with non-technical people, both understanding what they want and getting them to understand what you are doing, is necessary.
- Experience with any or all of character animation, motion capture, real-time processing and/or GPU programming is not required but would be a plus.

Qualifications: Education and/or Experience Required:

- At least a Master's degree in Computer Science / Math / Physics.
- Adept in both C++ and Python.
- Experience with Maya: both using Maya and writing Maya plug-ins.
- Experience with the latest in computer vision. A candidate should be comfortable with optimization techniques (like bundle adjustment) and image processing.
- A solid foundation in mathematics. This job will require that you be able to read, understand, implement and improve on the latest research; you should be able to read a paper from SIGGRAPH and understand it. Specifically, an understanding of optimization techniques and calculus is essential.
- A broad knowledge of and curiosity about computer graphics is very important. We solve many varied problems at Digital Domain; this job will not be only about computer vision and motion capture.

Working Conditions and Environment/Physical Demands:

- Office working environment.
- Hours for this position are based on normal working hours but will require extra hours depending on production needs.
- Walking/bending/sitting.

The above statements are intended to describe the general nature and level of the work being performed by people assigned to this work. This is not an exhaustive list of all duties and responsibilities associated with it. Digital Domain Vancouver management reserves the right to amend and change responsibilities to meet business and organizational needs. To apply for this position, submit an application at www.digitaldomain.com/careers/ and select Vancouver from the location drop-down menu.

-

Hello everyone, I want to convert a .bvh file to a .cmd file with the mcbiovision command-line tool, but I don't really understand the syntax:

    mac-pro-de-jms:~ JMS$ mcbiovision -e -c -o -j /walking_2.bvh /walking_2.cmd
    Unable to open /walking_2.cmd for reading
    mac-pro-de-jms:~ JMS$ mcbiovision -e -c -o -j ./walking_2.cmd
    Usage: mcbiovision [-e -b -d] [-c] [-v] [-j object_script] [-o -w -f -s -i [-n -t -l chainfile]] infile [outfile]
    This program converts a BioVision motion file to a Houdini script & channel file(s).
    Run the script to create the geometry and channels.
    -e Parms are stuffed with expressions (chop, default).
    -b Parms are left blank.
    -d Parms are left blank and override channels created.
    -c Only create the channel file(s).
    -v Reverse joint rotation order.
    -o Create character using object joints.
    -s Name bones using only the end joint name, instead of combining start and end names.
    -w Create character using object joints + wire polys.
    -j Set the object creation script (eg "opadd geo %s").
    -f Create character using Forward Kinematics (default).
    -i Create character using Inverse Kinematics:
    -n Specifies bone length channel type (FK,IK only). 0 = none, 1 = always, 2 = auto).
    -t Dual bone chains with twist affectors (IK only).
    -l Specifies a file containing a list of bone chains (IK only). Each line of the file must be of the form: <IK Chain Root Joint> <IK Chain End Affector>
    mac-pro-de-jms:~ JMS$ mcbiovision -e -c -o -j /walking_2.bvh -o walking_2.cmd
    Unable to open walking_2.cmd for reading
    mac-pro-de-jms:~ JMS$ mcbiovision -e -c -o -j /walking_2.bvh /walking2_

Does anyone know how to do it? I'm pretty sure it's really easy and that it's my fault. I'd greatly appreciate any help. Thank you all in advance.
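Reading the usage text printed above, `-j` takes an argument (`object_script`). So in `-j /walking_2.bvh /walking_2.cmd` the .bvh path is consumed as the object creation script and `/walking_2.cmd` becomes the input file, which matches the "Unable to open ... for reading" error. The leading `/` also points at the filesystem root rather than the home directory shown in the prompt, and `-c` ("Only create the channel file(s)") is probably not wanted if the goal is the .cmd script. The sketch below builds the argument list in the order the usage text gives; it only uses flags from that text, and whether the tool then behaves as expected is untested here.

```python
import subprocess
from typing import List, Optional


def mcbiovision_args(infile: str, outfile: Optional[str] = None, *,
                     expressions: bool = True, channels_only: bool = False,
                     object_joints: bool = False,
                     object_script: Optional[str] = None) -> List[str]:
    """Build an mcbiovision argument list following its printed usage text.

    All flags come before the input file; -j consumes the next argument as its
    object creation script, so it is only emitted together with a script.
    """
    args = ["mcbiovision"]
    if expressions:
        args.append("-e")
    if channels_only:
        args.append("-c")
    if object_script is not None:
        args += ["-j", object_script]
    if object_joints:
        args.append("-o")
    args.append(infile)
    if outfile is not None:
        args.append(outfile)
    return args


if __name__ == "__main__":
    # Relative paths resolve against the current directory (~ in the post's prompt).
    cmd = mcbiovision_args("walking_2.bvh", "walking_2.cmd", object_joints=True)
    subprocess.run(cmd, check=True)
```

The equivalent shell command is `mcbiovision -e -o walking_2.bvh walking_2.cmd`, run from the directory that contains the .bvh file.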

-

Hello, I know mocap has been discussed several times on the forum, but I didn't find the answer to my question. I'm trying to map mocap to a character rig, but I cannot find the spine bones to link them to the mocap skeleton. Where are they, if there are any? I can see the controllers, but I can't link them... I think it will be the same for the head? I know it's probably a noob question, but that's what I am. Thanks, Doum