Search the Community

Showing results for tags 'deep'.

-

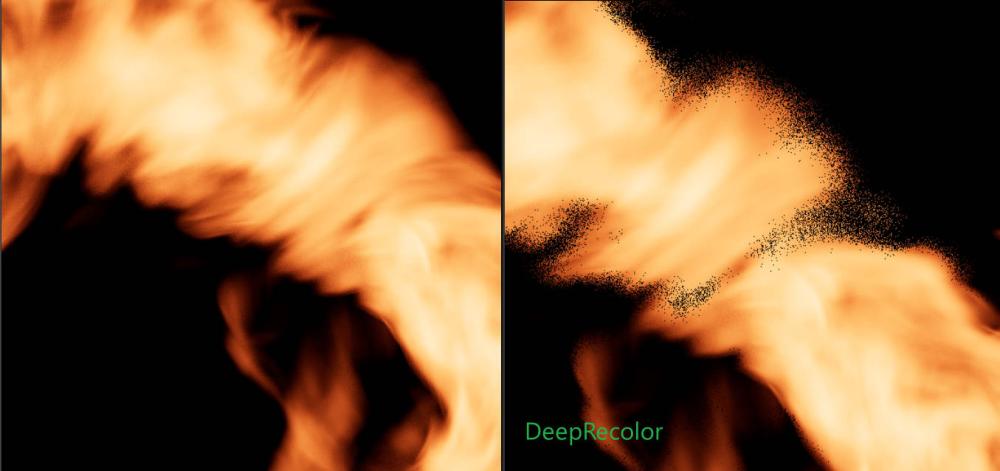

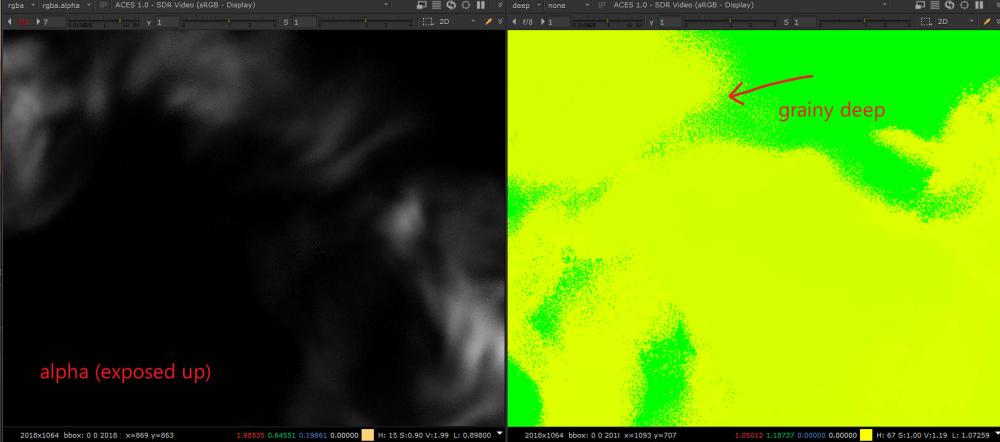

Hi all, I am seeking suggestions. We would like to render fire with Deep output for comp but are facing issues in production.

Scenario:

1) Karma as the production renderer (H20.5).
2) Deep output with NO matte holdout setup in 3D/Houdini is preferable (trading the artist time spent setting up holdouts for render time and disk space).

The FX department provides shaded fire as VDB. Depending on the shot and the type of fire, the fire density can be low or high, which leads to the following issues:

Issue 1 - lack of alpha or color-correction mattes: varying fire density translates to a less or more solid alpha. Compositors need to pull custom mattes to color-correct the fire for the desired look. Is there a recommended approach, in either FX or LIGHTING, that would provide proper mattes for the fire?

Issue 2 - DeepRecolor and DeepHoldout: when the fire density is low, the DeepRecolor-ed RGB is unusable (see attached pic), which makes writing out Deep for holdouts moot.

So, in productions where Deep output is allowed/preferred, I would like to learn what I am missing in terms of setting up the fire (in FX) and rendering it (in LIGHTING) so that comp can do accurate holdouts and color-correct the fire more easily (with mattes provided, NOT by pulling a luminance matte in comp).

PS. If the issue has to do with how the pyro shader is set up, please share your thoughts, as I don't know much about it.

-

Hello Magicians! I was wondering, is it possible to save a deep EXR from the render view? Let's say your render works properly locally, but on the farm it crashes all the time and nobody knows why. So you render the frames locally and save them into the sequence folder. The problem is that deep is needed as well. How do you do it? Maybe it is super obvious, but I cannot find it right now. Thanks in advance for any answers, and I wish you low render times. Cheers.

-

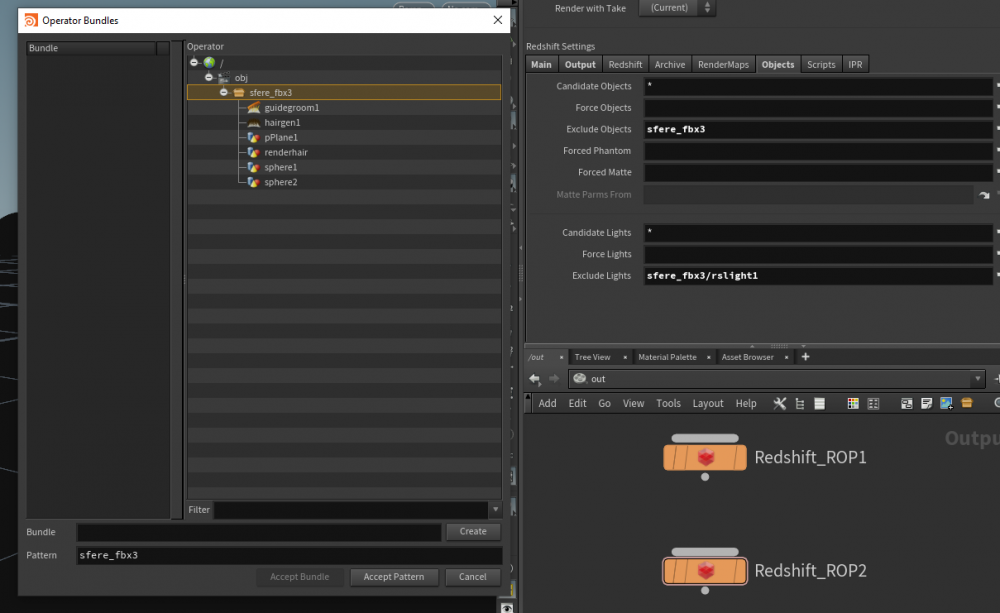

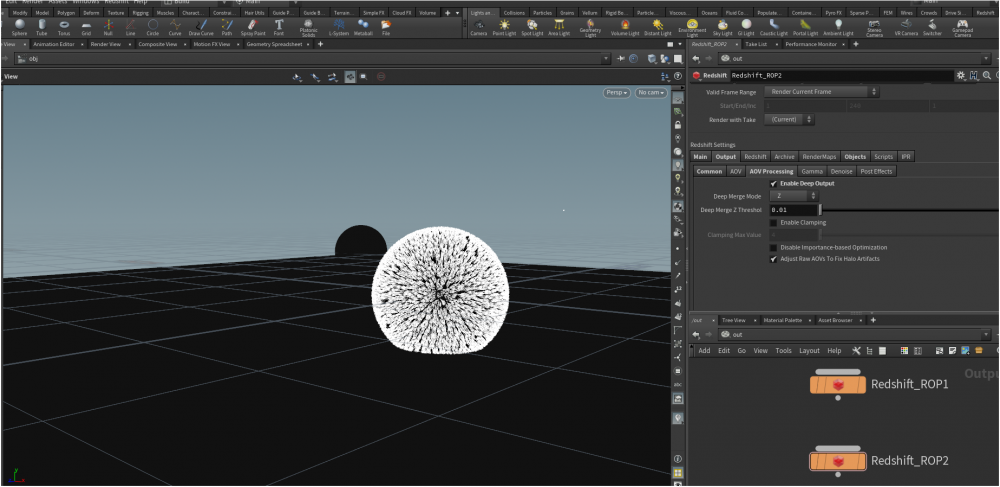

Hi, I'm trying to render the deep AOV with Redshift, but when I export it as an .exr and read it in Nuke through the DeepRead node, it says that there is no data for the deep layer. When I render the pass in Houdini it's just black, but it should somehow get the information about the geometry location in the viewport. Am I missing something important related to the camera or the objects/matte exclusion in the Redshift ROP node?

-

Hi everyone, I have been working with some renders of particles that have a material with 0 opacity assigned to them. The problem comes when I also try to render out a Deep Camera Map and bring it into Nuke. Using the usual workflow with deep images, I am able to see the particles only when I use the DeepToPoints node in Nuke. Before that, I don't get an alpha, nor can I see them. Is there any way I can export a map for the deep so I can make holdouts with it? Thank you!

-

Hey guys, I am doing a bit of an experiment. I haven't worked with DCM and DSM in Houdini much, so I could use some information. I want a "Full Deep" (not sure if that's the term) of my image: I want each sample of my deep to store my rgba at the z sampled. The result should be that when I DeepCrop this in Nuke, slicing back and removing objects in front of background objects, it reveals the pixel information behind those objects. I initially tried with DCM, and the deep EXR works great, but it does not seem to sample the rgba of the object behind. I read through the properties and tried some things, but I feel like I am missing a step to get this result. EDIT: I understand this is probably not a standard application, but is there a way to store that rgb information at each sample of the z? Any solutions? Cheers! particles_example.hip
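As a rough illustration of the "full deep" behaviour described in this post, here is a minimal Python sketch of one deep pixel. It assumes each sample carries its own premultiplied colour and alpha at a depth, and that the renderer actually stored the occluded sample; whether a given renderer does so depends on its settings, which this sketch does not model. The `flatten` function and its `near` parameter are hypothetical names, not part of Houdini or Nuke.

```python
# One deep sample: (depth, (r, g, b), alpha), with colour premultiplied by alpha.

def flatten(samples, near=0.0):
    """Composite samples front to back with 'over', skipping samples closer than `near`."""
    r = g = b = a = 0.0
    for depth, (sr, sg, sb), sa in sorted(samples, key=lambda s: s[0]):
        if depth < near:
            continue  # cropped away, like slicing off the foreground
        w = 1.0 - a  # how much of this sample is still visible
        r += sr * w
        g += sg * w
        b += sb * w
        a += sa * w
    return (r, g, b, a)

pixel = [
    (2.0, (1.0, 0.0, 0.0), 1.0),  # opaque red object in front
    (5.0, (0.0, 0.0, 1.0), 1.0),  # opaque blue object behind
]

full = flatten(pixel)               # red hides blue
cropped = flatten(pixel, near=3.0)  # red removed, blue revealed
```

The blue object only reappears after the crop because its sample exists in the list; if the hidden sample was never written, there is nothing behind the foreground to reveal.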

-

I am working on a sea of clouds. It takes too much time to render, so I want to render the clouds separately and composite them using a Deep Camera Map. But there is no relationship between the clouds, because they are rendered separately. I want to know if there is a way to add shadows to the clouds. Secondly, what's the difference between a Deep Shadow Map (DSM) and a Deep Camera Map (DCM)?

-

Hey guys, I was wondering if it is possible to export exr images with deep data information in them. It would be to use those in Nuke later for Deep Compositing. Also, I would like to know what is the difference between exporting a fully textured model from an explosion or a fire. Cheers! Trapo.

-

I have experience using deep rendered images as a compositor at large facilities. While the file size is large and Nuke is slower to work in, it is still functional, and the benefits of the deep render outweigh the file size and comp slowdown. I am doing some testing with Houdini for a whitewater sim and the DCM output is gigantic. Depending on the particles on screen, file size maxed out at 3.2 GB for one EXR frame. Nuke, needless to say, can't handle this; even a DCM output from Houdini with a relatively small number of particles will bring Nuke to a dead crawl. It seems like there are quite a few DCM settings buried. Does anyone have any experience optimizing the DCM settings to get a result that is good but saves on file size and computing for Nuke? Cheers, Ryan

-

Hello, I am struggling with adding all deep render properties to a Mantra node with Python. Doing so manually via the interface is quite simple: I go to the node - Edit Render Properties - filter *deep* - add them all. However, I am modifying Mantra nodes with Python and it would be great if I could do the same thing automatically. So far it seems rather tedious: I need to create all the ParmTemplates, match their signatures, append them to a FolderParmTemplate and set this on the node. Another thing worrying me is menu parameters. I do not need to create an interface with menus; can I just set the desired value? Is that equivalent to creating a string parameter and setting its value to the menu item's name (not label)? Thanks, Juraj

-

Hope someone has come across this: I am trying to use a deep EXR generated in another package to hold out volumetrics rendered in Arnold. Lacking a global option, I was hoping to be able to read it into my shader (possibly through a shadow() call similar to the old Prman/Mantra trick, or by comparing Rl with deep samples), but so far I have had no luck even reading a deep EXR. Has anyone come across this before? Thanks, Andras

-

Hi, we started using deep rendering and noticed that Nuke scripts literally explode if we forget to check "Exclude from DCM" for each extra pass. My problem is, how do we exclude base components such as Direct Lighting and Indirect Lighting from the DCM? There is no "Exclude from DCM" checkbox for those passes. I tried in H14 and H15. Do I need to manually export those passes in the Extra Image Planes section? Thanks, Maxime

-

I seem to remember that there used to be a render attribute to choose between continuous and discrete deep image samples. I can't find it in v15. Has it been removed, or am I mistaken that there was such an option? Thanks

-

Hey guys, I have been playing with a raytracing shader. The surface I apply the shader to and the actual position I am computing in the shader are quite a fair distance apart. It's like I'm looking through a window. All works well and good; however, I am now looking into implementing deep data in this shader. We can read deep data with dsmpixel, but is there any way of writing it out from the shader? At the moment my deep data comes out with the data of the actual plane surface that the shader is applied to, but not the objects and procedurals inside the "window" that my shader generates. Do any of you have any clues on how to feed this custom data to Mantra? Is it just a simple array variable to export, or twin arrays holding depth and color/alpha? Is it even possible? Thanks for any ideas you might have. J

-

Hello fellas, lately I have been needing a good way to composite smoke on a burning live-action actor. I animated a geometry to match the motion of the actor; as you might imagine, neither the motion nor the proportions match exactly, but most of the time it's good enough for sourcing smoke. #ACTUAL PROBLEM# However, I find it hard to obscure/mask/matte the smoke since the proportions and motion are not a complete match. #CURIOSITY TORMENT# I'm looking at deep images for the moment (I'm very new to them). I've tried DCM, which seems to be a good candidate for my situation. I got it to work, along with the recoloring logic, but the file size is huge: 700 MB for a single EXR containing rgba, deep and objid. But I don't understand DSM at all. I was kind of expecting something that looks like a depth shadow map, as my lights are also using depth map shadows, but when I import it in Nuke it looks empty (the deep channel only has (inf, inf) for (front, back)), and DeepToPoints crashes as soon as I plug it in. Please share some workflows with me.

-

Hello, I am working on a school project and I am using Arnold in Houdini for rendering. I would like to try a deep workflow, but I have a problem with the deep sample count in the rendered EXRs. I am using custom ID AOVs, and when trying to isolate certain objects in Nuke there are artefacts on edges (which is funny, because that shouldn't happen with deep data). I think there is a problem with deep sample merging (compression), which is controlled by the tolerance parameters. But modifying these parameters has no effect on the exported EXR. When sample merging is disabled, the EXRs work as expected in Nuke, but the EXR size increases drastically (2 GB per frame). Any ideas on how to find a balance between EXR size and sample count on edges? I don't know whether it is a bug or I am missing something. I have tested it with htoa 1.3.1 and 1.4.0. I am really stuck here. merge disabled: merge enabled:

-

Hello, I am working on a school project and we decided to try a deep workflow. But I am encountering many problems, as I have nobody to explain the correct process to me. Today I managed to solve a very weird problem. When I imported a deep sequence rendered with htoa into Nuke, I wanted to composite a textured card into it. Because the sequence has a moving camera, the imported deep data seemed to be floating in space (I understand why: Nuke aligned the deep data to the origin, so it floats because the distance changes). The next step should be connecting the camera to the camera input of the DeepToPoints node. This should transform the point cloud to its static place (the geometry is static). But it did not. When I connected the camera to the DeepToPoints node, the point cloud changed a little bit but remained floating in space. I cannot understand why, because in theory it should stick to its place. So, after hopelessly trying various modifications (changing camera units, importing the camera from Maya...), we came up with a solution. Now it works, but I don't understand why. The solution is really strange: it consists of using a DeepExpression node and dividing the deep data (not the color) by 68. It sounds silly, but it solved the problem, and the points are transformed to their correct positions. Any ideas why such a strange thing happened, and why 68?

-

Hi, do you think it is possible to include reflected objects in the deep EXR? In theory, this would create a "reversed point cloud world" inside the object. http://en.wikipedia.org/wiki/Mirror Thank you, have fun, papi

-

(Apologies for the repost, originally sent this to the sidefx list, thought folk here might have info too... )

Short version: Outputting primid as a deep AOV appears to be premultiplied by the alpha. Is that expected? Unpremultiplying helps, but doesn't give clean primids that could be used as selection mattes in comp.

Long version: Say we had a complex spindly object like this motorcycle sculpture created from wires: Comp would like to have control over grading each sub-object of this bike, but outputting each part (wheel, engine, seat etc.) as a separate pass is too much work; even outputting rgb mattes would mean at least 7 or 8 AOVs. Add to that the problem of the wires being thinner than a pixel, so standard rgb mattes get filtered away by opacity, which is not ideal. Each part is a single curve, so in theory we'd output the primitive id as a deep AOV. Hmmm....

Tested this: created a few poly grids, created a shader that passes getprimid -> parameter, wrote that out as an AOV, and enabled deep camera map output as an EXR. In Nuke, I can get the deep AOV and use a DeepSample node to query the values. To my surprise the primid isn't clean. In its default state there are multiple samples; the topmost sample is correct (e.g. 5), but the values behind are nonsense fractions (3.2, 1.2, 0.7, 0.1 etc.). If I change the main sample filter on the ROP to 'closest surface', I get a single sample per pixel, which makes more sense, and sampling in the middle of the grids I get correct values. But if I look at anti-aliased edges, the values are still fractional.

What am I missing? My naive understanding of deep is that it stores the samples prior to filtering; as such, the values returned by the DeepSample picker should be correct primids without being filtered down by opacity or antialiasing. As a further experiment I tried making the AOV an rgba value, passed Of to the alpha, then unpremultiplied by the alpha in a DeepExpression node. It helps on edges, but if I then add another DeepExpression node and try to isolate by an id (e.g. 'primid == 6 ? 1 : 0'), I get single pixels appearing elsewhere, usually on unrelated edges of other polys.

Anyone tried this? I read an article recently where Weta were talking about using deep ids to isolate bits of chimps; it seems like a useful thing that we should be able to do in Mantra.
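The fractional values described in this post are consistent with integer ids being averaged by coverage or multiplied by alpha. The following Python sketch illustrates only that arithmetic; it is a plausible explanation, not a confirmed description of what Mantra does internally, and `filtered_id` and `unpremult` are hypothetical helper names.

```python
def filtered_id(fragments):
    """Coverage-weighted sum of ids, as a pixel filter would compute for a colour."""
    return sum(prim_id * coverage for prim_id, coverage in fragments)

def unpremult(value, alpha):
    """Divide a premultiplied value by alpha, guarding against zero alpha."""
    return value / alpha if alpha > 0 else 0.0

# An edge pixel covered 25% by primitive 6 and 75% by primitive 2.
edge = [(6, 0.25), (2, 0.75)]
blended = filtered_id(edge)  # lands exactly on 3.0, the id of an unrelated primitive

# A single sample of primitive 6 at half opacity, stored premultiplied.
stored = 6 * 0.5
restored = unpremult(stored, 0.5)  # the id is recoverable when only one id is involved
```

Unpremultiplying recovers the id only when a sample contains a single primitive. Once two ids have been blended into one value, dividing by alpha cannot separate them, and the blend can equal some other valid id, which would look like stray pixels when testing `primid == N`.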

-

Hi, guys. I'm trying to play around with deep passes from Houdini 13.0.260 in Nuke 8.01, and I have two issues related to that. The first, which was discussed earlier, is the black viewport on the deepRead node (the solution is deepToImg with the check-box off). The second issue is that Nuke crashes when I try to apply a deepColorCorrect node after my deepRead. Has anybody encountered this problem, and is there a possible solution? Edit: It also crashes when applying a deepHoldout node.

-

Hi Everyone, I am facing the problem that I do not completely know how to render out deep data. I am setting the deep resolver to filename.exr in the mantra node, but it doesn't seem to be rendered. Also, how would you assign a material to an entire alembic imported network? Thank you very much in advance. Cheers, Jonas Jørgensen