
Showing results for tags 'pdg'.

-

Hello! I'm trying to learn a bit of PDG since it might be useful for some of my projects, but I'm having a few issues. For one, I wasn't able to find out how to remove a work item.

What I'm trying to do is search for a string in a bunch of files and, based on that, do some other things. Right now I'm simply trying to find the string in the files, but first I need the relevant files. I use the filepattern node for that, but there are a few files I don't want. I use a pattern for the extension (*.txt), but some files have a specific string (in this case "proxy") in the filename, and I would like to ignore those. I tried something like "thePath\*.txt ^*proxy*" to get all the .txt files without the ones with "proxy" in the name. It didn't work (maybe the syntax is wrong), but moving on.

So I have a bunch of files (i.e. work items) that I want to get rid of. I tried using the split node, but I don't know how to work with PDG attributes in it. It always either errors out or gives me this. I tried @filename="file.txt" and @pdg_filename="file.txt", with and without the quotes, and with backticks around the filename part, all without success. What's the correct syntax for this? I tried reading this, but without much result; I would need an example to understand it.

Since expressions didn't work, I moved on to Python. There is the work_item.dirty(True, True) command, which does what I want: I see the work item being removed in the node UI, but then Houdini freezes and crashes. So I'm seemingly out of options.

My questions are as follows:

1 - Is there a file pattern syntax that gets all .txt files except the ones with a specific pattern in the name? (mockup: "*.txt ^*proxy*")
2 - How do I use PDG attributes in a node as part of an expression? Something as simple as "@Frame=2", but in the form @filename="file.txt".
3 - Why does the work_item.dirty() Python command crash Houdini? Am I doing something wrong?

Test file attached. The zip contains some random .txt files with names to test what I want, along with the hip file. pdg_test.zip
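The filtering the first question asks for (all .txt files except those with "proxy" in the name) can at least be expressed in plain Python with the standard fnmatch module. This is a sketch under assumptions: it does not answer whether the File Pattern TOP's own pattern field supports exclusions, and where it would run in the network (for example inside a Python-based TOP that only keeps matching files) is left open.

```python
import fnmatch
import os


def keep_file(path, include="*.txt", exclude="*proxy*"):
    """True if the file name matches `include` and does not match `exclude`.

    Note: fnmatch.fnmatch follows the OS case rules (case-insensitive on Windows).
    """
    name = os.path.basename(path)
    return fnmatch.fnmatch(name, include) and not fnmatch.fnmatch(name, exclude)


def filter_files(paths, include="*.txt", exclude="*proxy*"):
    """Return only the paths that pass keep_file, preserving their order."""
    return [p for p in paths if keep_file(p, include, exclude)]
```

For example, filter_files(["a/scene.txt", "a/scene_proxy.txt", "b/image.png"]) keeps only "a/scene.txt".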

- 6 replies

-

Tagged with: remove work item, top (and 1 more)

-

Trying to get a string attribute from the selected work item to evaluate in a parameter. Integers and floats work with the @attrib_name syntax, but it seems strings do not? I've tried backticks, evals(), and combinations of quotes, with no joy. I've had to use a workaround where I interpret integers as strings later, but that seems unnecessary. What am I missing? I've included a very simple hip. Thanks in advance! pdg_str_attrib.hiplc

-

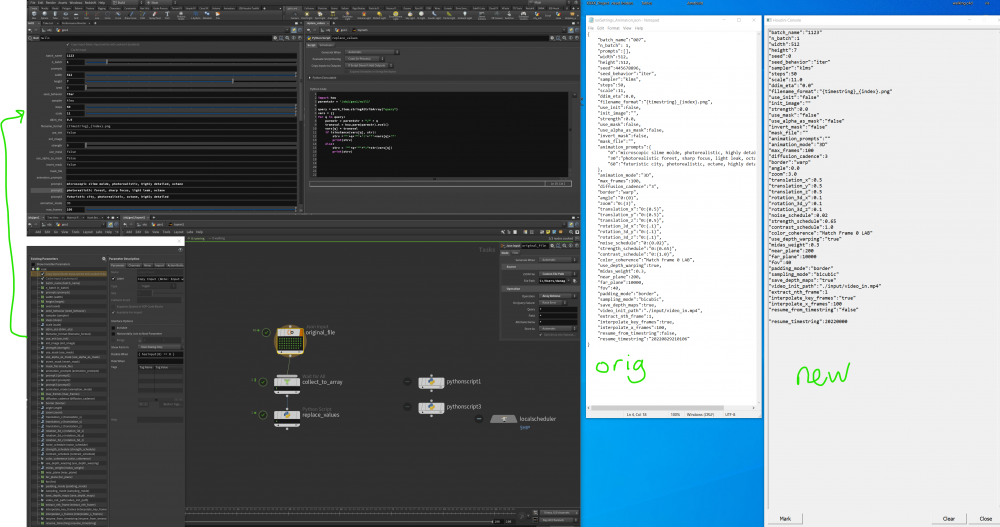

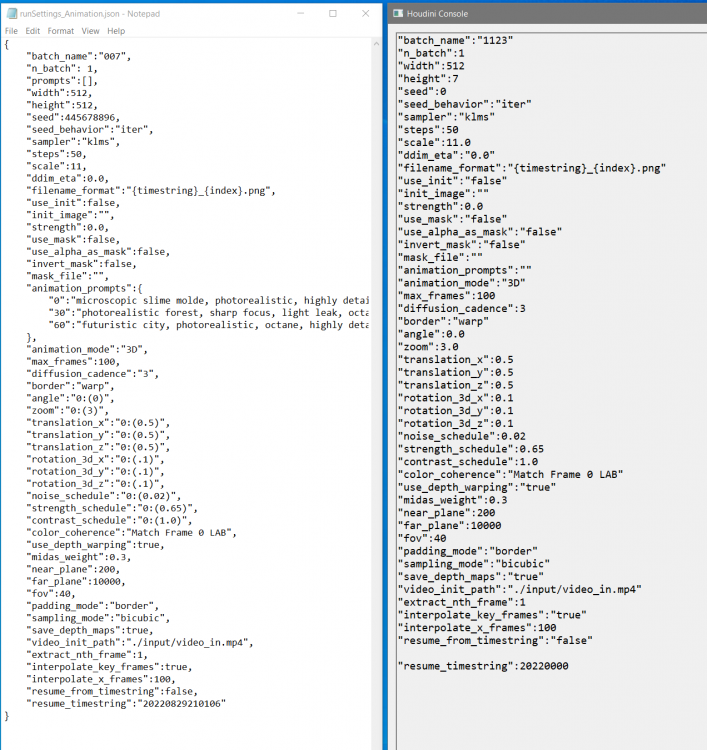

Hey all, I'm trying to customize a Python settings.json file with int, float, bool, and string values set up on a Null with parameter sliders. I've read in the initial JSON file I want to modify and have matched all the keys with the slider controls. I'm then collecting the keys into an array called query and trying to replace the original values in the .json with the slider values. This is what I have so far (Python novice), but I feel like there is a more solid way to do this. I know I'm not actually storing any files or values yet; I'm just printing to the console for now:

```python
import hou

parentstr = '/obj/geo1/null1'
# work_item is the current work item, provided by the Python Script TOP
query = work_item.stringAttribArray("query")
values = {}  # renamed from "vars", which shadows a Python built-in
for q in query:
    # Evaluate the slider on the Null whose name matches the JSON key
    parmstr = parentstr + "/" + q
    values[q] = hou.parm(parmstr).eval()
    if isinstance(values[q], str):
        # String values need their own quotes in JSON
        strv = '"' + q + '"' + ':' + '"' + values[q] + '"'
    else:
        strv = '"' + q + '"' + ':' + str(values[q])
    print(strv)
```

There's also one section, "animation_prompts", that goes one level deeper and would need some formatting love: I need to indent it and add a few other lines. Thanks in advance! -Dana
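A more robust route than building strings by hand is to load the file into a dict, replace values, and let the standard json module handle quoting, booleans and indentation of nested sections such as "animation_prompts". The sketch below assumes the slider values have already been collected into a {key: value} dict (as the loop above does); the keys in the usage example ("steps", "use_init") are hypothetical.

```python
import json


def apply_overrides(settings, overrides):
    """Return a copy of `settings` with keys replaced by `overrides`.

    If both the existing value and the override are dicts (e.g. a nested
    section like "animation_prompts"), they are merged one level deep.
    """
    updated = dict(settings)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(updated.get(key), dict):
            merged = dict(updated[key])
            merged.update(value)
            updated[key] = merged
        else:
            updated[key] = value
    return updated


def to_json_text(settings):
    # json.dumps quotes strings, writes True/False as true/false and indents nested dicts
    return json.dumps(settings, indent=4)


if __name__ == "__main__":
    original = {"steps": 50, "use_init": False, "animation_prompts": {"0": "a forest"}}
    overrides = {"steps": 80, "use_init": True, "animation_prompts": {"30": "a city"}}
    print(to_json_text(apply_overrides(original, overrides)))
```

Writing the result back would then be a json.dump(updated, handle, indent=4) into the target file.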

-

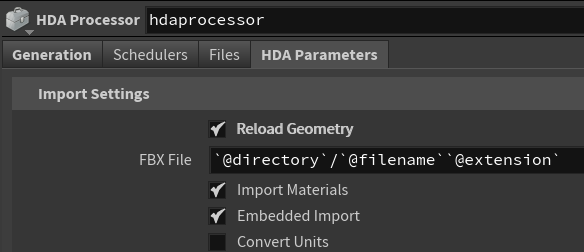

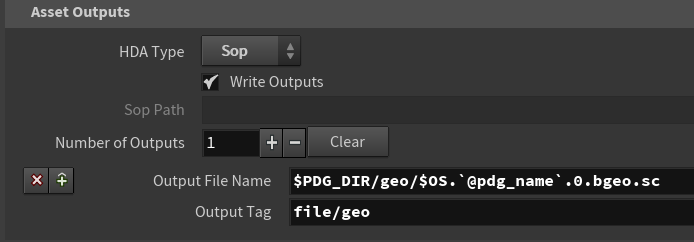

Hello there! The task is to go through folders with lots of FBX files (each with several assigned textures and materials) and render out a thumbnail of each object. Simple enough. The problem is that I can't find a way to do this without losing the texture and material data supplied by the FBX Importer SOP node, so the resulting renders come out gray, without textures. My approach is to start with a filepattern that gets wired into an HDA processor with a Labs FBX Archive Import inside and the relevant parameters exposed: The FBX Archive Importer neatly connects all the different materials each FBX file has, but this data gets lost when I wire in the ropkarma node afterwards (which makes sense, I guess, as that HDA processor should only output geometry :) ). Now my question is: how do I get the correctly assigned textures and materials to the Karma renderer? I feel like I'm making an obvious mistake but can't figure out what it is. The only other solution I came up with is baking all the maps into a single map and then using those baked maps down the line, but this seems overly complicated and time-consuming, and I lose texture fidelity in the process. How do you go about importing and rendering loads of files, each with an unknown number of assigned textures? Thankful for anyone pointing me in the right direction. Cheers!

-

Hi there, I'm trying to grab all attributes on the current work item in TOPs and assign them to points in SOPs. I wrote a Python script, and it works fine live in SOPs, but I get an error or a crash when I kick off the ROP Geometry Output TOP. I used a Python approach because I have several attributes to transfer and they may change in the future, so I'd like to avoid using @xxxx with hardcoded attribute names in SOPs. It would be great to get some suggestions. Thank you ~

-

Hello, I'm just getting into PDG and have an interesting problem I'm trying to solve. I have a list of files that I'm trying to sort using PDG. The files are labelled x0y0, x0y1, x0y2 ... x7y7. When I load them with the filepattern TOP, it sorts them as x0y0, x0y1, x0y2, x0y3, etc. Instead, I would like to re-sort them as x0y0, x1y0, x2y0, x3y0, x4y0 ... x0y1, x1y1, x2y1, x3y1, x4y1, etc. What would be the best approach for this? Is there a Python algorithm suitable for this that can be executed in TOPs? Maybe a feedback loop?
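The requested order is just "row (y) first, then column (x)", so a feedback loop should not be needed; a sort key that parses both numbers is enough. This is a plain-Python sketch; how the reordered list is fed back into TOPs (for example from a Python-based TOP, or by storing x and y as attributes and sorting on them) is an assumption not covered here.

```python
import os
import re

# Tile coordinates such as "x3y7" inside a file name
_TILE_RE = re.compile(r"x(\d+)y(\d+)")


def tile_key(path):
    """Sort key that orders by row (y) first, then column (x), numerically."""
    match = _TILE_RE.search(os.path.basename(path))
    if match is None:
        raise ValueError("no xNyN token in %r" % path)
    x, y = int(match.group(1)), int(match.group(2))
    return (y, x)


def sort_tiles(paths):
    return sorted(paths, key=tile_key)
```

Comparing the numbers as integers (not strings) also keeps x10 after x9 if the grid ever grows past 8x8.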

-

Hi guys, my query is related to the PDG Service Manager. I have two questions:

1) How exactly does the pool size work in PDG services? The help says "Sets the number of instances that will be created for the service pool." Are these instances the number of cores allotted to the work items? I am very confused here; any clarification would be helpful.
2) After creating a service, e.g. a "ROP Fetch Service", it takes some time to start. What is the delay about? I have to wait until the service lights turn green before cooking the node with the service on.

-

Hi, I'm spending the day figuring out how to use TOPs. How would I connect the Heightfield Output node to a wedge? I can override the filename, but that doesn't cause it to render. Cheers TOPStest.hiplc

-

Hi, my scene is roughly a PDG setup with some work items that have all been merged into a single work item, where all the attributes are merged into arrays. I am looking to access those attributes using component syntax (@pdgoutput.1 or @pdgoutput.2). This works when I manually type in the component, but I cannot get it to work in a For Number loop using the iteration count. How can I build an expression that takes the first part of the attribute ("@pdgoutput.") and concatenates it with a detail integer from a SOP loop, like @iteration? I have tried expressions such as @pdgoutput.`detail("/obj/geo/merge1","iteration",0)` or even strcat("@pdgoutput.",`detail("/obj/geo/merge1","iteration",0)`), but these all resolve to 0. I also thought of trying something like atof(), but that didn't work for me either. Does anyone know how to work with PDG array attributes in an expression like this? Is there a better way to do this?

-

How can I export all wedge attributes and the custom attributes I created along the way into a single CSV? I made this work with the CSV TOP node, but I had to enter the attributes manually. Using the 'By Attribute' option, I can specify 'wedgeattribs' as the attribute array to read from, but the other custom attributes do not appear there. I also tried work_item.attribNames() in a Python script, but this lists every attribute, even the ones created automatically, like the value type for each wedge attribute.
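Once the attribute values are in plain {name: value} dicts (one per work item, e.g. gathered via work_item.attribNames() as mentioned above), writing the CSV is a job for the standard csv module; the real question is which columns to keep. The sketch below takes either an explicit column list (wedge attributes plus your own) or an exclusion set. The exact names of the automatically created attributes are not given in the post, so what goes in `exclude` is an assumption you would fill in.

```python
import csv
import io


def rows_to_csv(rows, include=None, exclude=()):
    """Return CSV text for a list of {attribute_name: value} dicts.

    include: explicit column list, in order (e.g. wedge attribs plus custom ones).
    exclude: names to skip when the columns are collected automatically.
    """
    if include is None:
        columns = []
        for row in rows:
            for name in row:
                if name not in columns and name not in exclude:
                    columns.append(name)
    else:
        columns = list(include)

    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns)
    writer.writeheader()
    for row in rows:
        out = {}
        for name in columns:
            value = row.get(name, "")
            # Array attributes are flattened into one space-separated cell
            if isinstance(value, (list, tuple)):
                value = " ".join(str(v) for v in value)
            out[name] = value
        writer.writerow(out)
    return buf.getvalue()
```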

-

Hello everyone, I have a PDG setup where a large number of attributes are piped in from a CSV sheet, and I would like to convert every attribute ending with _prob to a float. My issue is that I can't figure out how to iterate over all attributes to check their names and cast them. I would like to do something resembling the following: foreach attribute: if "_prob" in attribute.name: cast attribute to float. I've been looking for quite some time and can't find a solution; if anyone could nudge me in the right direction, it would be great. Thanks in advance!
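The loop described above, written as runnable Python over a plain {name: value} dict standing in for a work item's attributes. Note it uses endswith, since the goal is names ending in _prob rather than containing it. Reading the names from a work item (e.g. with work_item.attribNames(), mentioned elsewhere on this page) and writing the floats back as typed PDG attributes are assumptions left out here, since that part depends on the PDG API of your Houdini version.

```python
def cast_prob_attributes(attribs, suffix="_prob"):
    """Return a copy of `attribs` where every name ending in `suffix` holds float(s).

    Values may be scalars or lists (PDG attributes are arrays); strings such
    as "0.25" coming from a CSV are converted as well.
    """
    result = {}
    for name, value in attribs.items():
        if name.endswith(suffix):
            if isinstance(value, (list, tuple)):
                value = [float(v) for v in value]
            else:
                value = float(value)
        result[name] = value
    return result
```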

-

I am trying to run the example file top_mayapipeline from the Houdini help directory, but the ImageMagick TOP throws an error when it cooks:

```
[12:47:39.497] Executing command: convert "C:/Users/user/AppData/Local/Temp/houdini_temp/mayapipeline/pdgtemp/render_1.png" -background black -resize 128x128 "C:/Users/user/Desktop/Desk Record/TUT/pdg/top_mayapipeline/images/mayapipeline.scale_down_render1_310.png"
Invalid Parameter - /Users
```

The render setup above ImageMagick ran, but the scaled-down image that ImageMagick is supposed to generate wasn't created. I checked and reinstalled ImageMagick, and it's working in other TOP networks. Any idea why this could be happening?

-

Hi, I'm trying to write out metadata when rendering my images: things like bounds, colors, etc. Similar to:

```
{
  "bounds_max": [0.9976696968078613, 0.5660994648933411, 1.2734324932098389],
  "bounds_min": [-1.0025358200073242, -1.335376501083374, -0.9231263995170593]
}
```

Currently I write the metadata to a JSON file using a Python script inside my "obj/the_thing_to_render" network. It works fine, but now I want the same data to be output when driven from a PDG network. All in all, I'm trying to find an easy way to write out some data for each variation I render (i.e. renderer_image_wedge_045.exr should have a file meta_data_wedge_045.json). Does anyone have experience with this, or some nice pointers on how to do this in a clean way?
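One way to keep the per-wedge naming consistent is to derive the JSON path from the image path rather than tracking it separately. The sketch below assumes the image name always contains a "wedge_NNN" token as in the example above, and takes the points as plain (x, y, z) tuples; inside Houdini the bounds could instead come from the geometry's bounding box. Everything here is plain Python, not a PDG API.

```python
import json
import os
import re


def metadata_path(image_path, prefix="meta_data"):
    """Map .../renderer_image_wedge_045.exr to .../meta_data_wedge_045.json."""
    folder, name = os.path.split(image_path)
    match = re.search(r"(wedge_\d+)", name)
    if match is None:
        raise ValueError("no wedge_NNN token in %r" % name)
    return os.path.join(folder, "%s_%s.json" % (prefix, match.group(1)))


def bounds(points):
    """Axis-aligned bounds of an iterable of (x, y, z) tuples."""
    xs, ys, zs = zip(*points)
    return {
        "bounds_min": [min(xs), min(ys), min(zs)],
        "bounds_max": [max(xs), max(ys), max(zs)],
    }


def write_metadata(image_path, points):
    """Write the bounds JSON next to the image and return the JSON path."""
    path = metadata_path(image_path)
    with open(path, "w") as handle:
        json.dump(bounds(points), handle, indent=4)
    return path
```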

-

I'm trying to establish a better link between Substance and Houdini, to get the best of both worlds. Basically, if I create an asset and get a look made in Substance from a given procedural shader, like an edge-and-scratch procedural wear material, I'd like to recreate the mesh-dependent maps live, directly in Houdini, without exporting, baking in Substance, importing back, and so on. When I link the AO and curvature inputs to an external pre-baked EXR map, it works (after I fixed a naming error in the Labs tools), but not when I try to connect them live, as shown above. The image plane doesn't seem to be recognized and taken into account in the SBSAR processing. Ideally, in the end, I'd process every asset with PDG. Has anyone done PDG map baking for an entire scene? I'm attaching my hip and the test Substance files I quickly created. Maybe it's a limitation of Substance archives that they can only take baked maps? But if so, I guess we should be able to trick it, shouldn't we? Many thanks guys

Vincent Thomas (VFX and Art since 1998)
Senior Env and Lighting artist & Houdini generalist & Creative Concepts
http://fr.linkedin.com/in/vincentthomas

Substance_automation_vince.hip testhoudini2.sbsar testhoudini3.sbsar

-

Hi magicians, I'm having issues with TOPs on a client machine, and the client asked me if we can have each TOP output (green dot) correspond to one frame; this would solve the issue and also let them set up Deadline easily. That would be something like: green dot 1 = frame 1, green dot 2 = frame 2. What I've tried so far is having a ROP geometry cache for every OBJ, which gives me the geo itself per frame, but there are certain things, like the HDRI dome whose .jpg background I'm controlling with a CSV TOP attribute, that I can't set per frame. Is there any way to read each TOP result per frame? The only way I can switch is by clicking each green dot: and I need the variables to change per frame: Thanks!

-

Hey magicians, has anybody experienced this error with TOPs? "Work item ... has an expected output file, but it wasn't found when the item cooked". It works fine on my machine, but when I pack the scene (via the archiveproject node) and run it on my client's farm, it shows this error. I did some troubleshooting: when the Redshift proxy is off, it renders properly. Any thoughts? Thanks!

-

I made a setup where I'm wedging a FLIP simulation and generating one flipbook per simulation. In the end I combine them using ImageMagick. Is it possible to wait for each wedge to finish generating its flipbook before starting the next simulation, and only when everything is done, generate the mosaic with ImageMagick? As it is now, TOPs computes 4 simulations simultaneously, then creates 4 flipbooks simultaneously, and only at the end joins all the flipbooks together. My problem with this method is that I sometimes run out of memory...

-

Afanasy 3.2.1 version supports TOPs: https://cgru.readthedocs.io/en/latest/software/houdini.html#afanasy-top-scheduler

-

The free video tutorial can be watched on any of these websites: Fendra Fx, Vimeo, Side Fx. The project file can be purchased on Gumroad here: https://gumroad.com/davidtorno?sort=newest

-

Hey folks, I'm executing a Windows .bat file, written out by a pythonprocessor TOP node, to run some things on another server. Everything works if I hardcode the filename of the batch file, but I want it to generate and execute a .bat file for each work item. I'm trying to access @filename in the pythonprocessor but not getting anywhere. How can I access the attribute so .format fills in the path correctly? Pidgin code:

```
outFile = `@filename`
filePath = "path to directory/{}".format(outFile)
```

The sample file docs are bereft of examples, so I'm wondering if I'm going about it the wrong way. Thoughts?

EDIT: I made a string parameter on the node I'm querying and pointed evalParm at it. Is that the most sensible way?

```python
import hou

# Read the per-item filename from a string parameter on the node
node = hou.node('/obj/topnet1/nodeName')
parmName = str(node.evalParm('parm'))
```
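Independently of how the per-item name is read from the work item (the post's open question, not answered here), the path building and file writing can be kept in small plain-Python helpers. In this sketch, item_name is whatever per-item value you end up with (e.g. the @filename value), and the commands list is hypothetical.

```python
import os


def batch_path(directory, item_name):
    """Build <directory>/<item_name>.bat with the OS path separator."""
    return os.path.join(directory, "{}.bat".format(item_name))


def batch_text(commands):
    """Batch file contents; Windows batch files conventionally use CRLF endings."""
    return "\r\n".join(["@echo off"] + list(commands)) + "\r\n"


def write_batch_file(directory, item_name, commands):
    path = batch_path(directory, item_name)
    # newline="" stops Python from translating the CRLFs already in the text
    with open(path, "w", newline="") as handle:
        handle.write(batch_text(commands))
    return path
```

Using os.path.join instead of "path to directory/{}".format(...) avoids doubled or missing separators when the directory comes from a parameter.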

-

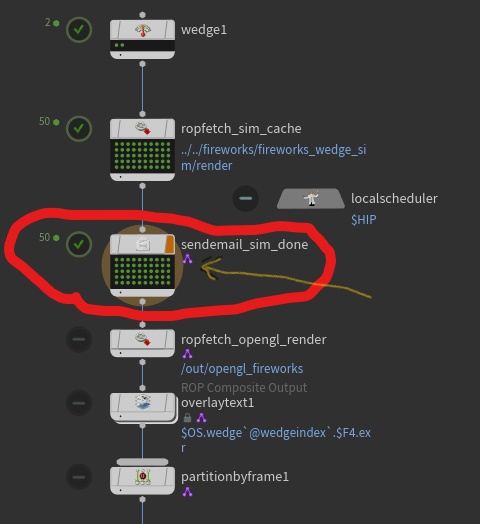

Hi, I have this TOP/PDG graph that sends an email (actually, the problem is that it sends too many emails). I'm currently testing it. It:

1. does a wedge (done)
2. runs a sim (done)
3. sends an email (the problem: it sends an email for each work item of the sim, in my case 50 emails!)

I only need one email telling me that all the sim work items have completed. I also need it to continue down the chain like this, sending only one email each time. So the idea is to have it do the sim, send an email when the sim is all finished, do a render, send an email when the render is all finished, etc.

*I also notice a little purple icon on some nodes. What does it mean? The purple icon first appears on the send email TOP in the chain.

EDIT: Reading the documentation, I found that the send email TOP is intended to be used after a Wait For All TOP or at the end of a large network. So now I'm just trying to find a way to "branch off" and send an email "off to the side", so to speak. I also found info on the purple icon: when a TOP node is dynamic, a purple badge appears on the node.

-

Tagged with: send email top, tops (and 3 more)

-

Hi all :), I am trying to load two sequences of VDBs from disk, for example cluster0.$F4.vdb and cluster1.$F4.vdb, with a geometry import in a TOP network, but it does not work so far. I have tried loading them with the filerange / filepattern node and partitioning them into 2 work items, cluster0 and cluster1, but I got either all frames at once or nothing in the geometry import. I also tried creating a @path attribute with $F4, but the TOP network does not seem to update the frames, so I only get the first frame of each cluster.
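One way to avoid relying on $F4 inside TOPs is to give every file an explicit cluster name and frame number, which could then be stored as work item attributes (hypothetical names) and partitioned by cluster. The grouping logic itself is sketched below in plain Python, assuming names of the form cluster0.0012.vdb; it is an illustration of the bookkeeping, not a PDG node.

```python
import re
from collections import defaultdict

# Matches names such as "cluster0.0012.vdb" -> base "cluster0", frame 12
_SEQ_RE = re.compile(r"^(?P<base>.+?)\.(?P<frame>\d+)\.vdb$")


def group_sequences(filenames):
    """Group sequence files by base name, each group sorted by frame number."""
    groups = defaultdict(list)
    for name in filenames:
        match = _SEQ_RE.match(name)
        if match is None:
            continue  # not part of a numbered .vdb sequence
        groups[match.group("base")].append((int(match.group("frame")), name))
    return {base: sorted(frames) for base, frames in groups.items()}
```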

-

Hello, I am currently trying to create a terrain using TOPs. However, some of the HDAs I created for it use camera data via the ndc VEX function; for example, all scattered points outside the camera's view are deleted. But when I add such an HDA to my TOPs workflow, the camera is not recognized, and TOPs uses default camera values instead (see attachments). Is there a way to make the HDA processor recognize the camera data? example.hipnc examplewithHDAs.zip

-

Hi everyone, I'm trying to set up a TOPs network to cache out sims with multiple parameters being sent to the DOP network. It seems like basic stuff, but nothing I'm doing works the way I expect. What I've tried:

- Using a wedge node to set a random attribute and using that attribute in an exterior DOP network: it caches into a viewable geo ROP output, but all the wedges were the same.
- Rebuilding the pyro sim purely in the TOPs network: it makes something, but when viewed, all the frames appear empty.
- Having the source inside TOPs and the DOP network outside: same result as the first.

-

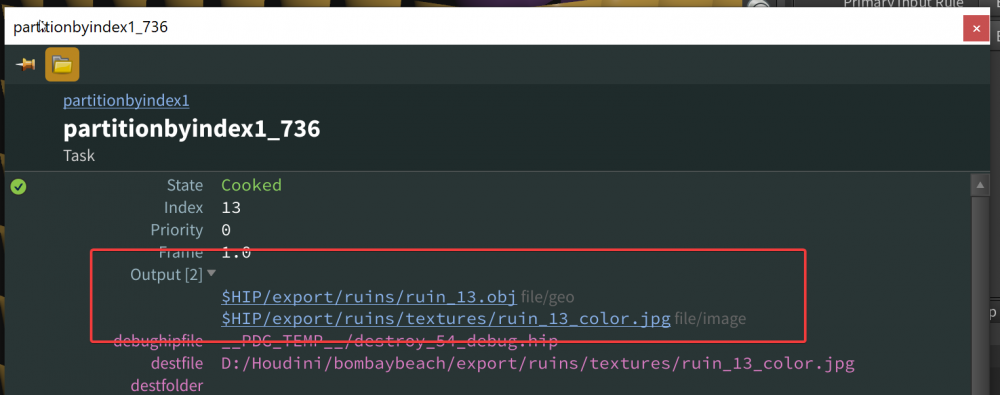

In a PDG network, how can I access the Outputs (see image) of a previous node within a Python Processor context? In other words, how can I access @pdg_output or workItem.output from a Python Processor node?

Update Feb 23, 2:13 pm: I tried the following, but it prints an empty array.

```python
# upstream_items and item_holder are provided to the Python Processor's generate step
for upstream_item in upstream_items:
    new_item = item_holder.addWorkItem(parent=upstream_item)
    print(new_item.inputResultData)
```

Update Feb 23, 2:24 pm (Solved): After struggling with this for 4 hours, I was finally able to figure it out by reading the HDAProcessor code located at C:\Program Files\Side Effects Software\Houdini 18.5.408\houdini\pdg\types\houdini\hda.py. It seems most of the default nodes store their outputs with an item.addExpectedResultData(...) call. So, to get the output values of a previous PDG node:

```python
for upstream_item in upstream_items:
    new_item = item_holder.addWorkItem(parent=upstream_item)
    # Outputs the parent declared as expected results
    parent_outputs = new_item.expectedInputResultData
```

I hope it helps someone.