Search the Community

Showing results for tags 'hqueue'.

-

I'm trying to get HQueue running with Houdini 20 and Redshift, and I keep getting this error when I submit my test scene. Any ideas on how to fix it, or what the problem could be?

    File "<stdin>", line 21, in __call__
    File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 204, in __call__
      response = self._dispatch_call()
    File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 159, in _dispatch_call
      response = self._inspect_call(func)
    File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 95, in _inspect_call
      result = self._perform_call(func, args)
    File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\controllers\core.py", line 58, in _perform_call
      return func(**args)
    File "<string>", line 2, in deleteNetworks
    File "c:\hqueueserver\python27\lib\site-packages\pylons-0.9.7rc4-py2.7.egg\pylons\decorators\rest.py", line 33, in check_methods
      return func(*args, **kwargs)
    File "<stdin>", line 60, in deleteNetworks
    ValueError: invalid literal for int() with base 10: 'new_1'

-

I have one Houdini FX license and one Houdini Indie license. When I send a job to HQueue from Houdini FX, it shows a "waiting for resources" message and never starts. Can't HQueue work with the Indie version of Houdini Engine? Any help would be greatly appreciated. Thank you.

-

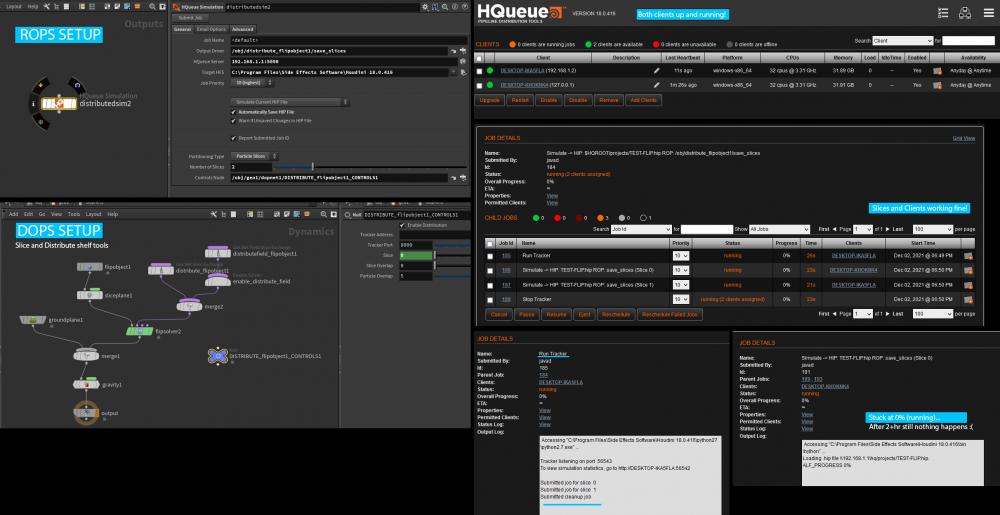

Hi! I have two PCs that are set up correctly with HQueue. HQueue renders work fine, and simulations without slices also work fine, but as soon as I use the slice and distribute shelf tools for FLIP and send the job to HQueue, it doesn't start working and stays stuck at 0%. I attached a screenshot. Does anybody know what the problem could be? Thanks!

-

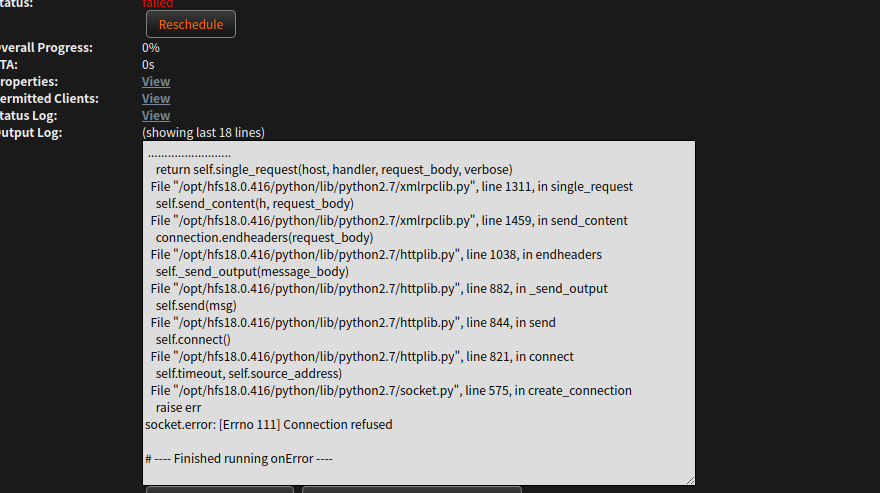

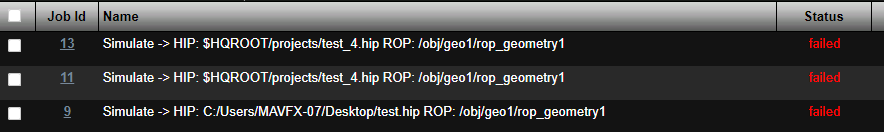

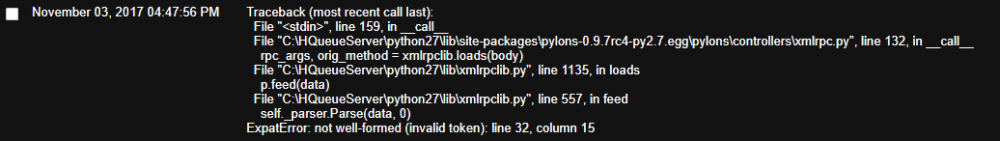

Hi guys, I'm "trying" to configure an HQueue server and client on several machines and it's a nightmare. When I submit a simulation, the job starts and I can see my (for now) two clients, but the job fails every time! I don't know why, and when I look at the report I can't tell where the error is. I think it's a path problem, but I have a NAS for the sim, and all the clients work and can see the NAS. We have used Backburner for 10 years and it works, but in hqserver.ini and hqclient.ini I don't know what I need to put for the shared path, etc. If someone can help, it would be nice. Regards. job_2_diagnostic_information (7).txt

-

Hi, I was wondering if there is a way to distribute a sparse pyro simulation. With legacy pyro you can easily distribute by slicing the container, because there is a predefined container to slice. Sparse pyro creates its bounds on demand, so there is no predefined container (bounds) and it can't be distributed the same way. Has anyone cracked this: simulating sparse pyro on multiple machines?

-

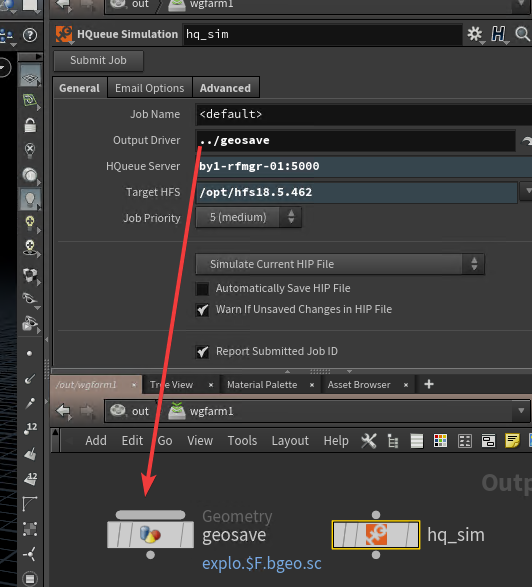

Hi, I'm trying to create an asset that submits a scene to HQueue, and I've run into a problem. Inside my asset I have a reference from one node to another (see picture). When the asset is unlocked, everything works fine, but when I lock the asset, HQueue gives the error message "ERROR: Cannot find node '/out/wgfarm1/geosave'".

-

Hi! We use Redshift for rendering and everything is OK, but we'd like to use some machines without a GPU to simulate DOPs or generate geometry through HQueue. The problem is that since these machines don't have a GPU, they fail to load the Redshift DLLs and OTLs. HQueue gets an error and fails to process the hip file. None of the Redshift OTLs are used or involved in the simulation, but HQueue fails anyway just because some unknown operators are present in the scene. I used to solve this problem on Linux by installing some NVIDIA libraries on the system, but I cannot solve it this way on Windows. So the question is: is there a way to force HQueue to ignore this type of error and process the hip file? Any other advice will be appreciated. Thanks in advance!

-

I'm making an ocean plane. Displacement works when I open Houdini and render locally, but when I submit the job to HQueue it doesn't work: when I checked the rendered image, it was just a flat plane. Does anyone know why that happens? Please help. Thank you.

-

Hello dear computer graphics friends, I wish you all the best for this year, full of RBD destruction, magical effects, cloth sims ... I'm starting this year with a very boring subject: rendering Octane Render images using PDG and HQueue on Windows 10. My jobs run successfully, but at the end I don't have any output images.

Log errors:

    *** OCTANE API MSG: Could not load 'Q:\RESSOURCES\TEXTURES\EnvMap\substance\sequence\substanceHDR.001.exr:rgba'.
    [Octane] 12:46:00 INFOR: [save image] -------- Saving the EXR "Q:/PROJECT/dev/04_3D/assets/Exterior/render_farm/MDL/work/houdini/scenes/Model/render/render_farm_Model_withOctaneNode_v001.Octane_ROP1.0001.exr" file
    *** OCTANE API MSG: OpenEXR: Cannot open image file "Q:\PROJECT\dev\04_3D\assets\Exterior\render_farm\MDL\work\houdini\scenes\Model\render\render_farm_Model_withOctaneNode_v001.Octane_ROP1.0001.exr". No such file or directory.

Workaround: if I manually switch all my paths from the mapped drive letter to the UNC path, everything works fine. After going through this with SideFX support, I set HOUDINI_PATHMAP = {'Q:/': '//***.***.*.*/folder','Q:\\': '//**.***.*.**/folder'}. This additional variable fixed the issue for my Houdini nodes but not for the Octane Render nodes. Does anybody have an idea how to fix this? Best regards, Mathieu Negrel

-

Hello everyone,

Context: I am currently building a VFX pipeline based on the "Rez" system for dynamic environment resolution on Windows 10. My question is about job submission on HQueue. Everything works fine, except that while my job is running, Houdini cannot find my textures or write any bgeo files to the server, because of the Windows drive letter mapping.

Example log error: *** OCTANE API MSG: Could not load 'Q:\RESSOURCES\TEXTURES\EnvMap\substance\sequence\substanceHDR.011.exr:rgba'.

If I change the mapped path to a UNC path, everything works fine, like this: '\\***.***.*.*\tutu\RESSOURCES\TEXTURES\EnvMap\substance\sequence\ where "\\***.***.*.*\tutu\" = "Q:"

Workaround: it looks like I have two options to fix this issue:

1. Writing a script that switches the Windows drive letter path to a UNC path before sending the job. This is brute force and not very elegant.
2. Using the default HQueue HDAs and adding my custom variables. This looks more natural to me, but I have no idea how it works. I tried adding a PYTHONPATH environment variable and nothing changed; I tried changing the HQCOMMANDS and nothing changed either.

Questions: Does anyone have experience with custom environments in HQueue, or with custom batch commands using the default 'simulation' and 'render' ROP nodes? Does anybody have an idea how to fix the fact that HQueue can't read a path that uses a drive letter? (I think hqserver.ini is configured correctly.)

Thanks for your time. I really hope you have a solution other than switching to Linux :-) Best regards, Mathieu

Rez: https://github.com/nerdvegas/rez
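The first workaround in the post above (rewriting drive-letter paths as UNC paths before submission) could be sketched roughly as follows. This is only an illustration in plain Python: the `DRIVE_MAP` contents, the `//fileserver/share` root, and the `to_unc` helper are hypothetical names, not part of HQueue or Houdini, and the real server address from the post is masked so it is not reproduced here.

```python
# Hypothetical mapping from drive letters to UNC roots.
# "//fileserver/share" is a placeholder, not the poster's real server.
DRIVE_MAP = {"Q:": "//fileserver/share"}


def to_unc(path, drive_map=DRIVE_MAP):
    """Return `path` with a mapped drive letter replaced by its UNC root.

    Backslashes are normalized to forward slashes first. Paths that do not
    start with a known drive letter are returned normalized but otherwise
    unchanged.
    """
    normalized = path.replace("\\", "/")
    for drive, unc_root in drive_map.items():
        # Compare case-insensitively, since Windows drive letters are.
        if normalized.lower().startswith(drive.lower() + "/"):
            return unc_root.rstrip("/") + normalized[len(drive):]
    return normalized


print(to_unc(r"Q:\RESSOURCES\TEXTURES\EnvMap\substance\sequence\substanceHDR.011.exr"))
```

A script like this would still have to be applied to every file parameter before the job is sent, which is the part the poster calls brute force; the sketch only covers the string conversion itself.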

-

Hi! First, I'd like to say that I have experience setting up and working with HQueue (render, sim). Now I'm trying to set up PDG on HQueue, but I've run into some strange issues. I've set everything up and tried to launch a render. It fails, and the output log says:

    C:/app/houdini/Houdini_18.0.460/python27/python2.7.exe: can't open file 'W:/3DCG/test_rs/proj_td/pdgtemp/21808/scripts/pdgmq.py': [Errno 2] No such file or directory

Part of the diagnostic information:

    Client Job Commands:
    =============================
    Windows Command: "C:/app/houdini/Houdini_18.0.460/python27/python2.7.exe" "W:/3DCG/test_rs/proj_td/pdgtemp/21808/scripts/pdgmq.py" --xmlport 0 --relayport 0 --start

But this file (pdgmq.py) does exist and the path is correct. Even more: if I copy and paste this command line into a cmd console, it works, and pdgmq.py starts OK. This is done under the same user that the HQueue client logs on as. I have no idea where to dig further.

-

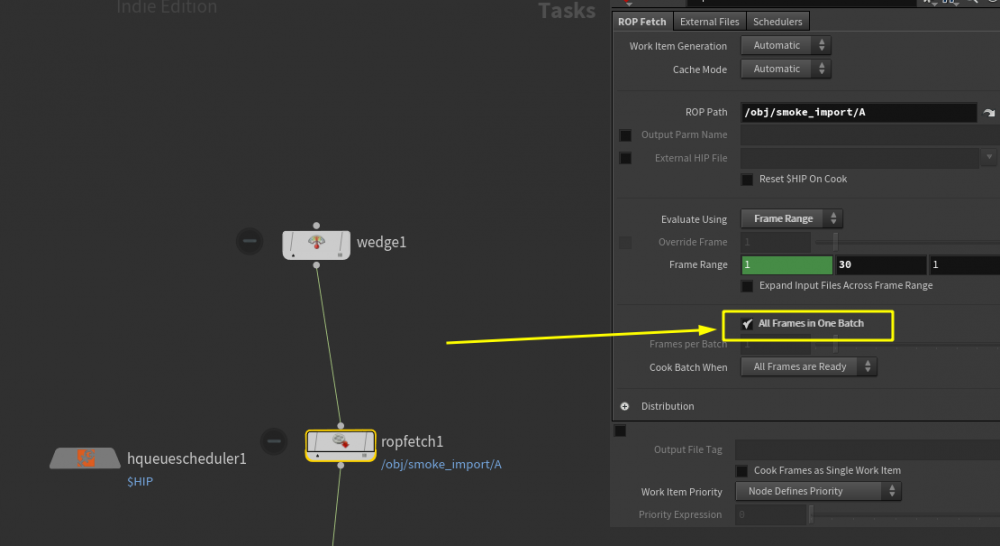

When I use the ROP Fetch in a TOP network, I noticed that when I check the option "All Frames in One Range" and submit it to HQueue, I get some errors: So what's the deal with that? Any advice is welcome, thanks. Also, can you run simulations with HQueue in Houdini 18?

-

Hi all! Has anyone had issues with HQueue in Houdini 18? In my case, after installing Houdini 18, I decided to upgrade HQueue from 17.5 to 18. The issue is this: every time I submit a job to HQueue, IFD generation starts normally until the last frame's IFD is done, and then the "master IFD generation job" shows a "FAILED" status. After that, the clients assigned to render seem to be rendering. At the end of each frame, the status of the frame also turns to "FAILED". But when I check the render folder in the output directory, all renders are done and look fine. Has anyone encountered this kind of problem? I tried downgrading back to 17.5 and it works as it should. Upgrading again brings back the issue described above. I'd be glad of any help.

-

I have the HQueue server and client installed and working on one machine. I am trying to add a second computer on my home network as a client. When installing the client on the second computer, I need to enter the server name and port number. I am not savvy with networking and do not know how to find this information. The Houdini docs say to set this field to the machine hosting the HQueue server. I didn't know what to enter, so I entered the IPv4 address of the first machine, where I installed HQueue, and used the same port number, 5000. This didn't succeed in adding the client to HQueue. Help would be appreciated. Is there a way I can look up the correct server name? Thank you.
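One way to narrow down a setup like the one above is to check, from the second computer, whether the server machine accepts TCP connections on the port at all. The following is a small sketch using only Python's standard `socket` module. The function name `server_reachable` is made up for illustration, and port 5000 is taken from the post; it is not read from any HQueue configuration.

```python
import socket


def server_reachable(host, port=5000, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the host name and attempts to connect.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name lookup failures.
        return False


if __name__ == "__main__":
    # Run this on the server machine to see the name it reports for itself.
    print("This machine's host name:", socket.gethostname())
```

Calling `server_reachable("<server name or IPv4 address>")` on the client machine and getting `False` would suggest a firewall, address, or port problem rather than an HQueue-specific one; getting `True` only shows that something is listening on that port.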

-

Hi! I've got an HQueue farm set up on Linux machines. I use it only for simulations. Sometimes I get hip files for simulation that contain a number of operators which are not needed for the sim (e.g. some custom ROPs, materials, etc.). But when a client loads such a hip file, it warns that it cannot load a specific OTL and refuses to load the hip. Let's say I have a scene with a geo node that references a Redshift material in its 'material' parameter. I don't want to render this; I just want to simulate and write geometry to disk. But HQueue refuses to do that because it cannot find the Redshift OTLs. Is there a way to force Houdini to ignore things like this? So you can't find an OTL? Never mind, just simulate; you don't need Redshift to simulate a DOP network.

Update: when I connect to the client via ssh and start hbatch in a terminal, it works. It loads the file and complains that it cannot recognize some node types, but the simulation works when I start it manually with the command (render -V rop_node). Why can't HQueue do the same instead of just failing the job?

-

Hi! I'm trying to render a job with a frame increment of 0.25, but HQueue renders only integer frames. Rendering on the local machine from Houdini works fine. I use the $N variable to number the rendered images. Is it possible to distribute fractional frames to an HQueue farm?
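For reference, the numbering the post above expects from a 0.25 increment can be written out in a few lines of plain Python. This is only an arithmetic sketch: `fractional_frames` is a hypothetical helper, and it assumes that $N counts the rendered images starting at 1. It says nothing about whether HQueue can be made to dispatch such frames.

```python
def fractional_frames(start, end, step):
    """Return (index, frame) pairs from start to end inclusive.

    `index` starts at 1 and stands in for an image counter such as $N
    (an assumption made for this sketch).
    """
    # A small epsilon guards against floating-point error in the division.
    count = int((end - start) / step + 1e-9) + 1
    # Multiply instead of accumulating to avoid drift over long ranges.
    return [(i + 1, start + i * step) for i in range(count)]


for index, frame in fractional_frames(1, 2, 0.25):
    print(index, frame)
```

Computing each frame as `start + i * step` rather than repeatedly adding the step keeps the values stable for increments that are not exactly representable in binary, such as 0.1.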

-

Howdy there! I'm having an issue here. I'm caching geometry to prep it for custom FLIP sims, and I need sub-frames, because FLIP needs sub-frames for the VDB volumes and geometry caches. When I cache on my local system, it works. But when I send the job to the server using HQueue, it writes only whole frames and does not include any sub-frames. I've checked all the available options, but still nothing. Screenshots are attached. These are the two things I've tried in HQueue. Any ideas? Thanks.

-

Hello, I'm looking to find out which off-the-shelf render farm managers people currently prefer for production, with likes and dislikes. Short background: we need to use our nodes for Houdini, Max, Maya, Fume, and a few other packages. We currently have about 100 nodes, and we don't have a dedicated render farm technician, so the most hands-off, off-the-shelf option is best. I have experience with HQueue, Rush, Deadline, and Qube, so I am also looking for any additional off-the-shelf software beyond those. Thanks -Ben

-

Hey! I'm in a situation where I have cached a huge number of points on disk and I want to render them. In the scene I read the bgeo.sc caches, merge them all together, trail the points to calculate velocity, apply a pscale, assign a shader, and render. If I run it on the farm with IFDs, it takes a lot of time to generate the IFDs for rendering, and I'm trying to reduce the time this task takes. I noticed that the IFD itself is tiny; the big part is a storage folder under the IFD folder, which contains a huge bgeo.sc file per frame that I suppose is the geometry I'm about to render. I wonder whether all of this is redundant, since I suppose all those operations could be done at render time. I tried setting the File SOP to "packed disk primitive", but it seems I cannot apply pscale after that; all the points render at pscale 1.

-

Hello, I've set up an HQueue farm across several machines with a shared drive. Almost everything seems to be working now (I had lots of intermittent issues, with jobs not making it to HQueue and failing at 0% progress; I think there may have been a license conflict). Anyway, the current problem is that I am unable to overwrite dependencies in the shared folder when I resubmit the HQueue Render node. As far as I can tell, there are no permission issues and the files are not in use. I can read, delete, and edit these files without problems from any of my client and server machines (they are also fine and not corrupt in the local directory). Any thoughts? Log below.

OUTPUT LOG
.........................

    00:00:00 144MB | [rop_operators] Registering operators ...
    00:00:00 145MB | [rop_operators] operator registration done.
    00:00:00 170MB | [vop_shaders] Registering shaders ...
    00:00:00 172MB | [vop_shaders] shader registration done.
    00:00:00 172MB | [htoa_op] End registration.
    00:00:00 172MB |
    00:00:00 172MB | releasing resources
    00:00:00 172MB | Arnold shutdown
    Loading .hip file \\192.168.0.123\Cache/projects/testSubmit-2_deletenoChace_2.hip.
    PROGRESS: 0%
    ERROR: The attempted operation failed.
    Error: Unable to save geometry for: /obj/sphere1/file1
    SOP Error: Unable to read file "//192.168.0.123/Cache/projects/geo/spinning_1.23.bgeo.sc".
    GeometryIO[hjson]: Unable to open file '//192.168.0.123/Cache/projects/geo/spinning_1.23.bgeo.sc'
    Backend IO

Thanks in advance

-

Hello, I'd like to remove HQueue completely from macOS. Deleting hqserver, hqclient, and the files in the LaunchDaemons folder isn't enough: when I try to reinstall it, I get an internal server error. I suspect this happens because parts of HQueue still remain on the hard drive. There must be some terminal commands for this, but I can't find anything about it in the docs and didn't have any more luck with Google. Thanks

-

Hello, I'm stuck. I think I managed to install and configure HQueue correctly. This is what I do:

1. Plug an HQueue Render node in after the Mantra node.
2. Submit the job, then open HQueue.

The job is submitted, but after 3 seconds it fails, and the worst part is that I don't know how to check what's wrong. If I click on the job ID number, it opens the job details window, but I can't see any "output log" to download. If I go to the Clients window, I can see that my client machine is recognized, with Availability set to Any @anytime. The server machine is a Mac Pro with a 3.7 GHz quad-core Xeon E5; the client is an iMac with a 3.2 GHz Intel Core i3. Any idea where to start to resolve the problem? Thanks!

-

Hello, we built an asset to cache and version our sims and caches. I extended it so that it can use the render farm to sim things out. To do this, I added a ROP network inside the asset containing the HQueue Simulation node. I then use the top-level interface parameters to 'click' the 'Submit Job' button on that HQueue Simulation node down below. However, unless I do 'Allow Editing of Contents' on my HDA, it fails on the farm with the following output:

.........................

    ImportError: No module named sgtk
    Traceback (most recent call last):
      File "/our-hou-path/scripts/hqueue/hq_run_sim_without_slices.py", line 4, in <module>
        hqlib.callFunctionWithHQParms(hqlib.runSimulationWithoutSlices)
      File "/our-hou-path/linux/houdini/scripts/hqueue/hqlib.py", line 1862, in callFunctionWithHQParms
        return function(**kwargs)
      File "/our-hou-path/linux/houdini/scripts/hqueue/hqlib.py", line 1575, in runSimulationWithoutSlices
        alf_prog_parm.set(1)
      File "/hou-shared-path/shared/houdini_distros/hfs.linux-x86_64/houdini/python2.7libs/hou.py", line 34294, in set
        return _hou.Parm_set(*args)
    hou.PermissionError: Failed to modify node or parameter because of a permission error. Possible causes include locked assets, takes, product permissions or user specified permissions

It seems that unless I unlock the asset, the Submit Job button can't be pressed. Here's how I linked the interface button to the submit button. Thanks for your input.

-

Hi guys, yet another HQueue problem. My team and I expanded our university's render farm, and we're now at about 100 clients. Everything is working great, but the HQueue web interface got slower and slower with each client we added, and it is now nearly unusable. We've tried different browsers (Chrome, Firefox, Internet Explorer...), but nothing changed. Our setup is pretty much the one suggested by SESI in the help section, so the shared folder and the hqserver are on two different machines. Do you guys know this problem, or maybe even have a solution for it? Thank you, Philipp

-

Hello, I can send the project to the HQueue server and the job starts running, but it cannot render. The error information is attached.