Showing results for tags 'aov'.

-

Arnold HTOA: I'm posting my note on creating a motion blur AOV for packed instances. I do all lighting and rendering inside Houdini. The note also describes my understanding of packed geo and packed fragments. Link to note: https://medium.com/@vupham_37726/arnold-houdini-motion-blur-aov-bfd5b0731a0f The Arnold version I used for this note has been updated to pick up any native or packed geo/fragment and generate motion blur for it, so the procedural workflow with the Procedural Object Node no longer needs the extra steps required in the previous version. Versions used: HTOA 6.2.5.1, Arnold 7.2.5.1.

-

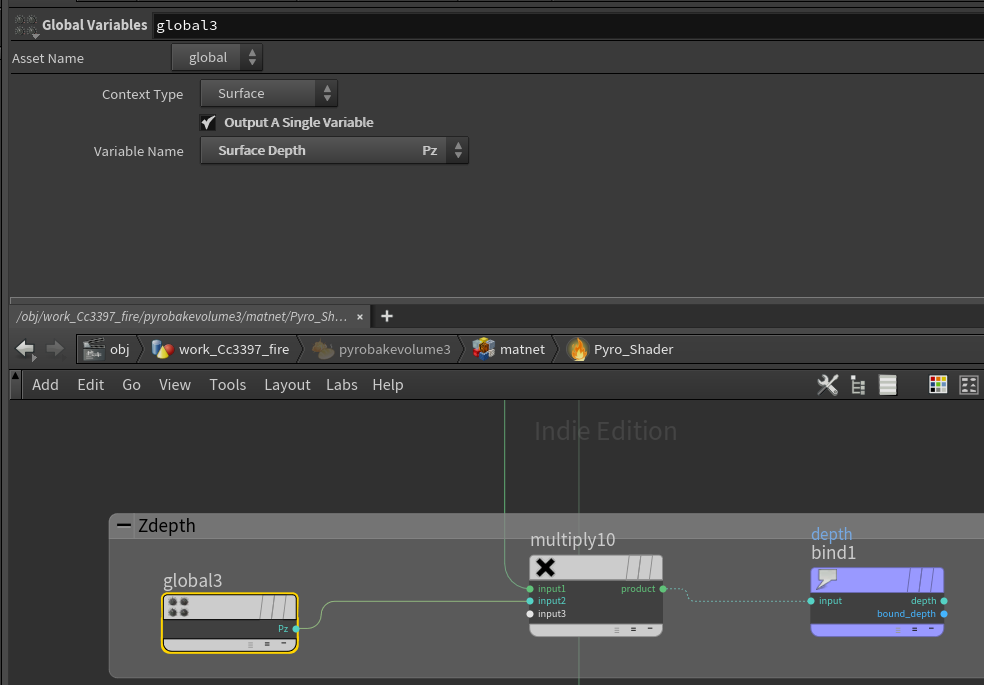

Hello. I have finally started using Solaris + Karma recently, and I have a question about AOV rendering with Karma. I would like to get depth when rendering pyro fire. Are there any good or already established approaches? In Mantra's mat I was able to pick up Pz from the Global node, but I don't know how to set it up in Karma + Solaris. Thank you in advance for your help!

-

Hey people, quick and simple question: has anyone figured out how to set up and render a custom AOV / render channel in V-Ray for Houdini? I've been trying things but I can't for the life of me find how to do it. I'm beginning to think it's just not possible, and that would spell doom for the renderer, which is otherwise quite okay as far as I'm concerned.

-

Hello all. This is my first post at odforce, so please let me know if I am missing any critical info or if this is the wrong sub-forum. I have recently been rendering out Position World AOVs (extra image planes) from Houdini's Mantra node for further use in Nuke (for compositing). What I found was that when I render a position_world pass (P, camera position, transformed to world space) out of Houdini and examine it in Nuke, the channel layers are named (r, g, b). When I add the extra image plane N (Shading Normals, a default option in Mantra), the channel layer names are (x, y, z). So my question is: is there a way to change the channel layer names of an extra image plane in Houdini? I specifically want the position_world pass to have the (x, y, z) channel layer names if possible, or at least to know it's not possible and move on. For context, I built a very simple test setup with only a cube and a camera. I transformed P (camera position) to world space, bound it to a variable (position_world), and referenced this in a Mantra node extra image plane with the filters "Closest Surface" and "minmax omedian". Again, if there is any missing information, please let me know and I will do my best to reply! Thanks!

-

Hi, I've set up a simple scene with a grid and a material applied to it, and I've exported the roughness map to an AOV. I've checked the luminance values and they are different from what I see in Nuke. Do you know why this happens? Mantra values are around 0.92; Nuke values are around 0.87. I've attached both the scene and the map: Roughness.zip

-

I need to get a clean, glowing Volume Lighting AOV in Redshift. The first attached image is what I have in the RenderView; it's precisely what I want. The second one is what I get in my EXR. I don't need all this smoke. How do I fix it?

-

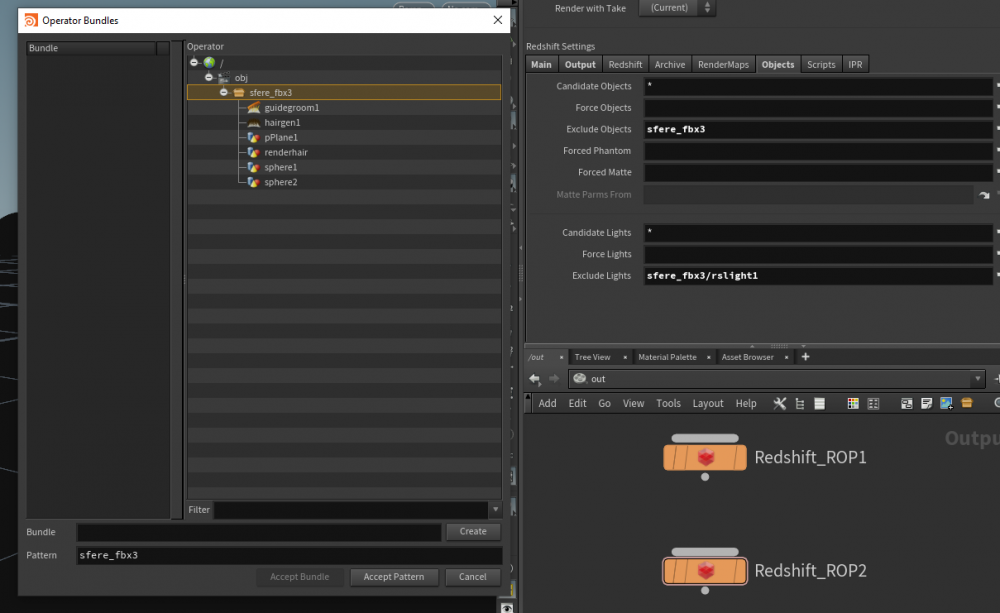

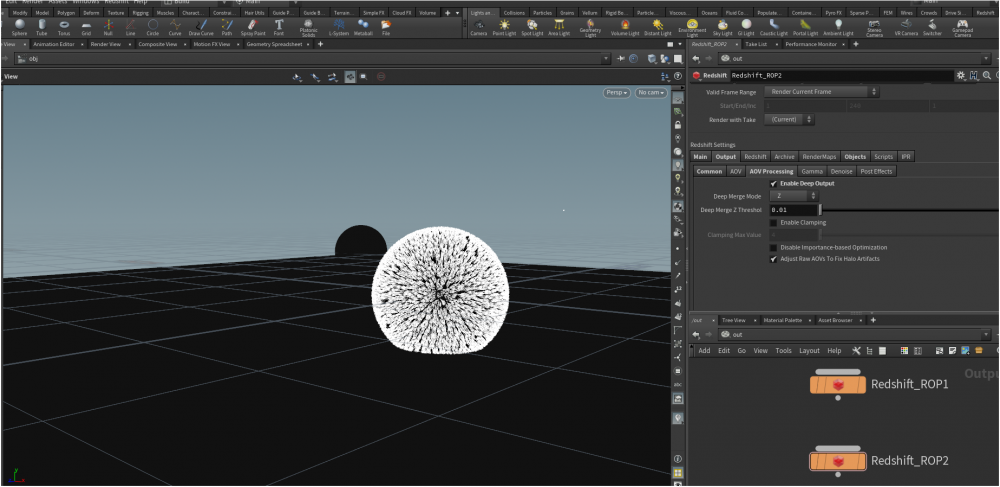

Hi, I'm trying to render the deep AOV with Redshift, but when I load the exported .exr in Nuke through the DeepRead node, it says there is no data for the deep layer. When I render the pass in Houdini it's just black, but it should somehow get the information about the geometry location in the viewport. Am I missing something important related to the camera or the objects/matte exclusion in the Redshift ROP node?

-

Hi, I'm trying to render Z depth for smoke in Houdini via Redshift. It seems to work fine with objects, but somehow not with Pyro smoke. It looks like Redshift doesn't "see" the volume. Any idea why? Thank you

-

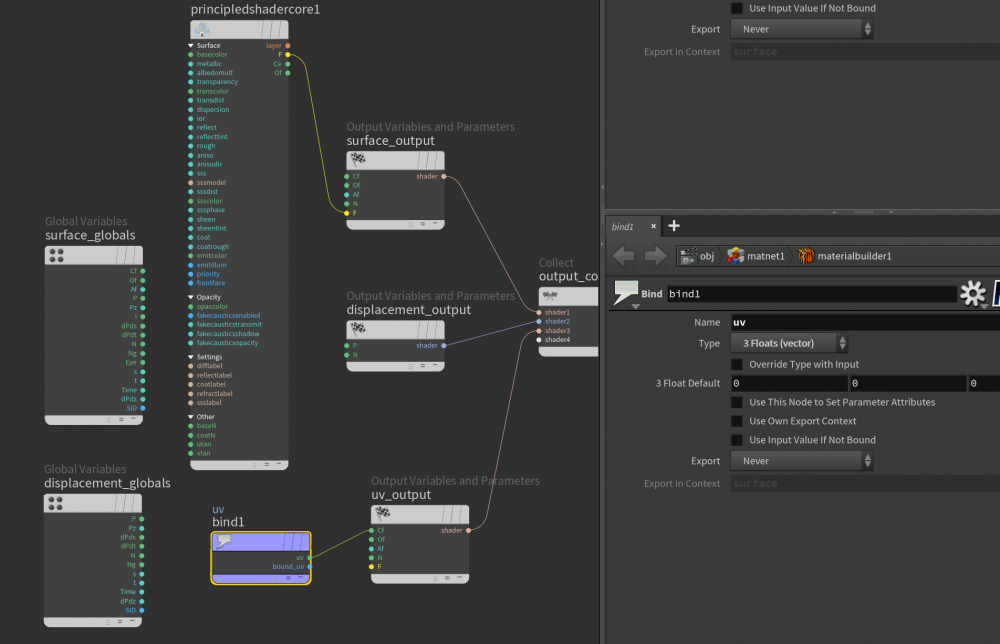

Is there a way to add a uv AOV in a shader? I've tried to bind uv to an output, but the uv pass is black. Any ideas? Thx untitled2.hipnc

-

I have a main volume that I want to render. However, I would love to be able to merge other volumes into it and use those volumes as extra image planes. This means that my second volume should NOT show up in my beauty pass, but should be available as an AOV. Is this possible? Right now I have it set up so that I render the second volume separately, but I wonder if the other approach could be done.

-

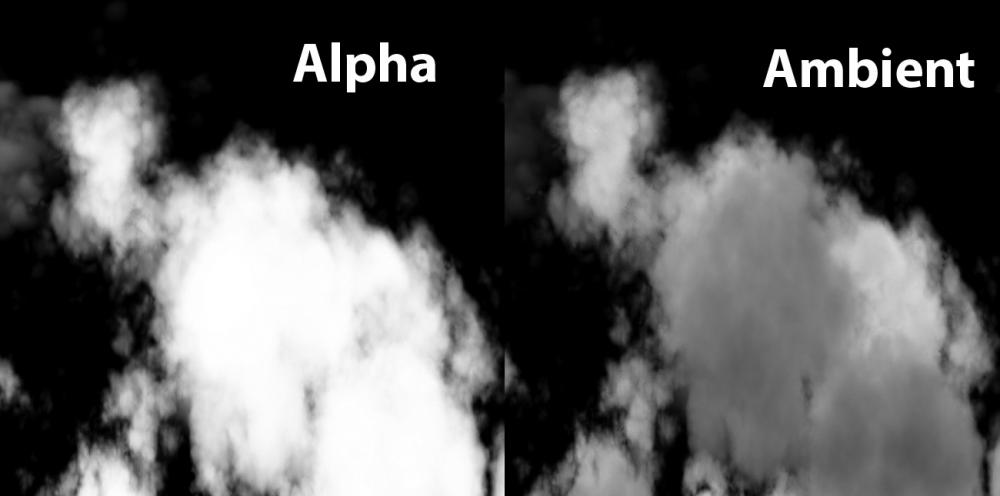

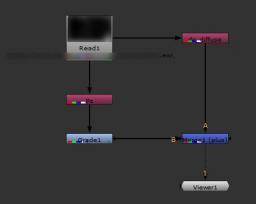

Hi, I want to render a custom pass for clouds in Mantra. The reference jpg shows a custom pass from Maya (density / ambient) and the alpha for the same clouds. In Maya a gradient ramp was used on the incandescence channel. Basically, where the clouds are denser the pass should be black, and where the density is lower it should be white. Can I replicate that with Mantra? I'm using the sky rig tool, so everything is calculated at render time. Thank you!
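
The mapping described above (dense regions black, thin regions white) amounts to an inverted, clamped remap of density. The Python sketch below only illustrates that arithmetic and is not a Mantra shader. The function name `density_to_pass` and the `max_density` normalization value are hypothetical.

```python
def density_to_pass(density, max_density=1.0):
    """Map a density sample to an inverted 0-1 value.

    Dense regions map toward 0 (black) and thin regions toward 1 (white).
    max_density is an assumed normalization value, not a Mantra parameter.
    """
    if max_density <= 0:
        raise ValueError("max_density must be positive")
    # Normalize, then clamp so out-of-range densities stay inside 0-1.
    normalized = min(max(density / max_density, 0.0), 1.0)
    return 1.0 - normalized
```

A ramp lookup could replace the final `1.0 - normalized` step if a non-linear falloff is wanted.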

-

Since the new matnetwork shading system came out, do we have a method to add AOVs without unlocking a default shader, by combining it with some kind of layer shader instead? If this is possible, adding AOVs would be easy, and not needing to unlock the shader could save file size, too. For instance: create a material builder and add AOVs such as a particle RGB_mask in there, create a sort of layer-combine shader, connect the material builder shader and a Principled Shader into it, then output it to Mantra.

-

I've run into this issue a few times now. I have a shader that generates several output variables for extra image planes. The variables it generates would be really useful in SOPs. The only thing I can think of that would let me run the same calculation on each point is to collapse the SHOP network into an OTL that could also exist inside a VOP. But the parameters are driven on the shader, and it seems a bit messy to channel-reference all the ramps and other parameters. Is there some way to apply the shop_materialpath and then compute and export a variable on a per-point basis? Thanks!

-

Hi there, I'm having a hard time exporting some motion vectors out of Mantra. I used a method that was described in this forum. Two get blur nodes, one set to 0 and the other one to 1. Subtracted them and piped into a custom direction vector attribute which I used to render out an image plane in Mantra. Unfortunately I'm not getting any motion vector in the exr file. The image plane is black and when trying to use it with a vector blur in Nuke or a velocity blur in Houdini's comp area nothing happens.
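
The subtraction described above takes the position at the later blur sample (1) minus the position at the earlier one (0). The Python sketch below shows only that arithmetic. The function name `motion_vector` and its arguments are hypothetical, and it does not reproduce the Houdini nodes or diagnose the black image plane.

```python
def motion_vector(p_open, p_close):
    """Return the per-component difference p_close - p_open.

    p_open and p_close are assumed to be positions of the same point
    sampled at blur time 0 and blur time 1.
    """
    if len(p_open) != len(p_close):
        raise ValueError("positions must have the same number of components")
    return tuple(b - a for a, b in zip(p_open, p_close))
```

The result is a zero vector whenever both samples return the same position, and a zero vector renders as black, so comparing the two sampled positions directly may be a useful first check.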

-

Hi, I'm trying to render with an objectID AOV in Arnold. I haven't used AOVs yet, so I'm a bit confused, and the Arnold docs are very meeeh about that. Do I have to make shaders for the AOVs? How do I set an ID on an object or a group? I'm still trying things out, but any info would be great.

-

Hi guys, I have an explosion piece I've been working on, working up to final render. Now I'm looking for a way of efficiently separating out each element (base explosion, dust blast, shockwave, trails). I would use deep, but I know deep files get huge for volumes quickly and I'm not sure how the Houdini deep pipeline works. I really don't want to add extra render time, so any handy or efficient tips? I also thought about rendering each element separately rather than all together, but each would need the holdouts/influence of the other elements; I tested this using force matte/phantom but couldn't get what I was after. I would provide a hip file, but I feel there's no need, as this is more of a general "how would you go about it?" question. Separating the elements will give me more control in comp and mean I can do breakdowns of the elements without rendering twice. Thanks, Chris

-

Is there any way to create object-based Mantra AOVs (for object ID mattes) instead of having to go through the shader, like how V-Ray for Maya handles it, where you can create an object property with an override object ID and throw multiple objects into it that will all inherit the same object ID? I'm referring to what would be RGB object mattes in other software. I know you can export custom AOVs inside a shader, but for my workflow I have three issues with that: 1) if you are doing quick look-dev and iterating through multiple different shaders, and you export that variable in one but quickly shift gears to a different look and forget to include it in the new shader, it won't render; 2) if you want multiple objects to share the same object ID matte, rather than having to annoyingly shuffle-copy everything together in Nuke, you have to duplicate the same variables for each shader; 3) and most importantly, and I don't really see a way around this, you need to unlock every shader HDA, which is, as I have read, extremely inefficient in H15, as their load time and memory footprint are greatly optimized in their VEX HDA definition state. Ideally this would be something you could implement at the SOP level in a wrangle or something. Also, is there any way to quickly enable/disable all extra image planes like you can in Maya/Max/Cinema/every other software, without manually unchecking every box? Then finally, when you export a float AOV and bring it into Nuke, it basically reads it as an alpha channel; it won't be visible to a Shuffle node as most AOVs would be, and you need to use a Copy node to extract it. That isn't a huge deal, except that I use a lot of Python scripts that automate a lot of this, and that breaks my workflow a bit. Any way around this? Any insight on this is greatly appreciated!
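
The object-level ID idea above can be sketched as a small packing scheme: each integer object ID is assigned to one channel of an RGB matte layer, so three IDs fit in each layer and objects sharing an ID share a matte. This is a hypothetical scheme in plain Python, not a Mantra feature. The names `matte_slot` and `matte_color` are made up for illustration.

```python
def matte_slot(object_id):
    """Return (layer_index, channel) for a non-negative integer object ID.

    IDs 0, 1, 2 land in layer 0 as r, g, b; IDs 3, 4, 5 in layer 1; and so on.
    """
    if object_id < 0:
        raise ValueError("object_id must be non-negative")
    layer, channel_index = divmod(object_id, 3)
    return layer, "rgb"[channel_index]


def matte_color(object_id):
    """Return the pure-channel RGB value an object writes into its matte layer."""
    _, channel = matte_slot(object_id)
    return tuple(1.0 if c == channel else 0.0 for c in "rgb")
```

Under this scheme the ID would live on the object (for example as an attribute), so swapping shaders during look-dev would not drop the matte.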

-

Hello everyone, I'm doing a destruction project where I need to isolate the front faces of bricks in a bullet sim so that I can export a UV pass of only the front faces to use in compositing. I know how to set up Mantra to export an overall UV pass, but I'm not sure how to isolate only the front faces of my brick geo in the UV pass.

-

Hello guys, I'm working on a scene with some atmospheric fog. The problem is that I can't find in which render layer the fog goes! It does appear in the beauty, but can't find it anywhere else. My theory is that the z-depth pass is used to create the fog in the beauty and thus I've tried to replicate the fog in comp using this theory. With success I might add. In Nuke if I shuffle out the z-depth, grade it and plus it with (for example) the diffuse (just quick and dirty) the same effect shows. Problem is that I can't recreate the exact same image (as if I would shuffle everything out and in again). I would love to create my own fog shader to output it to a render layer, but I don't have the knowhow. Also Houdini doesn't let me dive into the Z-Depth Fog node for examples. Does anyone know which render layer the fog ends up in? If not can someone give me a quick example of how to create my own simple fog shader? Thank you guys in advance, Russle P.S. Included a simple scene for review. Z-Depth.hip
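
The depth-based theory above can be sketched as a blend driven by depth instead of a plain plus. The Python sketch below assumes an exponential falloff; that is an assumption for illustration and not necessarily what the Z-Depth Fog node computes. The names `fog_amount`, `apply_fog` and the `density` parameter are hypothetical.

```python
import math


def fog_amount(depth, density):
    """Fraction of fog between camera and surface, in the range 0-1.

    Assumes exponential falloff; negative depths are treated as zero.
    """
    return 1.0 - math.exp(-density * max(depth, 0.0))


def apply_fog(color, fog_color, depth, density):
    """Blend a surface color toward the fog color by the fog amount."""
    f = fog_amount(depth, density)
    return tuple(c * (1.0 - f) + fc * f for c, fc in zip(color, fog_color))
```

Unlike a plus, this blend also attenuates the surface color as the fog amount grows, which may be one reason an additive comp does not reproduce the beauty exactly.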

-

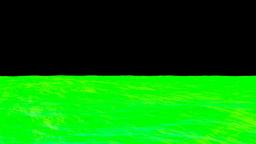

Hey guys! I'm playing with a large ocean render using Mantra PBR. In my scene I have a simple HDR environment light and an area light. The area light is actually creating all the specular highlights in this render, and the shader is the default from Ocean Waves. I'm not sure what passes to render for compositing. Right now I'm rendering a depth pass, a normal pass and a directreflection pass. I would really like to have the specular in a pass, and maybe some kind of motion vector pass. How do I achieve this? Since I'm using the default shader, I'm guessing I just have to use the correct VEX variables for the output extra image planes, if that makes any sense. And can you Houdini experts perhaps recommend some other passes to render for oceans and FLIP fluids? Any help is greatly appreciated, thanks!

-

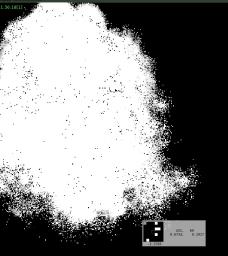

Hello everyone! I need a temperature render pass from a Pyro simulation for some post compositing purposes. When I render it as a "temperature" float 32 pass, I get negative values near the borders of the volume. Why does this happen? I can normalize this somehow after rendering, but I want to know why it is that way. There is a dead-end "Fit Range Unclamped" node (picture attached) in the default shader (Fireball in this case). I can pass the "temperature" parameter through some fit ranges or even a "Reshape" node, but that doesn't answer the question of why, and I don't think it is the right way. I should say that remapping in the shader tab hasn't worked properly for me: even with clamping and fit set to 0 - 1 there were negative values and values bigger than 1. Basically, I need a normalized (0 to 1) temperature render pass for my simulation. Maybe someone can help me with this? It would be much appreciated. I'm attaching 2 screenshots. I'm not attaching a .hip file because it is just a simple sphere with the Pyro Explosion shelf preset on it. P.S. I don't want to make another post right now, so I'll ask here: does anybody know a proper way to make a vector field texture from a Pyro sim in Houdini? Right now I'm using Volume Slice, rendering a remapped, normalized (0 to 1) motion vector pass from a top orthographic view and compositing it into a kind of flipbook, which is then converted into a volume texture DDS. Maybe there is another way to do it?
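
To make the fit-range behavior mentioned above concrete, the Python sketch below compares an unclamped linear remap with a clamped one. It only shows that an unclamped fit passes out-of-range inputs through as out-of-range outputs; it does not explain where the negative temperature values come from. The function names and the example source range are hypothetical.

```python
def fit_unclamped(value, src_min, src_max, dst_min=0.0, dst_max=1.0):
    """Linearly remap value from the source range to the destination range.

    Inputs outside the source range produce outputs outside the destination range.
    """
    t = (value - src_min) / (src_max - src_min)
    return dst_min + t * (dst_max - dst_min)


def fit_clamped(value, src_min, src_max, dst_min=0.0, dst_max=1.0):
    """Same remap, but the interpolation factor is clamped to 0-1 first."""
    t = (value - src_min) / (src_max - src_min)
    t = min(max(t, 0.0), 1.0)
    return dst_min + t * (dst_max - dst_min)
```

With an assumed source range of 0 to 2, an input of -0.5 maps to -0.25 unclamped and to 0.0 clamped. Filtering applied to the image plane after shading is a separate step that this sketch does not model.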

-

Hello, I have been trying some volumetrics with Arnold and they seem to work really fast. But I have an issue with recreating Beauty pass in compositing. Arnold can output 4 AOVs for volumes: volume (beauty), volume_direct, volume_indirect and volume_opacity. In my scene I am not using indirect lighting for volumes so volume_indirect is empty. Therefore volume (beauty) = volume_direct. But I don't know how to composite volume and volume_opacity AOVs as they both have RGB data. If volume_opacity had only one channel I could maybe set it as alpha for volume AOV. All AOVs in Arnold should be composited with additive operation (plus or screen) but it doesn't seem to work for volumes. Any ideas? Thanks
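
One possible reading of the RGB opacity question above is to treat each channel of volume_opacity as its own alpha and apply a premultiplied "over" per channel. The Python sketch below works under that assumption, which is not confirmed Arnold behavior. The function names are hypothetical.

```python
def over_rgb_opacity(volume_rgb, opacity_rgb, background_rgb):
    """Premultiplied 'over' with a separate opacity value per channel.

    Assumes volume_rgb is already premultiplied by its opacity.
    """
    return tuple(
        v + b * (1.0 - o)
        for v, o, b in zip(volume_rgb, opacity_rgb, background_rgb)
    )


def opacity_to_alpha(opacity_rgb):
    """Collapse an RGB opacity to one alpha value by averaging the channels."""
    return sum(opacity_rgb) / len(opacity_rgb)
```

If a single alpha channel is needed, averaging the three opacity channels is one simple choice, though it discards any per-channel differences.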

-

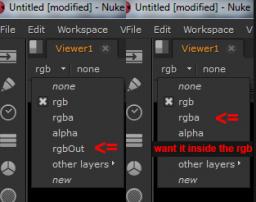

Hi all, in my ROP node I have set up the extra image plane with "Different File" turned on. I gave it a channel name like "rgbOut" and saved it as OpenEXR (Houdini 14). Viewing the extra image plane inside Nuke, there is no information in the rgb channel; Mantra puts it into a separate channel. I can't seem to find a way to render extra image planes (AOVs with their own file directory and name) into their own rgb channel, like any other 3D package would do. If I don't give it a channel name, it simply uses the parameter name as the channel name. I can't see the point of this. What is the workaround? Cheers, Gordon

-

Hi everyone! I've got an issue with the illuminance loop (H14 Apprentice). I'm trying to export the Cl (light color) parameter inside an Illuminance loop to obtain light color render passes for each light. I've seen in some videos that this works for other people, but not for me. When I toggle the For Each Light checkbox on, the passes I receive are black and empty. The scene is attached. Could you please help me? I think it's basic and simple, but I can't understand what is going wrong. Any help would be much appreciated. Thanks for your attention. P.S. I'm using the visual builder; I'm not a coder for now. loop_issue.hipnc

-

Hi, I want to output a pass with the shadows that are cast on an object that is matte in my beauty render. Since I can't think of a way to force matte and phantom objects in each different image plane, I created a second Mantra node to output just the shadow pass, which works fine. But is there a way to disable the primary beauty render on that second node, so I don't render unnecessary images? I just want the extra AOVs from that render node. Thank you, Georgios